This guide is part of our Complete Guide to AI Policy and Governance for Companies, the central resource for everything you need to know about AI compliance in 2026.

When an auditor asks “How do you govern AI usage in your organization?”, what evidence can you actually produce?

Most companies can point to a policy document. Some can show training materials. But when the auditor asks “Can you prove employees acknowledged this policy?” or “Show me evidence of enforcement over the past 12 months,” the conversation usually stops.

That gap between having a policy and proving it’s followed is exactly what an AI audit trail closes.

This guide explains what an AI audit trail is, why regulators and auditors increasingly require one, what should be in it, and how to build one that satisfies compliance requirements across GDPR, the EU AI Act, SOC 2, and other frameworks.

TABLE OF CONTENTS:

- What Is an AI Audit Trail?

- Why Regulators and Auditors Want One

- What Regulations Require Audit Trails?

- The 7 Components of a Complete AI Audit Trail

- What Auditors Actually Ask For

- Common Audit Trail Gaps (And How to Fix Them)

- Building an Audit Trail That Scales

- How PolicyGuard Creates Audit-Ready Documentation

- Frequently Asked Questions

1. What Is an AI Audit Trail?

An AI audit trail is a documented record of how your organization governs the use of artificial intelligence tools. It captures the policies you have in place, who acknowledged them, when they acknowledged them, what training was completed, and evidence that enforcement mechanisms are active.

Think of it as the evidence package that proves your AI governance program exists and functions, not just on paper, but in practice.

An audit trail answers the fundamental question auditors care about: “Can you demonstrate that your AI governance is real, not just aspirational?”

A complete AI audit trail typically includes:

- Policy documentation: The actual AI usage policies, version history, and approval records

- Acknowledgment records: Timestamped proof that employees read and acknowledged the policy

- Training completion: Records showing who completed AI governance training, when, and their assessment scores

- Enforcement evidence: Logs showing that governance controls are active at the point of AI tool usage

- Exception handling: Documentation of any policy exceptions, who approved them, and why

- Incident records: Any AI-related compliance incidents and how they were addressed

- Review cadence: Evidence that policies are reviewed and updated on a regular schedule

Without this documentation, you have an AI governance program in theory. With it, you have an AI governance program you can prove.
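
As a rough illustration, the seven categories above can be treated as a checklist and compared against whatever evidence is already on file. This is a minimal sketch, not a prescribed implementation: the `Component` enum, the `missing_components` function, and the file names are all hypothetical. It also treats an empty list as a gap, which a real check would refine, since having no incidents or exceptions can be legitimate.

```python
from enum import Enum


class Component(Enum):
    """The seven evidence categories listed above (names are illustrative)."""
    POLICY_DOCUMENTATION = "policy documentation"
    ACKNOWLEDGMENT_RECORDS = "acknowledgment records"
    TRAINING_COMPLETION = "training completion"
    ENFORCEMENT_EVIDENCE = "enforcement evidence"
    EXCEPTION_HANDLING = "exception handling"
    INCIDENT_RECORDS = "incident records"
    REVIEW_CADENCE = "review cadence"


def missing_components(evidence):
    """Return the components with no evidence on file.

    `evidence` maps a Component to a list of artifacts (file names, log
    references, and so on). An absent or empty list counts as missing.
    """
    return [c for c in Component if not evidence.get(c)]


# Hypothetical starting point: only two categories have any evidence.
evidence = {
    Component.POLICY_DOCUMENTATION: ["ai-policy-v1.pdf"],
    Component.ACKNOWLEDGMENT_RECORDS: ["acks-2026-q1.csv"],
}
gaps = missing_components(evidence)
print([c.value for c in gaps])
```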

2. Why Regulators and Auditors Want One

The regulatory environment for AI has shifted from “best practices” to “show me the evidence.” Three forces are driving the demand for AI audit trails.

Force 1: Regulatory enforcement is real.

The EU AI Act's first obligations, the bans on prohibited AI practices, began applying in February 2025, and its penalties reach up to €35 million or 7% of global annual turnover for the most serious violations. GDPR authorities issued over €1.2 billion in fines in 2024. These are not theoretical risks. Regulators are actively investigating and penalizing organizations for compliance failures.

When a regulator investigates, they do not accept “we have a policy” as evidence of compliance. They want documentation proving the policy is communicated, acknowledged, trained on, and enforced. An audit trail is that documentation.

Force 2: Auditors are adding AI to their scope.

Whether you are pursuing SOC 2, ISO 27001, ISO 42001, or undergoing client security assessments, auditors are now asking about AI governance. “How do you manage employee use of AI tools?” has become a standard question in security questionnaires.

Auditors are trained to verify, not trust. When you claim you have AI governance, they will ask for evidence. An audit trail provides that evidence in a format auditors recognize and accept.

Force 3: Clients are asking before they buy.

Enterprise buyers increasingly include AI governance in vendor assessments. “Do you have an AI usage policy?” is followed by “How do you enforce it?” and “Can you demonstrate compliance?”

Companies that can produce audit trail documentation close deals faster. Companies that cannot answer these questions lose deals to competitors who can.

3. What Regulations Require Audit Trails?

Multiple regulatory frameworks either explicitly require or strongly imply the need for AI audit trail documentation.

EU AI Act

The EU AI Act requires organizations deploying high-risk AI systems to maintain documentation demonstrating compliance. Article 12 specifically requires “automatic recording of events” (logging) for high-risk systems, and Article 26 requires deployers to keep the logs those systems generate. For a complete breakdown of EU AI Act requirements, see our EU AI Act Compliance Guide.

For organizations using general-purpose AI tools like ChatGPT or Claude in business contexts, the transparency and documentation requirements mean you need evidence of how these tools are governed, especially if they touch decisions affecting EU residents.

GDPR

GDPR’s accountability principle (Article 5(2)) requires organizations to demonstrate compliance, not just claim it. If AI tools process personal data (and they almost always do when employees paste information into them), you need documentation showing:

- Lawful basis for processing

- Data protection impact assessments where required

- Records of processing activities

- Evidence of appropriate technical and organizational measures

An AI audit trail provides the “technical and organizational measures” documentation GDPR auditors look for.

SOC 2

SOC 2 Trust Service Criteria require organizations to demonstrate that controls are designed and operating effectively. If AI tools are part of your operations, auditors will ask how they are controlled.

Relevant criteria include:

- CC6.1: Logical access controls

- CC6.7: Restricting the transmission, movement, and removal of information

- CC7.2: Monitoring system components for anomalies

- CC7.4: Responding to identified security incidents

An AI audit trail demonstrates that you have controls around AI tool usage and that those controls are operating.

ISO 27001

ISO 27001 requires documented information security policies and evidence that they are communicated and enforced. Annex A controls relevant to AI include:

- A.5.10: Acceptable use of information assets

- A.6.3: Information security awareness and training

- A.8.2: Privileged access rights

If AI tools are information assets (they are), you need documented controls around their use.

ISO 42001

ISO/IEC 42001, published in 2023, is the international standard specifically for AI management systems. It requires organizations to:

- Establish an AI policy

- Document AI system lifecycles

- Maintain records of AI-related decisions

- Demonstrate ongoing monitoring and improvement

An AI audit trail is essentially required documentation under ISO 42001.

HIPAA

Healthcare organizations using AI tools that touch protected health information (PHI) must document the safeguards in place. This includes:

- Policies governing AI use with PHI

- Training on those policies

- Access controls and monitoring

- Incident response procedures

An AI audit trail covering these elements helps satisfy HIPAA documentation requirements.

4. The 7 Components of a Complete AI Audit Trail

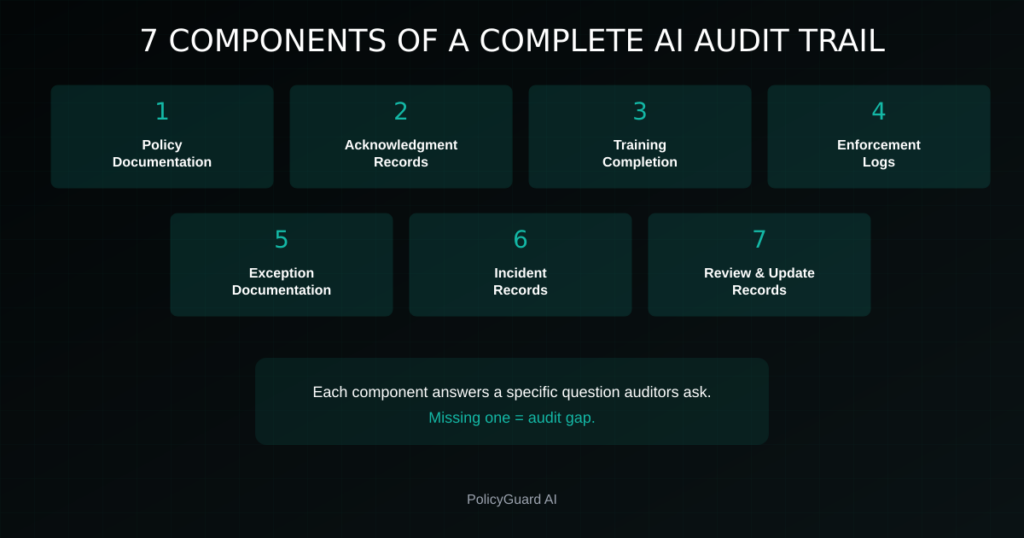

A complete AI audit trail has seven components. Each one answers a specific question auditors ask.

Component 1: Policy Documentation

What it includes:

- Current AI acceptable use policy (full text) — see our complete guide on AI Acceptable Use Policy Templates

- Version history showing policy evolution

- Approval records (who approved, when)

- Distribution records (how it was communicated)

What it proves: You have a formal, approved policy that has been officially communicated.

Auditor question it answers: “Show me your AI usage policy and when it was last updated.”

Component 2: Acknowledgment Records

What it includes:

- Employee name and identifier

- Policy version acknowledged

- Timestamp of acknowledgment

- Method of acknowledgment (digital signature, checkbox, etc.)

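- IP address or device identifier (optional but helpful)

What it proves: Each employee acknowledged a specific version of the policy at a specific time, not just that the policy was published.

Auditor question it answers: “Can you prove your employees read and acknowledged this policy?”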
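
The fields above map naturally onto a small record type. The following is a minimal sketch under stated assumptions: the `AcknowledgmentRecord` class, the helper functions, the in-memory list, and the sample employees are all hypothetical, and a real system would persist records in a database rather than a Python list.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AcknowledgmentRecord:
    """One acknowledgment; frozen so a stored record cannot be edited in place."""
    employee_id: str
    employee_name: str
    policy_version: str
    acknowledged_at: datetime          # stored in UTC
    method: str                        # e.g. "checkbox" or "digital_signature"
    device_id: Optional[str] = None    # optional, as in the list above


def record_acknowledgment(log, employee_id, employee_name,
                          policy_version, method, device_id=None):
    """Append a timestamped acknowledgment to the log and return it."""
    record = AcknowledgmentRecord(
        employee_id, employee_name, policy_version,
        datetime.now(timezone.utc), method, device_id,
    )
    log.append(record)
    return record


def acknowledgments_for(log, employee_id):
    """Pull every acknowledgment on file for one employee."""
    return [r for r in log if r.employee_id == employee_id]


# Hypothetical sample data.
log = []
record_acknowledgment(log, "E-1001", "Ana Ruiz", "1.2", "checkbox")
record_acknowledgment(log, "E-1002", "Ben Cho", "1.2",
                      "digital_signature", device_id="laptop-17")
```

Storing the policy version on every record is the design choice that matters here: it lets you answer "which text did this person agree to?" rather than only "did they click?".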

Component 3: Training Completion Records

What it includes:

- Training module completed

- Employee name and identifier

- Completion date and time

- Assessment score (if applicable)

- Certificate or completion confirmation

What it proves: Employees have been trained on AI governance, not just told a policy exists.

Auditor question it answers: “How do you ensure employees understand the policy, not just acknowledge it?”

Component 4: Enforcement Logs

What it includes:

- AI tools accessed

- Timestamps of access

- Policy reminder displayed (yes/no)

- Acknowledgment at point of use

- Any blocked access attempts

What it proves: Governance controls are active during actual AI tool usage, not just during onboarding.

Auditor question it answers: “How do you enforce this policy in practice?”
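
One common way to keep enforcement logs is as append-only JSON lines, one event per line. The sketch below assumes that format; the field names, the `enforcement_event` and `to_json_line` functions, and the sample values are illustrative and not taken from any particular product.

```python
import json
from datetime import datetime, timezone


def enforcement_event(employee_id, tool, reminder_shown,
                      acknowledged, blocked, at=None):
    """Build one enforcement log entry as a plain dict."""
    at = at or datetime.now(timezone.utc)
    return {
        "timestamp": at.isoformat(),
        "employee_id": employee_id,
        "tool": tool,
        "policy_reminder_shown": reminder_shown,
        "acknowledged_at_point_of_use": acknowledged,
        "access_blocked": blocked,
    }


def to_json_line(event):
    """Serialize an event as a single line of JSON, ready to append to a log file."""
    return json.dumps(event, sort_keys=True)


# Fixed timestamp so the example is reproducible.
fixed = datetime(2026, 1, 15, 9, 30, tzinfo=timezone.utc)
event = enforcement_event("E-1001", "ChatGPT", True, True, False, at=fixed)
line = to_json_line(event)
print(line)
```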

Component 5: Exception Documentation

What it includes:

- Exception requested

- Business justification

- Approver name and decision

- Approval timestamp

- Conditions or limitations on the exception

- Review or expiration date

What it proves: Exceptions to policy are controlled, documented, and approved, not informal workarounds.

Auditor question it answers: “How do you handle situations where employees need to deviate from policy?”
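
An exception record is mostly useful if it can answer "was this exception in force on a given day?". Here is a minimal sketch, assuming an exception applies from its approval date through its expiration date inclusive; the `PolicyException` class and the sample values are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PolicyException:
    request: str
    justification: str
    approver: str
    approved_on: date
    conditions: str
    expires_on: date

    def is_active(self, today):
        """True from the approval date through the expiration date, inclusive."""
        return self.approved_on <= today <= self.expires_on


# Hypothetical exception record.
exc = PolicyException(
    request="Use an unapproved transcription tool for one project",
    justification="Client-mandated tooling",
    approver="Head of Compliance",
    approved_on=date(2026, 1, 10),
    conditions="No customer personal data",
    expires_on=date(2026, 4, 10),
)
print(exc.is_active(date(2026, 2, 1)))
```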

Component 6: Incident Records

What it includes:

- Incident description

- Date and time of occurrence

- Employees involved

- Data or systems affected

- Response actions taken

- Remediation completed

- Lessons learned and policy updates

What it proves: When things go wrong, you detect them, respond appropriately, and improve.

Auditor question it answers: “Have you had any AI-related compliance incidents, and how did you handle them?”

Component 7: Review and Update Records

What it includes:

- Scheduled review dates

- Actual review completion dates

- Changes made during review

- Approval of updated policies

- Communication of updates to employees

What it proves: AI governance is actively maintained, not a one-time setup that gets stale.

Auditor question it answers: “How do you keep your AI governance current as tools and regulations evolve?”
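
Comparing scheduled review dates against actual completion dates is a simple check to automate. A minimal sketch, assuming reviews are stored as (scheduled, completed) pairs with `None` for reviews not yet done; the `overdue_reviews` function and the dates are illustrative.

```python
from datetime import date


def overdue_reviews(reviews, today):
    """Return scheduled dates that have passed with no completion recorded.

    `reviews` is a list of (scheduled, completed) pairs; `completed` is None
    while a review is still outstanding.
    """
    return [scheduled for scheduled, completed in reviews
            if completed is None and scheduled < today]


reviews = [
    (date(2025, 6, 1), date(2025, 6, 3)),   # completed two days late
    (date(2025, 12, 1), None),              # scheduled, never completed
    (date(2026, 6, 1), None),               # not due yet
]
late = overdue_reviews(reviews, today=date(2026, 1, 15))
print(late)
```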

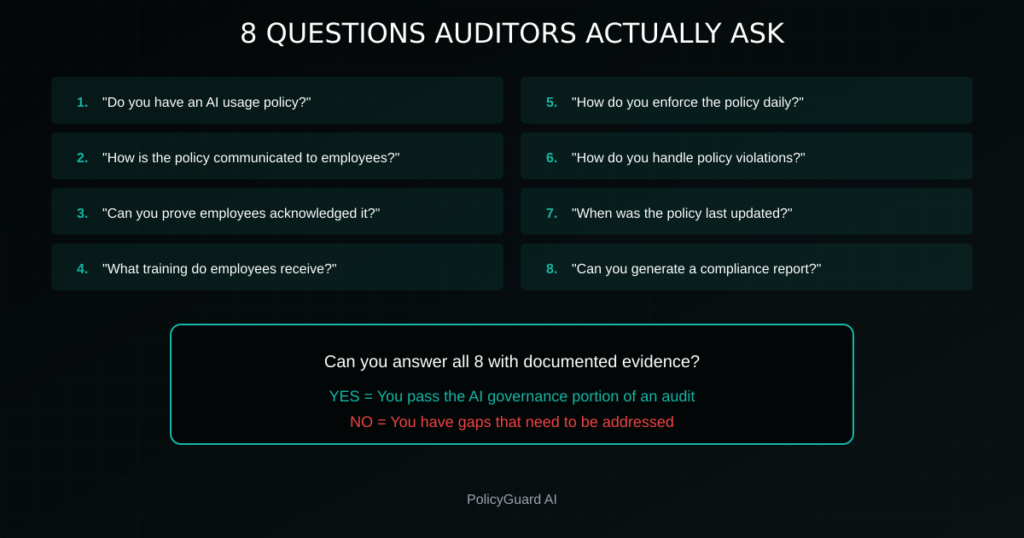

5. What Auditors Actually Ask For

Based on real audit experiences, here are the specific questions auditors ask and the evidence they expect to see.

Question 1: “Do you have an AI usage policy?”

Expected evidence: The policy document itself. PDF or accessible link. Current version with effective date.

Question 2: “How is the policy communicated to employees?”

Expected evidence: Distribution records, onboarding checklists, email announcements, or training assignments showing the policy was pushed to employees.

Question 3: “Can you prove employees acknowledged the policy?”

Expected evidence: Acknowledgment log with names, timestamps, and policy versions. Ability to pull a specific employee’s acknowledgment record.

Question 4: “What training do employees receive on AI governance?”

Expected evidence: Training curriculum, completion records, quiz or assessment scores demonstrating comprehension.

Question 5: “How do you enforce the policy during daily work?”

Expected evidence: Technical controls documentation, enforcement logs, screenshots of policy reminders at point of use.

Question 6: “How do you handle policy violations?”

Expected evidence: Violation response procedures, incident records (anonymized if needed), disciplinary guidelines.

Question 7: “When was the policy last reviewed and updated?”

Expected evidence: Review schedule, last review date, change log, approval records for updates.

Question 8: “Can you generate a compliance report for a specific time period?”

Expected evidence: Ability to export audit trail data for any requested date range, formatted in a way auditors can review.
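
Exporting for a date range comes down to a filter plus a serializer. The sketch below uses Python's standard `csv` module and an inclusive date range; the `export_records` function, the column names, and the sample records are assumptions made for illustration.

```python
import csv
import io
from datetime import date

FIELDS = ["date", "employee_id", "event", "policy_version"]


def export_records(records, start, end):
    """Return CSV text for records whose `date` falls within [start, end]."""
    selected = [r for r in records if start <= r["date"] <= end]
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    for record in sorted(selected, key=lambda r: r["date"]):
        writer.writerow(record)
    return buffer.getvalue()


# Hypothetical audit trail entries.
records = [
    {"date": date(2025, 3, 2), "employee_id": "E-1001",
     "event": "acknowledged", "policy_version": "1.1"},
    {"date": date(2025, 9, 14), "employee_id": "E-1002",
     "event": "training_completed", "policy_version": "1.2"},
    {"date": date(2026, 1, 5), "employee_id": "E-1001",
     "event": "acknowledged", "policy_version": "1.2"},
]
report = export_records(records, date(2025, 7, 1), date(2026, 6, 30))
print(report)
```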

If you can answer all eight questions with documented evidence, you are well positioned to pass the AI governance portion of an audit. If you cannot, you have gaps that need to be addressed.

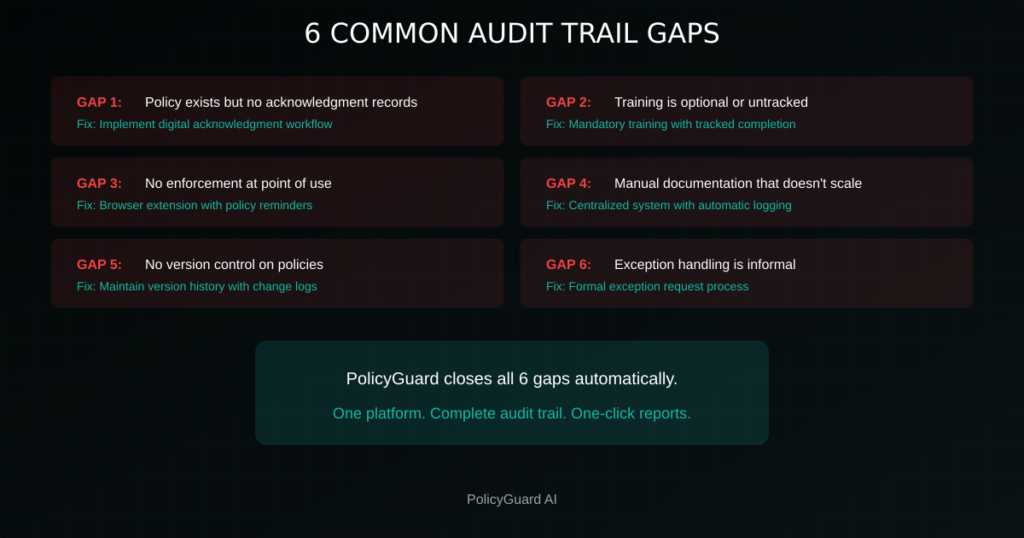

6. Common Audit Trail Gaps (And How to Fix Them)

Most organizations have some elements of an AI audit trail but are missing critical pieces. Here are the most common gaps and how to close them.

Gap 1: Policy exists but no acknowledgment records

The problem: You have a policy document, but no proof employees have seen it. The policy lives in a wiki, SharePoint, or handbook that employees may or may not have read.

How to fix it: Implement a policy acknowledgment workflow. Every employee should digitally acknowledge the policy, creating a timestamped record. Require re-acknowledgment when the policy is updated.
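
The re-acknowledgment rule can be checked mechanically: anyone without an acknowledgment of the current version is pending. A minimal sketch, assuming acknowledgments are available as (employee ID, policy version) pairs; the `needs_reacknowledgment` function and the sample IDs are hypothetical.

```python
def needs_reacknowledgment(employees, acknowledgments, current_version):
    """Return employee IDs with no acknowledgment of the current policy version.

    `acknowledgments` is a list of (employee_id, policy_version) pairs.
    """
    current = {emp for emp, version in acknowledgments
               if version == current_version}
    return sorted(set(employees) - current)


employees = ["E-1001", "E-1002", "E-1003"]
acknowledgments = [("E-1001", "1.1"), ("E-1001", "1.2"), ("E-1002", "1.1")]
pending = needs_reacknowledgment(employees, acknowledgments, current_version="1.2")
print(pending)
```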

Gap 2: Training is optional or untracked

The problem: Training materials exist, but completion is not mandatory or not tracked. You cannot prove who has been trained.

How to fix it: Make AI governance training mandatory with tracked completion. Include an assessment to verify comprehension. Maintain completion records with dates and scores.
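
Tracked completion makes it possible to list who still needs attention. The sketch below separates employees with no completion record from those who completed training but scored below a passing mark. The `training_gaps` function and the passing score of 80 are assumptions, not figures from this guide.

```python
def training_gaps(employees, completions, passing_score=80):
    """Split employees into (untrained, below_passing).

    `completions` maps employee_id to an assessment score from 0 to 100.
    The default passing score is an illustrative assumption.
    """
    untrained = sorted(e for e in employees if e not in completions)
    below = sorted(e for e in employees
                   if e in completions and completions[e] < passing_score)
    return untrained, below


untrained, below = training_gaps(
    ["E-1001", "E-1002", "E-1003"],
    {"E-1001": 95, "E-1002": 60},
)
print(untrained, below)
```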

Gap 3: No enforcement at point of use

The problem: Policy exists, training happened, but nothing reinforces governance when employees actually use AI tools. This is how shadow AI thrives — see Shadow AI: The Hidden Risk in Every Company for more on this challenge. There is a gap between onboarding and daily behavior.

How to fix it: Implement point-of-use enforcement. When an employee opens ChatGPT, Claude, or other AI tools, show a policy reminder and require acknowledgment before proceeding. Log every interaction.

Gap 4: Manual documentation that does not scale

The problem: Audit trail elements exist but are scattered across spreadsheets, email threads, and manual logs. Pulling evidence for an audit takes days or weeks.

How to fix it: Centralize audit trail data in a single system. Use a purpose-built tool that automatically logs acknowledgments, training, and enforcement. Enable one-click report generation.

Gap 5: No version control on policies

The problem: The policy has been updated, but you cannot show what previous versions said or when changes were made. Auditors cannot see the evolution.

How to fix it: Maintain version history with dates, change descriptions, and approval records. When someone acknowledged “version 1.2,” you should be able to show exactly what version 1.2 contained.
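
One way to make "exactly what version 1.2 contained" provable is to store each published text alongside a content hash and refuse to overwrite a published version. This is a sketch of that idea, not a description of any specific tool: the `PolicyVersions` class, its methods, and the sample policy text are hypothetical, and the SHA-256 fingerprint is an added assumption rather than something this guide requires.

```python
import hashlib
from datetime import date


class PolicyVersions:
    """Keeps every published policy text, keyed by version label."""

    def __init__(self):
        self._versions = {}

    def publish(self, version, text, approved_by, effective_on, change_note):
        """Store a new version; published versions are never overwritten."""
        if version in self._versions:
            raise ValueError(f"version {version} is already published")
        self._versions[version] = {
            "text": text,
            "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
            "approved_by": approved_by,
            "effective_on": effective_on,
            "change_note": change_note,
        }

    def text_of(self, version):
        return self._versions[version]["text"]

    def fingerprint(self, version):
        return self._versions[version]["sha256"]


policies = PolicyVersions()
policies.publish("1.1", "Do not paste customer data into AI tools.",
                 "CISO", date(2025, 3, 1), "Initial policy")
policies.publish("1.2", "Do not paste customer or employee data into AI tools.",
                 "CISO", date(2025, 9, 1), "Added employee data")
print(policies.fingerprint("1.2"))
```

Recording the fingerprint on each acknowledgment would tie a person's sign-off to the exact text they saw, even if the version label is later reused by mistake elsewhere.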

Gap 6: Exception handling is informal

The problem: Some employees have exceptions to the policy (approved tools, special use cases), but these are handled via email or verbal approval with no documentation.

How to fix it: Create a formal exception request process. Document every exception with business justification, approver, conditions, and expiration date. Include exceptions in your audit trail.

7. Building an Audit Trail That Scales

Whether your organization has 50 employees or 5,000, the audit trail requirements are the same. The difference is whether your approach can scale without breaking.

Principle 1: Automate capture, not compliance.

The goal is not to automate compliance decisions. It is to automatically capture the evidence that compliance is happening. Policy acknowledgments, training completions, and enforcement logs should generate without manual effort.
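
In code, "automate capture" can mean that the action itself writes the evidence, so nobody has to remember to log it. Below is a minimal sketch using a Python decorator. The `captures_evidence` decorator, the `EVIDENCE_LOG` list, and the `complete_training` stand-in are all hypothetical, and a real system would write to durable storage instead of a module-level list.

```python
import functools
from datetime import datetime, timezone

EVIDENCE_LOG = []  # stand-in for a durable, append-only store


def captures_evidence(event_type):
    """Decorator: record an evidence entry each time the wrapped action succeeds."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(employee_id, *args, **kwargs):
            result = func(employee_id, *args, **kwargs)
            # Only reached if func did not raise, so failures are not logged.
            EVIDENCE_LOG.append({
                "event": event_type,
                "employee_id": employee_id,
                "at": datetime.now(timezone.utc).isoformat(),
            })
            return result
        return wrapper
    return decorator


@captures_evidence("training_completed")
def complete_training(employee_id, module, score):
    """Stand-in for whatever system actually marks training complete."""
    return {"module": module, "score": score}


complete_training("E-1001", "AI governance basics", 92)
print(EVIDENCE_LOG)
```

The decorator captures evidence but makes no compliance decision, which matches the distinction drawn above.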

Principle 2: Centralize everything.

Scattered documentation is effectively no documentation when an auditor asks for it. Every element of your audit trail should be accessible from one system, exportable in one report. For an overview of all the components you need, see The Complete AI Governance Toolkit.

Principle 3: Build for the audit you will face, not the one you have avoided.

Many organizations build minimal documentation until they face an audit, then scramble. Build your audit trail as if the audit is next month, because eventually it will be.

Principle 4: Make it easy on employees.

If governance creates friction, employees route around it. Design acknowledgment and enforcement to take seconds, not minutes. The lower the friction, the higher the compliance rate and the cleaner your audit trail.

Principle 5: Update continuously, not annually.

Policies and training that update once a year are stale within months. AI tools, regulations, and risks evolve constantly. Your audit trail should show continuous maintenance, not annual checkbox exercises.

8. How PolicyGuard Creates Audit-Ready Documentation

PolicyGuard was built specifically to generate the audit trail documentation regulators and auditors require.

Policy acknowledgment at point of use: When employees open AI tools (ChatGPT, Claude, Gemini, Copilot, and 80+ others), they see your policy highlights and must acknowledge before proceeding. Every acknowledgment is logged with employee ID, policy version, and timestamp.

Training with verified completion: Each policy template includes training modules and quizzes. Completion is tracked automatically. You can see who has completed training, when, and their assessment scores.

Enforcement logs: Every time an employee accesses an AI tool and acknowledges the policy, it is logged. You have a complete record of governed AI tool usage across your organization.

One-click audit reports: Need to show an auditor your AI governance evidence? Export a complete compliance report in one click. Reports include policy versions, acknowledgment records, training completion, and enforcement logs for any date range you specify.

Version control: Policy updates are tracked with version history. When an employee acknowledged “version 2.1,” you can show exactly what that version contained.

The result: When an auditor asks “How do you govern AI usage?”, you do not scramble for scattered documentation. You export a report, hand it over, and move on.

Start your free 14-day trial and see how PolicyGuard builds your audit trail from day one.

Frequently Asked Questions

What is an AI audit trail? An AI audit trail is a documented record proving how your organization governs AI tool usage. It includes policies, acknowledgment records, training completion, enforcement logs, and evidence that governance controls are active. It allows you to demonstrate compliance to regulators and auditors, not just claim it.

Why do auditors want AI audit trails? Auditors are trained to verify, not trust. When you claim to have AI governance, they require evidence. An audit trail provides timestamped, documented proof that policies exist, employees acknowledged them, training happened, and enforcement is active.

What regulations require AI audit trails? The EU AI Act, GDPR, SOC 2, ISO 27001, ISO 42001, HIPAA, and other frameworks all require or strongly imply the need for documented AI governance evidence. The specific requirements vary, but all expect you to demonstrate compliance, not just assert it.

What should be in an AI audit trail? A complete audit trail includes: policy documentation with version history, employee acknowledgment records, training completion logs, enforcement evidence, exception documentation, incident records, and review/update records. Each component answers a specific question auditors ask.

How do I build an AI audit trail? You can build an audit trail manually with spreadsheets and document management, but this approach does not scale and is difficult to maintain. Purpose-built tools like PolicyGuard automatically capture acknowledgments, training, and enforcement, generating audit-ready documentation without manual effort.

How often should AI audit trails be updated? Audit trail data should be captured continuously as events happen (acknowledgments, training completions, enforcement actions). Policies should be reviewed at least annually or whenever regulations, tools, or organizational practices change significantly.

Related Resources

- The Complete Guide to AI Policy and Governance for Companies — The pillar guide covering all aspects of AI governance

- AI Acceptable Use Policy Template: A Complete Guide — How to create the foundational policy document

- AI Policy for Employees: What to Include and How to Enforce It — Detailed guidance on employee-facing AI policies

- EU AI Act Compliance: What Companies Need to Do — Regulatory requirements affecting AI governance

- Shadow AI: The Hidden Risk in Every Company — Understanding and governing unapproved AI tool usage

- The Complete AI Governance Toolkit — All the components you need for a complete AI governance program

- AI Policy Generator vs Expert-Curated Templates — Why human-written policies outperform AI-generated ones
