This guide is part of our Complete Guide to AI Policy and Governance for Companies, the central resource for everything you need to know about AI compliance in 2026.

Your employees are using AI tools you do not know about. They are pasting customer data, proprietary code, financial information, and confidential documents into ChatGPT, Claude, Gemini, and dozens of other AI platforms, and they are not telling you about it.

This is shadow AI, and it is happening in your organization right now.

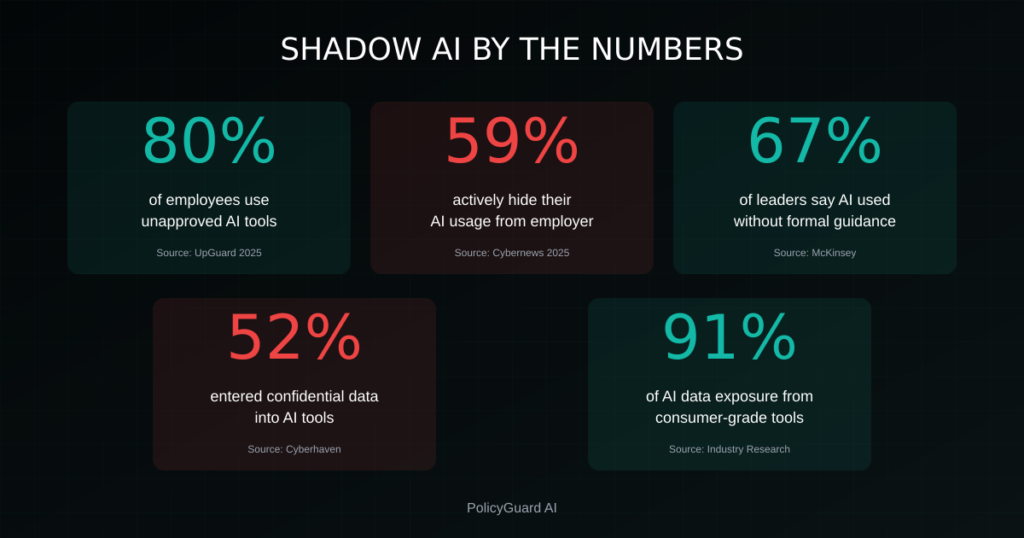

The data is unambiguous: 80% of employees use AI tools their employer has not officially approved, and 59% actively hide their AI usage from their employer. This is not a future risk. It is a present reality with real compliance, security, and legal implications.

This guide explains what shadow AI is, why it is dangerous, how to detect it, and what to do about it before regulators, auditors, or a data breach force your hand.

TABLE OF CONTENTS:

- What Is Shadow AI?

- The Statistics: How Bad Is the Problem?

- Why Employees Hide Their AI Usage

- 7 Real Risks of Shadow AI

- Shadow AI vs Shadow IT: What’s Different

- How to Detect Shadow AI in Your Organization

- The Wrong Response: Banning AI Entirely

- The Right Response: Govern, Don’t Block

- Building a Shadow AI Detection Program

- Getting Started Today

- Frequently Asked Questions

1. What Is Shadow AI?

Shadow AI is the use of artificial intelligence tools by employees without organizational knowledge, approval, or oversight. It is the AI equivalent of shadow IT, where employees adopt cloud services, apps, and software outside of official IT channels.

Shadow AI includes:

Unapproved tools. Employees using AI platforms the company has not vetted, approved, or even heard of. This includes consumer versions of ChatGPT, Claude, Gemini, Perplexity, and hundreds of specialized AI tools for writing, coding, image generation, and data analysis.

Unapproved uses of approved tools. Even if your company has approved certain AI tools, employees may be using them in ways that violate policy, such as entering customer data, proprietary information, or regulated data types.

Hidden usage. Employees deliberately not disclosing that they used AI to complete a task, generate content, write code, or make decisions. The work product appears human-generated but is actually AI-assisted.

Personal account usage. Employees using personal accounts on AI platforms for work tasks, bypassing any enterprise agreements, data protection controls, or audit trails your organization has in place.

The defining characteristic of shadow AI is invisibility. The organization does not know it is happening, cannot measure its scope, cannot assess its risks, and cannot demonstrate governance when auditors ask.

2. The Statistics: How Bad Is the Problem?

The research on shadow AI is consistent and alarming.

80% of employees use AI tools their employer has not approved. This finding from UpGuard’s 2025 research means that in a company of 100 employees, approximately 80 are using AI tools outside of any governance framework. They are making individual decisions about what data to share, which tools to trust, and whether to disclose their usage.

59% of employees actively hide their AI usage. According to Cybernews research, more than half of employees are not just using unapproved AI; they are deliberately concealing it. They know they are operating outside of policy and are choosing not to disclose. This makes detection significantly harder.

67% of business leaders report employees using AI without formal guidance. Leadership is aware that ungoverned AI usage is happening, but most organizations have not implemented the policies, training, or enforcement mechanisms to address it.

52% of employees have entered confidential company information into AI tools. More than half have shared sensitive data with AI platforms. This includes customer information, financial data, strategic plans, proprietary code, and other confidential materials.

91% of employees believe AI makes them more productive. This explains why shadow AI is so pervasive. Employees are not using AI maliciously. They are using it because it works. They get more done, faster, with better quality. The productivity benefits are real, which is why banning AI entirely is not a viable solution.

The math is simple: if these survey figures hold for your workforce and you have 200 employees, approximately 160 are using unapproved AI tools, 118 are hiding it, and 104 have entered confidential data into platforms you have not vetted. This is happening today, whether you see it or not.
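The same arithmetic can be run for any headcount. The following is a minimal Python sketch: the function name `estimate_headcounts` and the dictionary keys are illustrative, and it assumes the survey rates quoted in this section apply uniformly to your workforce, which may not hold in practice.

```python
def estimate_headcounts(employees: int, rates: dict) -> dict:
    """Apply each survey rate to a headcount and round to whole people."""
    return {label: round(employees * rate) for label, rate in rates.items()}


# Survey rates quoted in this section (treated here as rough planning inputs).
SURVEY_RATES = {
    "uses_unapproved_ai": 0.80,
    "hides_ai_usage": 0.59,
    "entered_confidential_data": 0.52,
}

estimates = estimate_headcounts(200, SURVEY_RATES)
for label, count in estimates.items():
    print(f"{label}: about {count} of 200 employees")
```

Treat the results as an order-of-magnitude estimate to motivate an assessment, not as a measurement of your own organization.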

3. Why Employees Hide Their AI Usage

Understanding why employees conceal AI usage is essential to addressing the problem. Employees are not hiding AI usage because they are malicious. They are hiding it because of rational fears and misaligned incentives.

Fear of being seen as less competent. Many employees worry that admitting to AI assistance makes them look like they cannot do their job. They believe that work should appear effortless and entirely human-generated. Using AI feels like cheating, even when it is not.

Uncertainty about what is allowed. Most companies have not published clear AI usage policies. Employees do not know if AI is permitted, which tools are approved, or what data can be shared. In the absence of clarity, they use AI anyway and stay quiet to avoid potential trouble.

Previous negative experiences with IT restrictions. Employees remember when useful tools were banned because IT or compliance said no. They expect the same response to AI and preemptively hide their usage to avoid losing access to tools that make them more productive.

No mechanism for disclosure. Even employees who would disclose AI usage often have no clear way to do so. There is no checkbox, no form, no acknowledgment process. The path of least resistance is silence.

Perceived hypocrisy. Employees see leadership talking about AI transformation while simultaneously failing to provide approved tools or clear guidance. The mixed signals create a culture where unofficial usage is implicitly tolerated.

The insight here is important: Shadow AI is not primarily a technology problem. It is a policy, culture, and communication problem. Employees need clear guidance, approved tools, and a safe way to disclose usage. Without these, hiding is the rational choice.

4. 7 Real Risks of Shadow AI

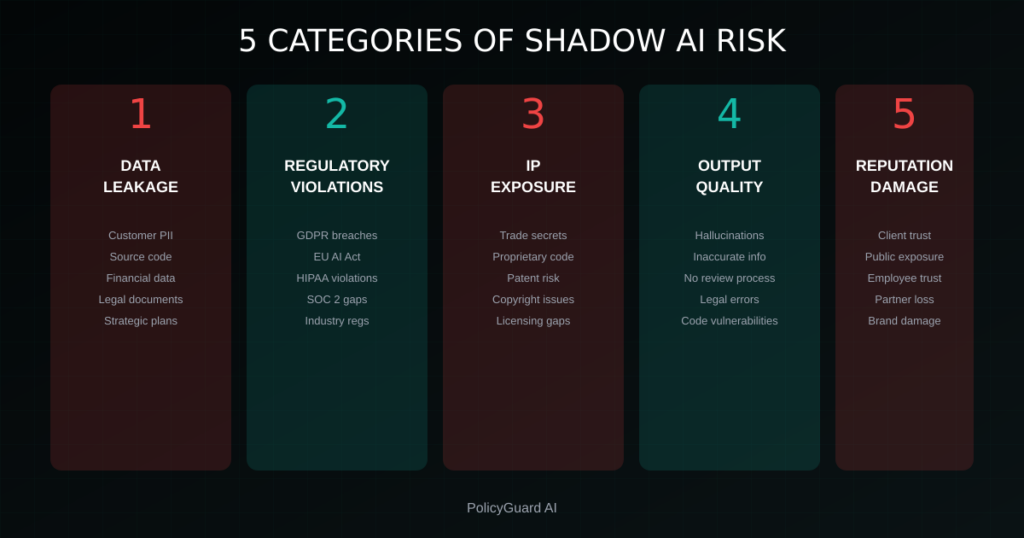

Shadow AI creates concrete risks that affect compliance, security, legal exposure, and business operations.

Risk 1: Data Leakage

When employees paste confidential information into AI tools, that data may be stored, logged, or used for model training depending on the platform’s terms of service. The terms for consumer versions of many AI tools state that user inputs may be used to improve models.

This means customer PII, proprietary code, financial projections, legal documents, and strategic plans could become part of a training dataset accessible to the AI provider and potentially surfaced in outputs to other users.

Real example: Samsung banned employee use of ChatGPT after engineers uploaded proprietary source code and internal meeting notes. The data entered into the consumer platform was outside Samsung’s control.

Risk 2: Regulatory Violations

GDPR, CCPA, HIPAA, and other regulations require organizations to know where personal data is processed, ensure appropriate protections are in place, and maintain records of processing activities.

When employees use unapproved AI tools with personal data, the organization risks violating these requirements. You cannot demonstrate lawful processing if you do not know processing is occurring. You cannot ensure adequate protections if you have not vetted the AI provider.

The EU AI Act adds requirements around AI transparency and human oversight that cannot be met when AI usage is invisible.

Risk 3: Audit Failures

SOC 2, ISO 27001, and other compliance frameworks require organizations to demonstrate control over data processing and technology usage. Auditors ask questions like: “How do you govern AI usage?” and “Can you show me your AI policy enforcement?”

When shadow AI is pervasive, honest answers to these questions reveal control gaps. Audits fail or require significant remediation. Client security reviews surface problems that jeopardize business relationships.

Risk 4: Intellectual Property Exposure

Code, designs, business strategies, and other intellectual property entered into AI tools may lose trade secret protection. Trade secret law requires that information be subject to reasonable measures to maintain secrecy.

If employees routinely share proprietary information with third-party AI platforms, the argument that you took reasonable protective measures becomes difficult to sustain.

Risk 5: Inaccurate or Harmful Outputs

AI tools hallucinate. They generate plausible-sounding but incorrect information. When employees use AI without oversight and pass off outputs as their own work, errors propagate.

A legal brief with hallucinated case citations. A financial model with fabricated data points. Customer communications with incorrect information. Code with subtle security vulnerabilities. All of these have occurred when AI outputs were not properly reviewed.

Risk 6: Contractual Breaches

Many client contracts, NDAs, and vendor agreements include provisions about data handling, confidentiality, and use of subprocessors. Sharing client data with AI platforms may breach these agreements.

If your contract with a client says you will not share their data with third parties without consent, and your employees are pasting client information into ChatGPT, you are likely in breach.

Risk 7: Inconsistent Quality and Standards

When AI usage is hidden, organizations cannot establish consistent standards for when and how AI should be used. One employee uses AI for every customer email. Another refuses to use it at all. A third uses it but manually reviews everything.

This inconsistency affects quality, creates confusion about work standards, and makes it impossible to train employees effectively on AI best practices.

5. Shadow AI vs Shadow IT: What’s Different

Shadow AI is often compared to shadow IT, the use of unauthorized software, cloud services, and applications. The risks are related, but shadow AI has unique characteristics that require specific attention.

Data exposure is more severe. Traditional shadow IT might involve using Dropbox instead of approved file storage. Shadow AI involves actively inputting data into systems that may retain that data or use it for training, depending on the platform’s terms. The exposure is not just access but potential ingestion.

Outputs become part of the organization. Shadow IT is about where data goes. Shadow AI is also about what comes back. AI-generated content, code, analysis, and recommendations become embedded in the organization’s work product, creating ongoing risks around accuracy and intellectual property.

Detection is harder. Shadow IT often involves installing software or accessing cloud services that network monitoring can detect. Shadow AI often happens through web browsers accessing publicly available AI platforms. There is no installation and nothing that stands out from ordinary web traffic unless you are specifically looking for AI platform domains, just a browser tab.

The productivity benefits are higher. Employees use shadow IT for convenience. Employees use shadow AI for transformation. The productivity gains from AI are so significant that employees are highly motivated to use these tools regardless of policy. This makes prohibition ineffective.

The regulatory landscape is specific. The EU AI Act, emerging state AI regulations, and evolving guidance from regulators specifically address AI governance in ways that go beyond general IT security requirements. Shadow AI creates compliance gaps specific to AI regulation.

6. How to Detect Shadow AI in Your Organization

Detecting shadow AI requires a combination of technical controls, policy mechanisms, and cultural approaches.

Technical Detection Methods

Browser extension monitoring. A browser extension that detects when employees visit AI tool domains provides visibility into which tools are being accessed, how often, and by whom. This is the most direct method of shadow AI detection.

PolicyGuard’s browser extension monitors 80+ AI tools and logs access without reading conversation content. When employees visit ChatGPT, Claude, Gemini, or other AI platforms, the access is detected and can trigger a policy acknowledgment.

Network traffic analysis. Review DNS queries and web traffic logs for connections to known AI platform domains. This provides aggregate visibility but does not identify individual users unless combined with user-level logging.

Endpoint detection. Some endpoint security tools can identify when AI applications are installed or AI websites are accessed. Check whether your existing security stack provides this visibility.

Cloud access security brokers (CASBs). If you use a CASB, configure it to detect and report on AI platform access. Many CASBs have added AI tool categories to their monitoring capabilities.
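The network traffic analysis approach above can be sketched as a simple log scan. This is a minimal Python illustration, not a production detector: the three-field log format (`timestamp client domain`), the function names, and the short watchlist are all assumptions made for the example. Your DNS resolver or proxy will have its own log format, and a real watchlist would be much longer and need regular updates.

```python
from collections import Counter
from typing import Optional

# Illustrative watchlist only; a real list would be longer and maintained over time.
AI_DOMAINS = {"chatgpt.com", "chat.openai.com", "claude.ai",
              "gemini.google.com", "perplexity.ai"}


def matches_watchlist(queried: str) -> Optional[str]:
    """Return the watchlist entry that `queried` equals or is a subdomain of."""
    name = queried.lower().rstrip(".")  # DNS logs often include a trailing dot
    for domain in AI_DOMAINS:
        if name == domain or name.endswith("." + domain):
            return domain
    return None


def count_ai_lookups(log_lines) -> Counter:
    """Count lookups per (client, AI domain) from 'timestamp client domain' lines."""
    counts = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip lines that do not fit the assumed format
        _, client, queried = parts
        domain = matches_watchlist(queried)
        if domain:
            counts[(client, domain)] += 1
    return counts


# Made-up sample data in the assumed format.
sample_log = [
    "2026-01-12T09:14:03Z 10.0.4.17 chatgpt.com.",
    "2026-01-12T09:14:09Z 10.0.4.17 cdn.example.com",
    "2026-01-12T09:15:41Z 10.0.4.22 www.perplexity.ai",
    "2026-01-12T09:16:02Z 10.0.4.17 chatgpt.com",
]

counts = count_ai_lookups(sample_log)
for (client, domain), n in counts.most_common():
    print(f"{client} -> {domain}: {n} lookups")
```

Note that client IP addresses only map to individual users if you combine them with user-level logging such as DHCP or VPN records, which is the limitation described above.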

Policy-Based Detection

Disclosure requirements. Require employees to disclose when AI was used to create or assist with work products. This will not catch all shadow AI, but it establishes an expectation and catches employees who are willing to disclose but were not previously asked.

Attestation during onboarding and reviews. Include AI usage questions in onboarding, performance reviews, and compliance attestations. Ask employees to confirm they are using only approved AI tools for work purposes.

Anonymous reporting. Provide a mechanism for employees to report shadow AI they observe without fear of retaliation. Some employees will report if given a safe channel.

Cultural Detection

Just ask. Many organizations have never directly asked employees about their AI usage. Anonymous surveys or open conversations often reveal that AI usage is far more widespread than leadership realized.

Review work products. Look for signs of AI-generated content: unusual phrasing patterns, inconsistent style within documents, or content quality that does not match the employee’s typical output. This is not foolproof but can surface cases for follow-up.

Exit interviews. Departing employees are often more candid about practices that were common but unofficial. Include AI usage questions in exit interviews.

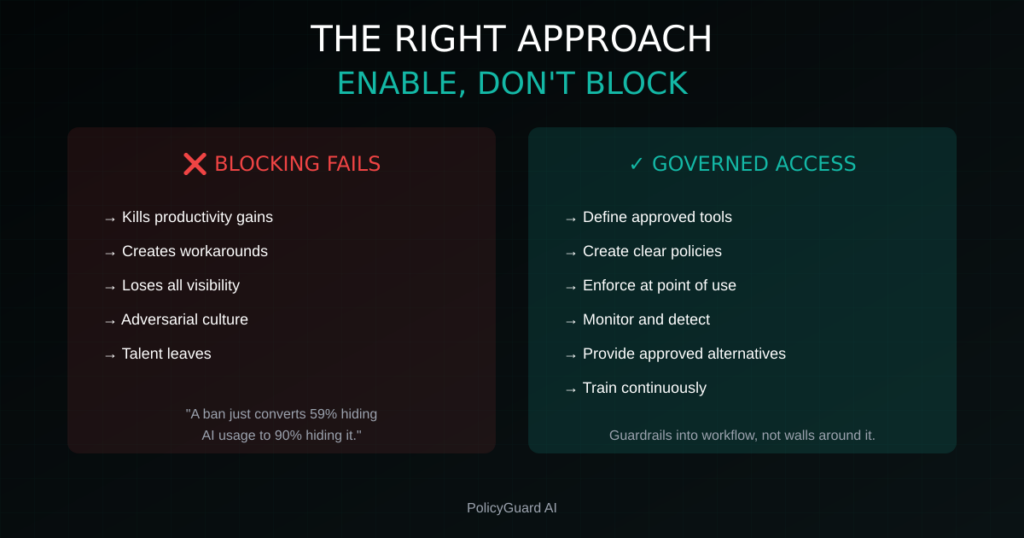

7. The Wrong Response: Banning AI Entirely

When organizations discover the scope of shadow AI, a common instinct is to ban AI tools entirely. Block the domains. Prohibit all usage. Eliminate the risk by eliminating the technology.

This approach fails for several reasons.

Employees will circumvent the ban. Personal phones, home computers, mobile hotspots, personal accounts. Employees who want to use AI will find a way. The ban drives usage further underground, making it even less visible.

You lose the productivity benefits. The 91% of employees who say AI makes them more productive are not wrong. AI assistance with writing, research, coding, and analysis delivers real efficiency gains. Banning AI means your competitors who embrace it will outperform you.

You create a culture of secrecy. Bans that employees view as unreasonable erode trust and encourage a culture where circumventing policy is normalized. This has spillover effects beyond AI usage.

You cannot ban AI embedded in tools you already use. Microsoft 365 Copilot, Notion AI, Grammarly, GitHub Copilot. AI is being embedded into the productivity tools you already rely on. You cannot ban AI without banning the tools themselves.

It signals that leadership is out of touch. Employees know AI is transforming work. A blanket ban signals that leadership does not understand modern tools or trust employees to use them responsibly.

The pattern is consistent: prohibition does not eliminate shadow AI. It just makes it more hidden.

8. The Right Response: Govern, Don’t Block

The effective response to shadow AI is governance: clear policies, approved tools, training, and enforcement that enables productive AI use while managing risks.

Establish Clear Policies

Create an AI acceptable use policy that tells employees exactly what is allowed. Which tools are approved? What data can be shared? When must AI usage be disclosed? What are the consequences for violations?

Ambiguity drives shadow AI. Clarity reduces it.
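One way to test whether a policy is clear enough is to see if its core rules can be written down as data. The sketch below is a hypothetical Python model of the questions above (which tools are approved, what data can be shared, whether disclosure is required). The class name, tool names, and data classification labels are placeholders invented for illustration, not a standard schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUsePolicy:
    approved_tools: frozenset         # tool names employees may use
    shareable_data: frozenset         # data classifications that may be entered
    disclosure_required: bool = True  # must AI-assisted work be disclosed?


def check_use(policy: AIUsePolicy, tool: str, data_class: str) -> tuple:
    """Return (allowed, reason) for one proposed use of an AI tool."""
    if tool not in policy.approved_tools:
        return False, f"{tool} is not an approved tool"
    if data_class not in policy.shareable_data:
        return False, f"{data_class} data may not be entered into AI tools"
    if policy.disclosure_required:
        return True, "allowed; disclose AI assistance in the work product"
    return True, "allowed"


# Hypothetical policy: tool names and classification labels are placeholders.
policy = AIUsePolicy(
    approved_tools=frozenset({"enterprise-assistant"}),
    shareable_data=frozenset({"public", "internal"}),
)

for tool, data_class in [
    ("enterprise-assistant", "internal"),
    ("enterprise-assistant", "customer-pii"),
    ("personal-chatbot", "public"),
]:
    allowed, reason = check_use(policy, tool, data_class)
    print(f"{tool} + {data_class}: {'OK' if allowed else 'BLOCKED'} ({reason})")
```

If a question an employee is likely to ask cannot be answered by rules this simple, the written policy probably needs another pass.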

Related guide: AI Acceptable Use Policy Template: A Complete Guide

Provide Approved Tools

If employees need AI capabilities, give them approved options. Negotiate enterprise agreements with AI providers that include appropriate data protections. Provide access to tools that have been vetted for security and compliance.

Employees use shadow AI because they need AI. Give them a sanctioned alternative.

Train Employees

Explain why AI governance matters. Cover the risks of unapproved usage. Train employees on how to use approved tools appropriately. Make the training practical, not just policy recitation.

Employees who understand the risks make better decisions.

Enforce at the Point of Use

Policies that live in handbooks do not change behavior. Enforcement at the moment employees access AI tools creates awareness and accountability.

A browser extension that presents policy reminders when employees open AI tools and logs acknowledgments creates a governance layer that does not rely on employees remembering to follow policy.
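The decision logic behind a point-of-use reminder can be quite small. The following Python sketch shows one possible rule set, not how any particular product works: the 90-day re-acknowledgment interval, the function names, and the log record fields are all assumptions chosen for illustration.

```python
from datetime import date, timedelta
from typing import Optional

# Assumed re-acknowledgment interval; choose whatever your policy specifies.
REACK_INTERVAL = timedelta(days=90)


def needs_acknowledgment(last_ack: Optional[date],
                         policy_updated: date,
                         today: date) -> bool:
    """Decide whether to show the policy reminder before AI tool access."""
    if last_ack is None:
        return True                     # never acknowledged
    if last_ack < policy_updated:
        return True                     # policy changed since last acknowledgment
    return today - last_ack > REACK_INTERVAL  # acknowledgment has gone stale


def record_acknowledgment(log: list, user: str, tool: str, today: date) -> None:
    """Append an acknowledgment record; no conversation content is stored."""
    log.append({"user": user, "tool": tool, "acknowledged_on": today.isoformat()})


ack_log = []
today = date(2026, 3, 1)
if needs_acknowledgment(None, date(2026, 1, 15), today):
    record_acknowledgment(ack_log, "user-123", "chatgpt.com", today)
print(ack_log)
```

Keeping the record limited to who acknowledged what and when gives auditors evidence of enforcement without capturing what employees typed.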

Create Safe Disclosure Mechanisms

Make it easy for employees to disclose AI usage. Remove the stigma. Celebrate appropriate use. When employees see that disclosure is welcomed rather than punished, hidden usage decreases.

Monitor and Adapt

Shadow AI is not a problem you solve once. New AI tools launch constantly. Employee behavior evolves. Regulations change. Build monitoring into your program and review it regularly.

9. Building a Shadow AI Detection Program

Here is a practical roadmap for implementing shadow AI detection and governance.

Phase 1: Assess (Weeks 1-2)

Survey employees anonymously. Ask about AI tool usage, which tools, how often, for what purposes. The results will likely surprise you.

Review existing controls. What visibility do you currently have into AI tool access? Can your network monitoring, CASB, or endpoint tools provide data?

Identify high-risk departments. Engineering (code), legal (confidential documents), HR (personal data), and customer service (customer information) typically have the highest shadow AI risk.

Phase 2: Policy (Weeks 2-3)

Draft or update your AI policy. Define approved tools, prohibited uses, data classification, and disclosure requirements.

Get leadership buy-in. AI governance needs executive sponsorship. Present the risk data and proposed policy to leadership.

Communicate clearly. Announce the policy with context. Explain why it exists and how it enables rather than restricts AI usage.

Related guide: AI Policy for Employees: What to Include and How to Enforce It

Phase 3: Tools (Weeks 3-4)

Deploy detection capabilities. Implement browser extension monitoring, network analysis, or other technical controls to gain visibility.

Provision approved AI tools. Ensure employees have access to sanctioned AI tools that meet their needs.

Configure enforcement. Set up policy acknowledgment workflows, training assignments, and compliance tracking.

Phase 4: Training (Weeks 4-5)

Roll out AI training. Cover policy requirements, approved tools, data handling rules, and disclosure expectations.

Verify comprehension. Use quizzes or attestations to confirm employees understand the policy.

Make training ongoing. AI governance is not one-and-done. Schedule refresher training and updates when policies change.

Phase 5: Monitor (Ongoing)

Review detection data. Which unapproved tools are employees trying to access? Are new AI platforms emerging?

Track compliance metrics. What percentage of employees have acknowledged policies? Completed training? What is your overall compliance score?

Adapt as needed. Add newly discovered tools to your approved or blocked lists. Update policies based on emerging risks.
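The compliance metrics mentioned in this phase can be computed from a simple roster. The Python sketch below is one hedged way to do it. The `EmployeeStatus` fields and the definition of "fully compliant" (policy acknowledged and training completed) are assumptions for illustration; define your overall compliance score however your program requires.

```python
from dataclasses import dataclass


@dataclass
class EmployeeStatus:
    name: str
    acknowledged_policy: bool
    completed_training: bool


def compliance_metrics(staff: list) -> dict:
    """Return percentages for acknowledgment, training, and both combined."""
    total = len(staff)
    if total == 0:
        return {"acknowledged_pct": 0.0, "trained_pct": 0.0, "fully_compliant_pct": 0.0}
    acked = sum(s.acknowledged_policy for s in staff)
    trained = sum(s.completed_training for s in staff)
    both = sum(s.acknowledged_policy and s.completed_training for s in staff)
    return {
        "acknowledged_pct": round(100 * acked / total, 1),
        "trained_pct": round(100 * trained / total, 1),
        # Assumed definition: acknowledged the policy AND completed training.
        "fully_compliant_pct": round(100 * both / total, 1),
    }


# Made-up roster for demonstration.
staff = [
    EmployeeStatus("A", acknowledged_policy=True, completed_training=True),
    EmployeeStatus("B", acknowledged_policy=True, completed_training=False),
    EmployeeStatus("C", acknowledged_policy=True, completed_training=True),
    EmployeeStatus("D", acknowledged_policy=False, completed_training=False),
]

metrics = compliance_metrics(staff)
print(metrics)
```

Tracking these figures over time, rather than as a single snapshot, makes it easier to show auditors and leadership that the program is improving.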

10. Getting Started Today

Shadow AI is not a theoretical risk. It is happening in your organization right now. The only question is whether you will govern it proactively or discover it during an audit, breach, or regulatory enforcement action.

Step 1: Accept that shadow AI exists in your organization. The statistics are too consistent to assume you are the exception.

Step 2: Gain visibility. You cannot manage what you cannot see. Deploy technical controls that show you which AI tools employees are accessing.

Step 3: Create or update your AI policy. Give employees clear guidance on what is allowed.

Step 4: Enforce at the point of use. A browser extension that presents policy reminders when employees access AI tools transforms a document into an active governance control.

Step 5: Track and report. Build compliance metrics that you can present to leadership, auditors, and clients.

PolicyGuard provides all of these capabilities in a single platform: 80+ AI tool detection, policy acknowledgment enforcement, training with quizzes, shadow AI alerts, and audit-ready reporting.

Start your free 14-day trial: getpolicyguard.com/register

See the platform in action: getpolicyguard.com/demo

Frequently Asked Questions

What is shadow AI? Shadow AI is the use of AI tools by employees without organizational knowledge, approval, or oversight. It includes using unapproved AI platforms, using approved tools in unapproved ways, and hiding AI usage from employers. Research shows 80% of employees use unapproved AI tools and 59% actively conceal their usage.

Why is shadow AI dangerous? Shadow AI creates risks including data leakage to AI platforms, regulatory violations (GDPR, EU AI Act, HIPAA), audit failures, intellectual property exposure, inaccurate AI outputs entering business processes, and contractual breaches with clients. These risks are compounded by invisibility, as organizations cannot manage risks they cannot see.

How do I detect shadow AI? Detection methods include browser extension monitoring that logs AI tool access, network traffic analysis for AI platform domains, endpoint detection tools, policy-based disclosure requirements, anonymous employee surveys, and work product review for signs of AI generation. Technical monitoring combined with cultural approaches provides the most complete visibility.

Should I ban AI tools entirely? No. Banning AI does not reduce shadow AI; it drives usage further underground and makes detection harder. Employees will use personal devices and accounts to circumvent bans. The effective approach is governance: clear policies, approved tools, training, and enforcement that enables productive AI use while managing risks.

How do I reduce shadow AI? Reduce shadow AI by providing clear policies that define what is allowed, provisioning approved AI tools that meet employee needs, training employees on appropriate usage and risks, enforcing policies at the point of AI tool access, creating safe mechanisms for disclosure, and monitoring usage patterns to identify gaps.

What is the difference between shadow AI and shadow IT? Shadow IT is the use of unauthorized software and cloud services. Shadow AI is specifically about AI tools and has unique characteristics: data may be retained or used for training depending on the platform’s terms, AI outputs become embedded in work products, detection is harder because usage happens through standard web browsers, and AI-specific regulations (EU AI Act) create additional compliance requirements.

How common is shadow AI? Extremely common. Research consistently shows 80% of employees use AI tools their employer has not approved, 59% actively hide their usage, 67% of business leaders are aware employees use AI without guidance, and 52% of employees have entered confidential information into AI tools.

What should my AI policy include to address shadow AI? Your policy should include a clear list of approved AI tools, explicit prohibition of unapproved tools, data classification rules specifying what can and cannot be entered into AI tools, disclosure requirements for AI-assisted work, training requirements, and enforcement consequences. PolicyGuard provides 19+ expert-curated templates covering all of these elements.
