This guide is part of our Complete Guide to AI Policy and Governance for Companies, the central resource for everything you need to know about AI compliance in 2026.

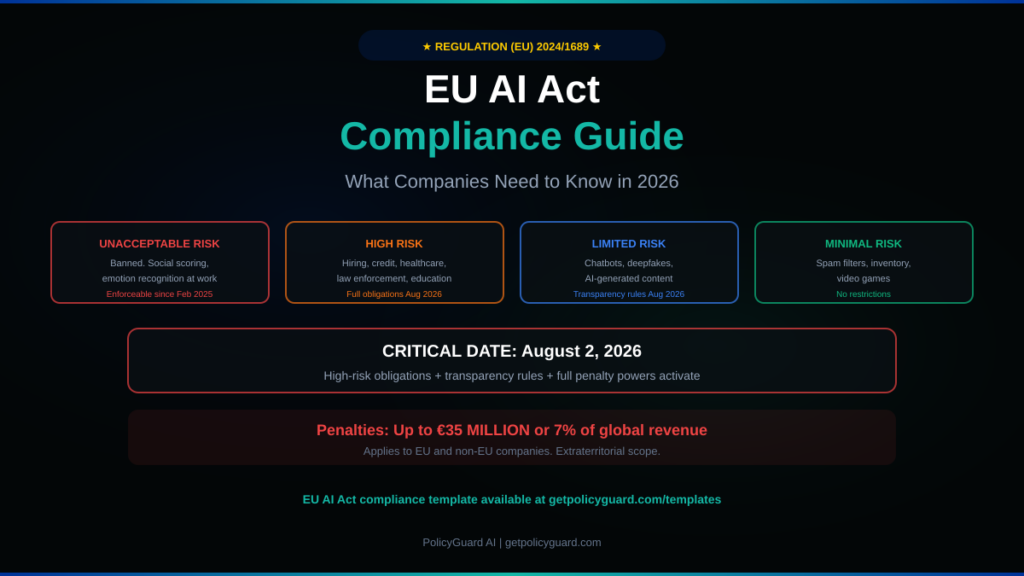

The EU AI Act is the world’s first comprehensive legal framework for regulating artificial intelligence. It entered into force on August 1, 2024, and applies in phases. The first prohibitions took effect on February 2, 2025. The most consequential enforcement date, August 2, 2026, is months away.

On that date, obligations for high-risk AI systems, full transparency requirements, and penalty enforcement powers all activate simultaneously. Fines reach up to €35 million or 7% of total worldwide annual turnover, whichever is higher. For context, 7% of 2024 reported revenue would be roughly $11.5 billion for Meta, $24.5 billion for Alphabet (Google), and $17 billion for Microsoft.

This is not theoretical. Finland designated its first national AI Act enforcer effective January 1, 2026. Other member states are following. Enforcement infrastructure is being built right now.

This guide breaks down what the EU AI Act requires, who it applies to, the exact timeline, the penalty structure, and precisely what your company needs to do to comply — whether you are based in the EU or not.

Who Does the EU AI Act Apply To?

The EU AI Act applies to any organization that develops, deploys, or uses AI systems that affect people within the European Union. This includes companies headquartered outside the EU, mirroring GDPR’s extraterritorial reach.

Specifically, the Act applies to three categories of organizations.

Providers are organizations that develop AI systems or have them developed, then place them on the EU market under their own name or trademark. If you build AI tools and sell or offer them to EU customers, you are a provider.

Deployers are organizations that use AI systems in a professional capacity within the EU. If your company uses ChatGPT, Copilot, Claude, or any AI tool to serve EU customers or make decisions affecting EU residents, you are a deployer.

Importers and distributors are organizations that bring AI systems into the EU market or make them available within the EU.

The critical point: if your company uses AI tools and serves EU customers, you have obligations under the EU AI Act regardless of where your company is headquartered. A US company with EU clients, a Nigerian company processing EU data, a Singapore company with EU operations — all are within scope.

The Four Risk Tiers

The EU AI Act classifies AI systems into four risk categories, with obligations scaling to match each tier.

Tier 1: Unacceptable Risk (Banned). These AI practices are prohibited entirely. They include social scoring systems that rank people based on personal characteristics, AI that uses subliminal or manipulative techniques to distort behavior, emotion recognition in workplaces and educational settings, untargeted scraping of facial images to build recognition databases, and real-time biometric identification in public spaces (with limited law enforcement exceptions). These prohibitions have been enforceable since February 2, 2025. Violation carries the maximum penalty: €35 million or 7% of global revenue.

Tier 2: High Risk (Strict Obligations). AI systems used in sensitive areas face comprehensive compliance requirements. These areas include biometric identification, critical infrastructure management, education and vocational training (admissions, assessment), employment (recruitment, hiring, performance evaluation, termination), access to essential services (credit scoring, insurance, social benefits), law enforcement (crime prediction, evidence analysis), migration and border control, and administration of justice and democratic processes. High-risk obligations activate on August 2, 2026. These include quality management systems, risk management frameworks, technical documentation, conformity assessments, EU database registration, human oversight provisions, and ongoing monitoring.

Tier 3: Limited Risk (Transparency Requirements). AI systems that interact with people or generate content must meet transparency obligations. Chatbots must disclose their artificial nature to users. Emotion recognition systems must notify users. AI-generated content (deepfakes, synthetic text, synthetic images) must be clearly labeled and carry machine-readable watermarks. Biometric categorization systems must disclose their use. These transparency obligations become enforceable on August 2, 2026.

Tier 4: Minimal Risk (No Restrictions). AI systems that pose minimal risk, such as spam filters, inventory management, or video game AI, face no specific obligations. Organizations can use these freely without compliance requirements.


The Enforcement Timeline

Understanding the phased timeline is critical for prioritizing your compliance efforts.

February 2, 2025 (ALREADY IN EFFECT). Prohibited AI practices banned. AI literacy obligations began. Organizations using any banned AI practices face immediate penalties of up to €35 million or 7% of global revenue.

August 2, 2025 (ALREADY IN EFFECT). Governance provisions activated. General-purpose AI (GPAI) model obligations took effect. The AI Office became fully operational. Member states designated national competent authorities. Penalty rules at the member state level were established.

August 2, 2026 (UPCOMING — THE CRITICAL DATE). This is the most consequential enforcement date. High-risk AI system obligations under Annex III take full effect. Transparency obligations under Article 50 become enforceable. National AI regulatory sandboxes must be operational. Full penalty enforcement powers activate. This is the date your company must be prepared for.

August 2, 2027. Remaining provisions apply. High-risk AI systems embedded in regulated products (medical devices, vehicles, aviation) must comply. GPAI models placed on the market before August 2025 must reach full compliance.

August 2, 2030. Legacy public sector AI systems placed on the market before the Act must comply.

The Digital Omnibus proposal from November 2025 could extend some high-risk deadlines to December 2027. However, the European Commission has rejected industry calls for blanket delays. Prudent compliance planning treats August 2, 2026 as the binding deadline.
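The phased dates above lend themselves to a simple lookup. The sketch below is illustrative only: the `MILESTONES` table restates the dates listed in this section, and `in_effect` and `next_milestone` are hypothetical helper names, not part of any official tooling. It assumes the current timeline and does not model any change the Digital Omnibus might bring.

```python
from datetime import date
from typing import Optional

# Dates and labels restate the timeline in this guide (assumed unchanged).
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices banned; AI literacy obligations"),
    (date(2025, 8, 2), "Governance provisions; GPAI model obligations"),
    (date(2026, 8, 2), "Annex III high-risk obligations; Article 50 transparency"),
    (date(2027, 8, 2), "High-risk AI in regulated products; pre-August-2025 GPAI models"),
    (date(2030, 8, 2), "Legacy public sector AI systems"),
]


def in_effect(today: date) -> list[str]:
    """Return the labels of every milestone that applies on or before `today`."""
    return [label for when, label in MILESTONES if when <= today]


def next_milestone(today: date) -> Optional[tuple[date, str]]:
    """Return the first milestone strictly after `today`, or None if none remain."""
    for when, label in MILESTONES:
        if when > today:
            return when, label
    return None


if __name__ == "__main__":
    today = date.today()
    for label in in_effect(today):
        print("In effect:", label)
    upcoming = next_milestone(today)
    if upcoming is not None:
        when, label = upcoming
        print(f"Next: {when.isoformat()} ({(when - today).days} days): {label}")
```

Keeping the dates in one table means a deadline change requires editing a single line rather than hunting through planning documents.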

Alt text: EU AI Act penalty structure showing three tiers of fines from 7.5 million to 35 million euros based on violation type

The Penalty Structure

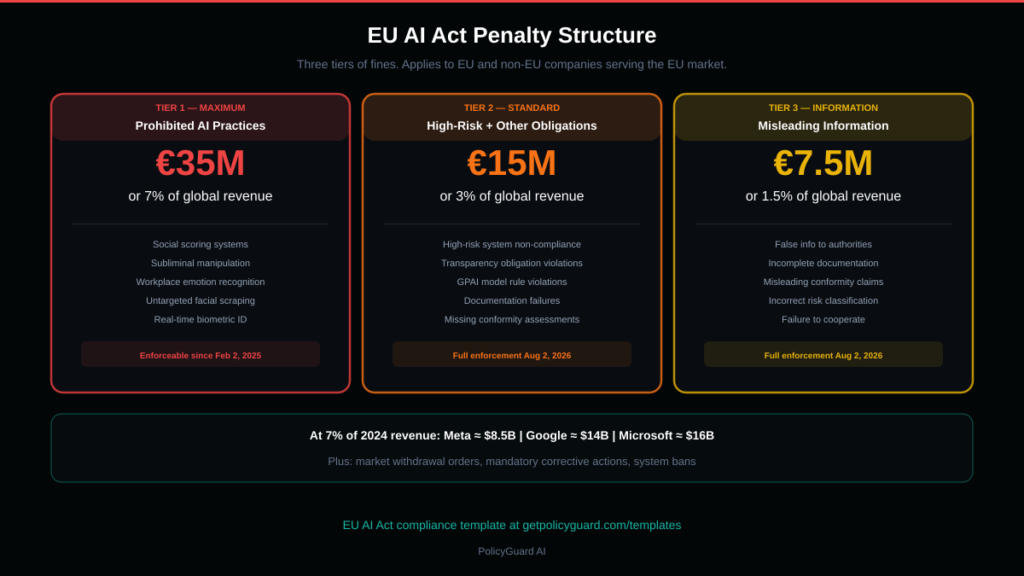

The EU AI Act’s penalties are designed to deter even the largest technology companies. Three tiers of fines exist.

Tier 1: Prohibited practices. Up to €35 million or 7% of total worldwide annual turnover, whichever is higher. This applies to violations of Article 5 (banned AI practices such as social scoring, subliminal manipulation, and emotion recognition in workplaces).

Tier 2: High-risk and other obligations. Up to €15 million or 3% of total worldwide annual turnover, whichever is higher. This covers non-compliance with high-risk AI system requirements, transparency obligations, and general-purpose AI model rules.

Tier 3: Incorrect or misleading information. Up to €7.5 million or 1% of total worldwide annual turnover, whichever is higher. This applies to supplying incorrect, incomplete, or misleading information to notified bodies or national competent authorities.

For SMEs and startups, each cap is the lower of the fixed amount and the percentage rather than the higher (Article 99(6)). Member states set the detailed penalty rules at the national level.
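The "whichever is higher" rule is easy to misread, so a short worked calculation may help. This is a minimal sketch: `fine_cap` is a hypothetical helper, the turnover figures are invented for illustration, and the SME branch assumes Article 99(6), under which the lower of the two figures applies to SMEs and startups. It computes a ceiling only; actual fines are set case by case by the authorities.

```python
def fine_cap(fixed_eur: int, pct_basis_points: int, turnover_eur: int,
             is_sme: bool = False) -> int:
    """Return the maximum fine in euros for one penalty tier.

    pct_basis_points is the turnover percentage in basis points (700 = 7%).
    """
    share = turnover_eur * pct_basis_points // 10_000
    # Larger undertakings: whichever is higher.
    # SMEs and startups (assumed per Article 99(6)): whichever is lower.
    return min(fixed_eur, share) if is_sme else max(fixed_eur, share)


# Hypothetical turnover figures, chosen only to exercise both branches.
print(fine_cap(35_000_000, 700, 2_000_000_000))            # 140000000
print(fine_cap(15_000_000, 300, 2_000_000_000))            # 60000000
print(fine_cap(35_000_000, 700, 100_000_000))              # 35000000
print(fine_cap(35_000_000, 700, 10_000_000, is_sme=True))  # 700000
```

The third call shows why the fixed amount matters: for a company with €100 million in turnover, 7% is only €7 million, so the €35 million figure sets the ceiling.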

Beyond financial penalties, market surveillance authorities can order non-compliant AI systems withdrawn from the EU market, mandate corrective actions including model retraining, and prohibit the placement of new systems until compliance is demonstrated. A market withdrawal order can be more damaging than a fine for companies that depend on EU revenue.

What Most Companies Actually Need to Do

The EU AI Act is comprehensive, but the practical obligations for most companies fall into five areas. Here is what you need to do, in order of priority.

1. Conduct an AI System Inventory

Before you can comply, you need to know which systems fall within scope. Catalogue every AI system your organization provides, deploys, or uses. This includes commercial AI tools employees access (ChatGPT, Copilot, Claude, Gemini, Midjourney), AI features embedded in software you already use (CRM scoring, HR screening tools, automated customer service), AI systems you have built internally, and third-party AI tools integrated into your products or services.

For each system, document what it does, what data it processes, who it affects, and which risk tier it falls under. This inventory is the foundation of everything else.
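One way to keep the inventory consistent is to give every entry the same shape. The sketch below is an assumption about how such a record might look, not a format the Act prescribes: the class name `AISystemRecord`, its field names, and the `unclassified` helper are all hypothetical, and the two sample systems are invented.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class AISystemRecord:
    """One inventory entry: what the system does, its data, who it affects."""
    name: str
    purpose: str
    data_processed: list[str]
    affected_groups: list[str]
    risk_tier: Optional[RiskTier] = None  # None until the system is classified


def unclassified(inventory: list[AISystemRecord]) -> list[str]:
    """Return the names of systems that still need a risk tier."""
    return [record.name for record in inventory if record.risk_tier is None]


# Invented sample entries for illustration.
inventory = [
    AISystemRecord("HR screening tool", "Rank job applicants",
                   ["CVs"], ["job applicants"], RiskTier.HIGH),
    AISystemRecord("Drafting assistant", "Draft internal emails",
                   ["internal text"], ["employees"]),
]
print(unclassified(inventory))  # ['Drafting assistant']
```

Leaving `risk_tier` empty by default makes unfinished classification work visible instead of hiding it behind a guessed value.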

2. Classify Risk Levels

Map each AI system in your inventory to the four-tier risk classification. Most commercial AI tools used by employees (ChatGPT for drafting, Copilot for coding, Grammarly for writing) fall under limited risk or minimal risk. AI tools used in hiring, credit decisions, performance evaluation, or customer scoring are likely high-risk. Any system matching the prohibited list must be discontinued immediately.

The classification determines your obligations. Get this wrong and you either over-invest in compliance for low-risk systems or under-invest for high-risk ones.
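A first-pass triage can be automated before legal review. The sketch below is a simplification under stated assumptions: the use-case tags are hypothetical labels loosely based on the examples in this guide, the `triage` function is not an official classification method, and anything it cannot place is flagged for human review rather than assumed safe.

```python
# Hypothetical use-case tags; extend these sets to match your own inventory.
PROHIBITED_USES = {"social_scoring", "workplace_emotion_recognition",
                   "untargeted_facial_scraping"}
HIGH_RISK_USES = {"hiring", "credit_scoring", "performance_evaluation",
                  "customer_scoring"}
TRANSPARENCY_USES = {"chatbot", "content_generation"}
MINIMAL_USES = {"spam_filter", "inventory_management", "video_game_ai"}


def triage(use_cases: set[str]) -> str:
    """Return the strictest tier matched by any tag, or 'needs_review'."""
    if use_cases & PROHIBITED_USES:
        return "unacceptable"
    if use_cases & HIGH_RISK_USES:
        return "high"
    if use_cases & TRANSPARENCY_USES:
        return "limited"
    if use_cases and use_cases <= MINIMAL_USES:
        return "minimal"
    # Unknown or empty tags are never silently treated as minimal risk.
    return "needs_review"


print(triage({"chatbot", "hiring"}))  # high
print(triage({"spam_filter"}))        # minimal
print(triage({"route_planning"}))     # needs_review
```

Checking the strictest tier first means a tool with several uses is classified by its riskiest one, which matches how the obligations attach in practice.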

3. Implement AI Governance Documentation

Documentation is the most underestimated compliance burden. The EU AI Act requires more extensive documentation than most organizations maintain for traditional software. For high-risk systems, you need technical documentation describing how the system works, risk assessments covering potential impacts, data governance documentation, testing and validation records, quality management system documentation, human oversight provisions, and ongoing monitoring procedures.

For all AI systems, you need an AI usage policy that governs how employees interact with AI tools. This policy should cover approved tools, prohibited uses, data classification, disclosure requirements, and incident reporting. This is where PolicyGuard’s 28+ expert-curated templates are directly relevant — our EU AI Act template is specifically designed to address the Act’s documentation requirements.

4. Ensure AI Literacy Across Your Organization

Article 4 of the EU AI Act requires that all providers and deployers ensure their staff have a sufficient level of AI literacy. This has been enforceable since February 2, 2025. AI literacy is not optional. It is a legal requirement.

Practically, this means your employees need to understand what AI tools they are using, the risks those tools present, the rules governing their usage, and how to comply with your AI policy. Training must be documented. Comprehension should be verified. PolicyGuard includes training modules and scored quizzes that create the documentation trail needed to demonstrate AI literacy compliance.

5. Build Your Compliance Evidence Trail

The EU AI Act is not about having a policy. It is about proving compliance. When a regulator asks for evidence that your organization governs AI usage appropriately, you need to produce policy documents with version history, proof that employees received and acknowledged the policy, training completion records with comprehension verification, an inventory of AI systems with risk classifications, incident reports and corrective actions, and ongoing monitoring data.

This evidence trail must be comprehensive, organized, and exportable. If compiling this evidence takes your team days or weeks, your governance program has a tooling problem.

PolicyGuard consolidates all of this — policy acknowledgment timestamps, training records, quiz scores, AI tool access logs, and compliance dashboards — into a single platform with one-click audit reporting. This is not a nice-to-have for EU AI Act compliance. It is the difference between “we have governance” and “we can prove governance.”
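The six evidence categories listed in this section can be checked mechanically. The sketch below is a generic illustration and not PolicyGuard's API: the category keys, `evidence_gaps`, and `export_bundle` are hypothetical names, and the sample records are invented. It flags missing categories and serializes whatever evidence exists into a single JSON document.

```python
import json
from datetime import datetime, timezone

# Hypothetical keys, one per evidence category named in this section.
REQUIRED_EVIDENCE = [
    "policy_versions",
    "acknowledgments",
    "training_records",
    "ai_inventory",
    "incident_reports",
    "monitoring_data",
]


def evidence_gaps(bundle: dict) -> list[str]:
    """Return every required category that is missing or empty."""
    return [key for key in REQUIRED_EVIDENCE if not bundle.get(key)]


def export_bundle(bundle: dict, generated_at: str) -> str:
    """Serialize the evidence and its gaps as one JSON document."""
    report = {
        "generated_at": generated_at,
        "gaps": evidence_gaps(bundle),
        "evidence": bundle,
    }
    return json.dumps(report, indent=2, sort_keys=True)


# Invented sample data: two categories present, four missing.
sample = {
    "policy_versions": [{"version": "1.0", "published": "2026-01-15"}],
    "training_records": [{"employee": "E-001", "quiz_score": 90}],
}
print(evidence_gaps(sample))
print(export_bundle(sample, datetime.now(timezone.utc).isoformat()))
```

Running the gap check on a schedule, rather than only when a regulator asks, keeps missing evidence from becoming a surprise.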

Common Misconceptions

“We are not in the EU, so this does not apply to us.” If your AI systems affect people in the EU — if you have EU customers, process EU data, or offer services in the EU — you are within scope. The EU AI Act has extraterritorial reach, just like GDPR.

“We just use ChatGPT, we do not build AI.” Deployers have obligations too. Using AI tools in a professional capacity triggers transparency requirements and, depending on the use case, potentially high-risk obligations. If you use AI for hiring decisions, credit scoring, or performance evaluation, you may be operating a high-risk AI system even if you did not build it.

“The Digital Omnibus will delay everything.” The European Commission has rejected blanket delays. Even if specific high-risk deadlines shift, the prohibited practices, AI literacy requirements, and governance provisions are already enforceable. Counting on delays is not a compliance strategy.

“Our AI vendor handles compliance.” Vendors handle their obligations as providers. Your obligations as a deployer are separate. You need your own AI usage policy, your own training program, your own documentation, and your own evidence trail. Vendor compliance does not equal your compliance.

“We will start when enforcement actually begins.” Prohibited AI practices have been enforceable since February 2025. AI literacy has been required since February 2025. Finland has already designated its national enforcer. Enforcement is not upcoming. It is happening.

Alt text: EU AI Act compliance checklist for companies showing 8 essential steps from AI inventory to ongoing monitoring

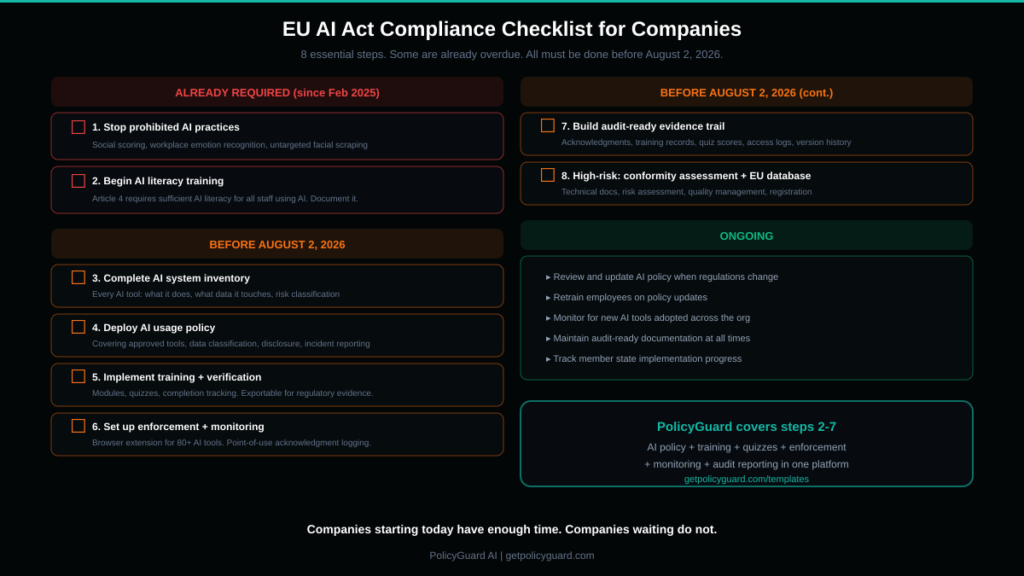

Your EU AI Act Compliance Checklist

Use this checklist to assess your current compliance posture and identify gaps.

Immediate (should already be done):

- Discontinued any prohibited AI practices (social scoring, workplace emotion recognition, untargeted facial scraping)

- Initiated AI literacy training for all staff involved in AI use

- Identified all AI systems in use across the organization

Before August 2, 2026:

- Completed full AI system inventory with risk classifications

- Implemented AI usage policy covering all employee AI tool usage

- Deployed training and comprehension verification for all employees

- Established incident reporting procedures for AI-related issues

- Created technical documentation for any high-risk AI systems

- Completed conformity assessments for high-risk systems

- Registered high-risk systems in the EU database

- Implemented ongoing monitoring and logging for AI tool usage

- Built an exportable compliance evidence trail (audit-ready reporting)

- Appointed internal responsibility for AI governance oversight

Ongoing:

- Review and update AI policy when regulations or tools change

- Retrain employees on policy updates

- Monitor for new AI tools being adopted across the organization

- Maintain audit-ready documentation at all times

- Track regulatory developments and EU member state implementation

How PolicyGuard Helps With EU AI Act Compliance

PolicyGuard directly addresses the practical compliance requirements that most companies face under the EU AI Act.

AI usage policy with an EU AI Act-specific template covering Article 4 (AI literacy), Article 50 (transparency), data handling provisions, and incident reporting aligned with the Act’s requirements. Written by compliance professionals, not AI. Available at getpolicyguard.com/templates.

AI literacy training with modules and scored quizzes that satisfy Article 4’s requirement for staff AI literacy. Completion records and quiz scores are timestamped and exportable for regulatory evidence.

AI tool monitoring across 80+ tools via browser extension, providing the AI system inventory and usage visibility the Act requires. You cannot govern what you cannot see.

Policy enforcement at the point of use, creating continuous compliance evidence. Every time an employee accesses an AI tool, they acknowledge the policy. Every acknowledgment is logged.

Audit-ready reporting with one-click export. When a national competent authority requests evidence of your AI governance, you produce a comprehensive report in under 60 seconds.

The August 2, 2026 deadline is months away. Companies starting today have enough time to implement a complete governance program. Companies waiting do not.

Start your free 14-day trial at getpolicyguard.com/register. Browse all templates at getpolicyguard.com/templates.

Frequently Asked Questions

When does the EU AI Act take full effect? The EU AI Act is being enforced in phases. Prohibited AI practices have been enforceable since February 2, 2025. AI literacy requirements also took effect on that date. The most significant enforcement milestone is August 2, 2026, when high-risk AI system obligations, transparency requirements, and full penalty enforcement powers all activate. Some provisions for AI embedded in regulated products extend to August 2, 2027.

What are the penalties under the EU AI Act? Penalties have three tiers. Violations of prohibited AI practices carry fines up to €35 million or 7% of global annual revenue. Non-compliance with high-risk and other obligations carries fines up to €15 million or 3% of global revenue. Providing incorrect or misleading information carries fines up to €7.5 million or 1% of global revenue. In each tier the higher figure applies, except for SMEs and startups, where the lower one does. Beyond fines, authorities can order AI systems withdrawn from the EU market.

Does the EU AI Act apply to companies outside the EU? Yes. The EU AI Act has extraterritorial scope. Any organization that develops, deploys, or uses AI systems that affect people within the EU must comply, regardless of where the company is headquartered. This mirrors GDPR’s extraterritorial reach and means US, UK, Asian, and African companies with EU customers or operations are within scope.

What is AI literacy under the EU AI Act? Article 4 requires that all providers and deployers ensure their staff have a sufficient level of AI literacy, taking into account their technical knowledge, experience, education, and the context in which AI systems are used. This means employees using AI tools must understand what the tools do, the risks they present, and the rules governing their use. Training must be documented.

How do I know if my AI system is high-risk? High-risk AI systems are defined in Annex III of the Act. They include AI used for biometric identification, critical infrastructure, education and training, employment and worker management, access to essential services (credit, insurance, social benefits), law enforcement, migration and border control, and justice and democratic processes. If your company uses AI in any of these areas, those specific systems are likely high-risk and require comprehensive compliance.

What documentation does the EU AI Act require? For high-risk AI systems: technical documentation, risk assessments, data governance records, testing and validation records, quality management system documentation, conformity assessments, human oversight provisions, and ongoing monitoring records. For all organizations using AI: an AI usage policy, training records, and compliance evidence. The documentation burden is the most underestimated aspect of EU AI Act compliance.
