This guide is part of our Complete Guide to AI Policy and Governance for Companies, the central resource for everything you need to know about AI compliance in 2026.

An AI compliance framework is the structure that turns good intentions into documented, provable, defensible governance.

Without a framework, AI governance is ad hoc. Policies exist in isolation. Training happens inconsistently. Enforcement depends on individual managers. When an auditor or regulator asks for evidence, you scramble to compile it from scattered sources.

With a framework, every component connects. Policies feed into training. Training enables enforcement. Enforcement generates audit trails. When someone asks “How do you govern AI?”, you have a system that produces the answer.

This guide walks you through building an AI compliance framework from scratch. Whether you are starting with nothing or formalizing existing practices, you will have a complete, operational framework by the end.

TABLE OF CONTENTS:

- What Is an AI Compliance Framework?

- Why You Need a Framework (Not Just Policies)

- The 6 Pillars of an AI Compliance Framework

- Pillar 1: Governance Structure

- Pillar 2: Policy Foundation

- Pillar 3: Risk Assessment

- Pillar 4: Training and Awareness

- Pillar 5: Enforcement and Controls

- Pillar 6: Monitoring and Audit

- Implementation Roadmap: 90 Days to Compliance

- Common Framework Mistakes to Avoid

- How PolicyGuard Accelerates Framework Implementation

- Frequently Asked Questions

1. What Is an AI Compliance Framework?

An AI compliance framework is a structured system of policies, procedures, controls, and documentation that governs how your organization uses artificial intelligence.

It answers four fundamental questions:

What are the rules? Policies that define acceptable AI use, data handling requirements, prohibited activities, and compliance obligations.

Who is responsible? Governance structure that assigns ownership, accountability, and decision-making authority for AI-related matters.

How do we enforce the rules? Controls that ensure policies are followed in practice, not just documented in theory.

How do we prove compliance? Audit trails, documentation, and reporting that demonstrate governance to regulators, auditors, and stakeholders.

A framework is more than a collection of documents. It is an operating system for AI governance that connects every component into a functioning whole.

Think of it this way: a policy tells employees what to do. A framework ensures they actually do it and creates evidence that they did.

2. Why You Need a Framework (Not Just Policies)

Most organizations have AI policies. Few have AI compliance frameworks. The difference matters.

Policies Without a Framework

- Policy exists in a handbook or wiki

- Employees may or may not have read it

- No verification that employees understood it

- No enforcement at point of AI use

- No audit trail of compliance

- When auditors ask for evidence, you search through emails and hope

Policies Within a Framework

- Policy is actively communicated and acknowledged

- Training verifies employee understanding

- Controls enforce policy at point of AI use

- Every interaction generates audit data

- When auditors ask for evidence, you generate a report in one click

The gap between these two scenarios is the difference between compliance theater and actual compliance.

Regulatory reality:

The EU AI Act, GDPR, HIPAA, SOC 2, and similar regulations and standards do not just require policies. They require demonstrable compliance. Article 5(2) of the GDPR, the accountability principle, states that controllers are responsible for, and must be able to demonstrate, compliance with the data protection principles.

When a regulator investigates, “we have a policy” is not a defense. “Here is our framework, and here is the evidence it generates” is a defense.

Business reality:

Enterprise customers increasingly ask about AI governance in vendor assessments. “Do you have an AI policy?” is followed by “How do you enforce it?” and “Can you demonstrate compliance?”

A framework gives you answers. Policies alone give you awkward pauses.

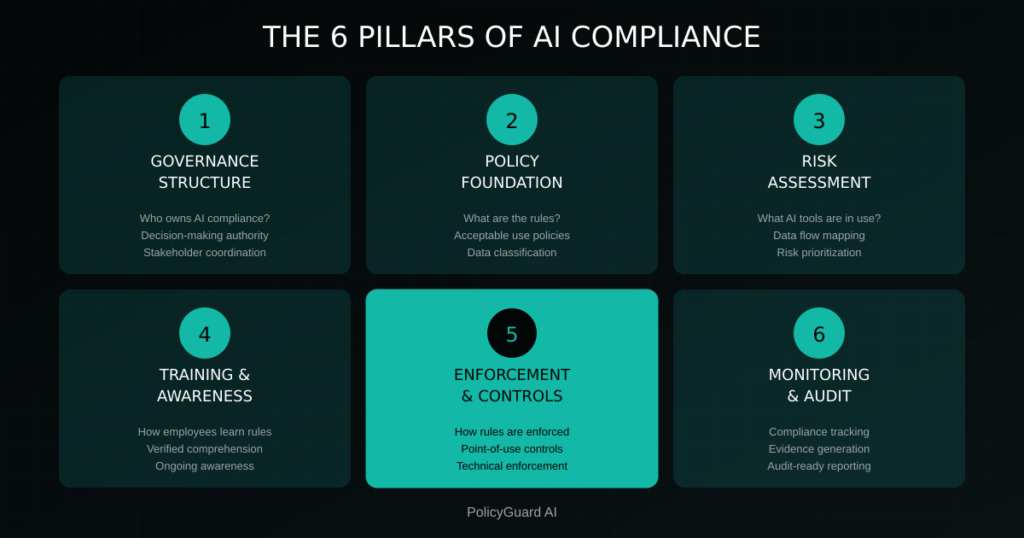

3. The 6 Pillars of an AI Compliance Framework

A complete AI compliance framework has six pillars. Each pillar addresses a specific aspect of governance, and all six work together.

Pillar 1: Governance Structure. Who owns AI compliance? What is the decision-making structure? How do stakeholders coordinate?

Pillar 2: Policy Foundation. What are the rules? What is acceptable use? What is prohibited? What are the consequences?

Pillar 3: Risk Assessment. What AI tools are in use? What data flows through them? What are the risks? How are they prioritized?

Pillar 4: Training and Awareness. How do employees learn the rules? How do you verify understanding? How do you maintain awareness?

Pillar 5: Enforcement and Controls. How do you ensure policies are followed? What technical and procedural controls exist? What happens at the point of AI use?

Pillar 6: Monitoring and Audit. How do you track compliance? What evidence is generated? How do you report to stakeholders?

Missing any pillar creates a gap. A framework with strong policies but no enforcement is theater. A framework with enforcement but no monitoring cannot prove compliance. All six pillars must be present and connected.

4. Pillar 1: Governance Structure

Before you write policies or implement controls, you need to establish who owns AI compliance and how decisions get made.

Define Ownership

AI compliance needs a clear owner. Without ownership, governance becomes everyone’s responsibility and therefore no one’s responsibility.

Executive Sponsor: A C-level executive accountable for AI governance outcomes. This is typically the CISO, Chief Compliance Officer, Chief Risk Officer, or General Counsel. The executive sponsor:

- Has authority to mandate compliance across departments

- Allocates budget and resources

- Reports AI governance status to the board

- Is accountable for regulatory compliance

AI Governance Lead: A designated individual responsible for day-to-day framework operations. This may be a dedicated role or an addition to existing GRC responsibilities. The AI governance lead:

- Develops and maintains policies

- Coordinates across departments

- Manages training programs

- Oversees enforcement mechanisms

- Prepares audit documentation

Departmental Owners: Individuals in each business unit responsible for AI compliance within their teams. Departmental owners:

- Ensure team members complete required training

- Report AI usage and concerns upward

- Enforce policy compliance within their scope

- Participate in risk assessments

Establish a Governance Committee

For organizations with significant AI usage, a cross-functional AI governance committee provides coordination and oversight.

Committee composition:

- AI Governance Lead (chair)

- IT/Security representative

- Legal representative

- HR representative

- Compliance representative

- Business unit representatives (as needed)

Committee responsibilities:

- Review and approve AI policies

- Assess new AI tools and use cases

- Address cross-departmental issues

- Review compliance metrics and incidents

- Update framework based on evolving risks and regulations

Meeting cadence:

- Monthly for organizations with active AI initiatives

- Quarterly for organizations with stable AI usage

- Ad hoc for urgent issues or incidents

Document the Structure

Create a governance charter that documents:

- Roles and responsibilities

- Decision-making authority

- Escalation procedures

- Reporting relationships

- Meeting schedules and agendas

This documentation becomes part of your audit evidence, demonstrating that governance is intentional, not accidental.

5. Pillar 2: Policy Foundation

Policies are the rules that govern AI usage. A complete policy foundation covers acceptable use, data handling, output review, incident response, and compliance requirements.

Core Policy Documents

AI Acceptable Use Policy: The foundational document defining how employees may use AI tools. Covers:

- Approved AI tools and platforms

- Prohibited uses and activities

- Data classification and handling

- Output review requirements

- Disclosure obligations

- Consequences for violations

For detailed guidance, see our AI Acceptable Use Policy Template.

Data Classification for AI: Framework defining what data can and cannot enter AI systems. Typically includes:

- Public: No restrictions

- Internal: May be used with approved enterprise AI tools

- Confidential: Restricted use with specific approvals

- Restricted/Regulated: Prohibited from AI systems without explicit exception
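The four tiers above can be expressed as a small decision rule. The following is a minimal sketch, not a definitive implementation: the names `DataClass` and `may_enter_ai_tool` are hypothetical, and the rule that confidential data also requires an approved tool is an assumption layered on top of the tier descriptions.

```python
from enum import Enum


class DataClass(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"
    RESTRICTED = "restricted"  # restricted or regulated data


def may_enter_ai_tool(
    data_class: DataClass,
    tool_is_approved: bool = False,
    has_specific_approval: bool = False,
    has_explicit_exception: bool = False,
) -> bool:
    """Return True if data of this class may be entered into the AI tool."""
    if data_class is DataClass.PUBLIC:
        return True  # Public: no restrictions
    if data_class is DataClass.INTERNAL:
        return tool_is_approved  # Internal: approved enterprise AI tools only
    if data_class is DataClass.CONFIDENTIAL:
        # Confidential: restricted use with specific approvals.
        # Assumption: the tool itself must also be approved.
        return tool_is_approved and has_specific_approval
    # Restricted/Regulated: prohibited without an explicit exception
    return has_explicit_exception
```

Encoding the tiers this way makes the default outcome a refusal: anything above public data is blocked unless the relevant approval flag is set.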

AI Output Review Policy: Requirements for reviewing AI-generated content before use. Addresses:

- When review is required

- Who is qualified to review

- What review should verify

- Documentation requirements

AI Incident Response Policy: Procedures for handling AI-related compliance incidents. Covers:

- Incident definition and classification

- Reporting procedures

- Investigation process

- Remediation requirements

- Documentation and lessons learned

Policy Development Principles

Be specific, not vague: “Employees should be careful with sensitive data” is not actionable. “Employees must not enter customer PII, financial data, or confidential business information into AI tools” is actionable.

Be realistic, not aspirational: Policies that employees cannot reasonably follow will be ignored. If your policy prohibits all AI use but employees need AI to do their jobs, you will have shadow AI. Design policies that enable productive use while managing risk.

Be current, not static: AI tools, regulations, and risks evolve rapidly. Build review cycles into your framework: review policies at least annually, and quarterly if your organization has significant AI usage.

Be consistent, not contradictory: AI policies must align with other organizational policies (data protection, information security, acceptable use, HR). Contradictions create confusion and compliance gaps.

Policy Approval and Communication

Policies are only effective if they are approved, communicated, and acknowledged.

Approval: Policies should be formally approved by appropriate authority (governance committee, executive sponsor, legal review). Document the approval with date, approver, and version number.

Communication: Policies must be actively communicated, not just posted somewhere. Methods include:

- Email announcement to all employees

- Training modules introducing the policy

- Manager briefings

- Intranet/wiki publication

- New employee onboarding

Acknowledgment: Employees should acknowledge they have received, read, and understood the policy. This creates audit evidence and establishes accountability. Digital acknowledgment with timestamp is preferred.

For guidance on employee-facing policies, see AI Policy for Employees.

6. Pillar 3: Risk Assessment

Risk assessment identifies what AI tools are in use, what risks they create, and how to prioritize governance efforts.

AI Inventory

You cannot govern what you cannot see. Start by inventorying AI tools across your organization.

Discovery methods:

- Technical: Network logs, SSO records, browser monitoring

- Procurement: Software licenses, expense reports, vendor contracts

- Survey: Department interviews, employee questionnaires

- Shadow IT review: Look for tools employees use without formal approval

Inventory data points:

- Tool name and vendor

- Business purpose

- Users (departments, roles, number)

- Data types processed

- Integration with other systems

- Approval status (sanctioned vs. shadow)
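The inventory data points above map naturally onto one record per tool. This is a sketch under stated assumptions: the `AIToolRecord` type, its field names, and the `shadow_tools` helper are all hypothetical, and a spreadsheet or GRC tool with the same columns would serve equally well.

```python
from dataclasses import dataclass, field


@dataclass
class AIToolRecord:
    tool_name: str
    vendor: str
    business_purpose: str
    departments: list[str]
    user_count: int
    data_types: list[str]
    integrations: list[str] = field(default_factory=list)
    sanctioned: bool = False  # False means the tool is shadow AI


def shadow_tools(inventory: list[AIToolRecord]) -> list[str]:
    """Names of tools in use without formal approval, sorted for stable reports."""
    return sorted(record.tool_name for record in inventory if not record.sanctioned)
```

Defaulting `sanctioned` to `False` means a newly discovered tool is treated as shadow AI until someone records its approval.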

Risk Classification

For each AI tool or use case, assess risk based on:

Data sensitivity:

- What data enters the AI system?

- Is it public, internal, confidential, or regulated?

- Does it include personal data subject to GDPR, HIPAA, or other regulations?

Decision impact:

- What decisions does the AI output inform?

- Are these decisions reversible or irreversible?

- Do they affect customers, employees, or external parties?

Autonomy level:

- Does a human review every output?

- Are some outputs used without review?

- Does the AI take actions automatically?

Regulatory exposure:

- Does use fall under EU AI Act high-risk categories?

- Are there industry-specific regulations (healthcare, finance)?

- What are the potential penalties for non-compliance?

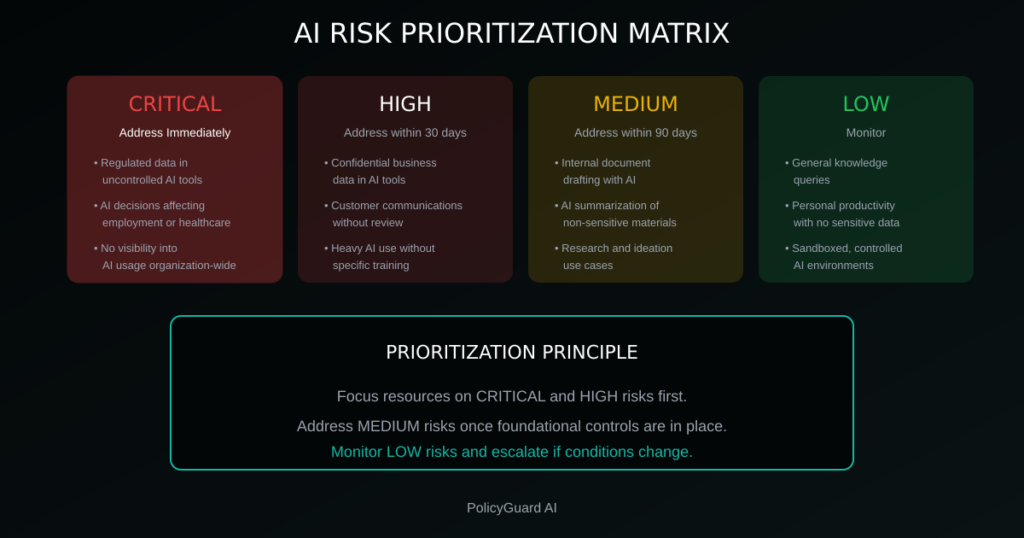

Risk Prioritization

Use a risk matrix to prioritize governance efforts:

Critical risks (address immediately):

- Regulated data in uncontrolled AI tools

- AI-assisted decisions affecting employment, credit, or healthcare

- No visibility into AI usage across the organization

High risks (address within 30 days):

- Confidential business data in AI tools

- AI-generated customer communications without review

- Departments with heavy AI use but no specific training

Medium risks (address within 90 days):

- Internal document drafting with AI

- AI summarization of non-sensitive materials

- Research and ideation use cases

Low risks (monitor):

- General knowledge queries

- Personal productivity with no sensitive data

- Sandboxed, controlled AI environments
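The tiers and deadlines above can be sketched as a simple classifier. Treat this as an illustration only: `classify_use_case`, its parameters, and the string labels for data sensitivity are hypothetical, and the decision to rate regulated data in a controlled tool as high rather than critical is an assumption your own risk matrix may not share.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    CRITICAL = "critical"
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


# Days allowed to address each tier; 0 means immediately, None means monitor only.
DEADLINE_DAYS: dict[RiskTier, Optional[int]] = {
    RiskTier.CRITICAL: 0,
    RiskTier.HIGH: 30,
    RiskTier.MEDIUM: 90,
    RiskTier.LOW: None,
}


def classify_use_case(
    data_sensitivity: str,  # "public", "internal", "confidential", or "regulated"
    tool_is_controlled: bool,
    affects_high_stakes_decisions: bool,  # employment, credit, or healthcare
    output_reviewed_by_human: bool,
    customer_facing: bool,
) -> RiskTier:
    """Assign a risk tier, checking the most severe conditions first."""
    if affects_high_stakes_decisions:
        return RiskTier.CRITICAL
    if data_sensitivity == "regulated" and not tool_is_controlled:
        return RiskTier.CRITICAL
    if data_sensitivity in ("confidential", "regulated"):
        return RiskTier.HIGH  # assumption: controlled regulated data is still high
    if customer_facing and not output_reviewed_by_human:
        return RiskTier.HIGH
    if data_sensitivity == "internal":
        return RiskTier.MEDIUM
    return RiskTier.LOW
```

Checking conditions from most to least severe ensures a use case that matches several tiers lands in the highest one.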

For comprehensive risk management guidance, see AI Risk Management: A Framework for Non-Technical Leaders.

7. Pillar 4: Training and Awareness

Training ensures employees understand AI policies, recognize risks, and know how to use AI responsibly.

Training Program Design

Baseline training (all employees):

- What AI governance means and why it matters

- Overview of AI acceptable use policy

- Data classification and handling requirements

- How to recognize AI errors and hallucinations

- Reporting procedures for concerns or incidents

- Consequences of policy violations

Role-specific training:

- Developers: Secure AI coding practices, IP considerations

- Customer-facing roles: AI disclosure requirements, output review

- HR: AI in hiring and employment decisions, bias risks

- Legal: Regulatory requirements, contract implications

- Executives: Governance oversight, liability considerations

Specialized training:

- High-risk AI users: Enhanced training for those using AI with sensitive data or high-stakes decisions

- Approvers: Training for those who approve AI use cases or exceptions

- Incident responders: Training for those who handle AI-related incidents

Training Delivery

Methods:

- E-learning modules: Scalable, trackable, self-paced

- Live sessions: Interactive, allows questions, builds culture

- Just-in-time: Reminders at point of AI use

- Refreshers: Regular updates as policies and tools evolve

Verification: Training without verification is attendance, not learning. Include:

- Quizzes to verify comprehension

- Minimum passing scores

- Remediation for those who fail

- Completion tracking and documentation
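The verification steps above reduce to a pass or remediate decision per employee. The sketch below assumes a hypothetical passing score of 80 percent and counts an employee's best attempt; `QuizAttempt` and `training_status` are illustrative names, not part of any particular training platform.

```python
from dataclasses import dataclass

PASSING_SCORE = 80  # hypothetical threshold; set this in your training policy


@dataclass
class QuizAttempt:
    employee_id: str
    score: int  # percentage, 0-100


def training_status(
    attempts: list[QuizAttempt], passing_score: int = PASSING_SCORE
) -> dict[str, str]:
    """Map each employee to 'complete' or 'remediation' based on their best score."""
    best: dict[str, int] = {}
    for attempt in attempts:
        previous = best.get(attempt.employee_id, 0)
        best[attempt.employee_id] = max(attempt.score, previous)
    return {
        employee: "complete" if score >= passing_score else "remediation"
        for employee, score in best.items()
    }
```

Employees who have not attempted the quiz do not appear in the result, so compare the output against your employee roster to find non-completers.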

Awareness Maintenance

Training is not a one-time event. Ongoing awareness maintains compliance culture.

Awareness methods:

- Regular communications (newsletter, email updates)

- Policy reminders at point of AI tool access

- Case studies of AI incidents (internal or industry)

- Recognition for compliance champions

- Governance updates from leadership

Refresh cadence:

- Annual: Full policy re-acknowledgment and training refresh

- Quarterly: Awareness communications and updates

- As needed: Training on new tools, policies, or regulations

8. Pillar 5: Enforcement and Controls

Policies and training set expectations. Enforcement ensures they are met. This pillar is where most frameworks fail.

The Enforcement Gap

Research shows:

- 80% of employees use AI tools their organization has not approved

- 59% actively hide their AI usage from employers

- 67% of leaders say employees use AI without formal guidance

These numbers persist because most organizations have policies but no enforcement. The gap between policy and enforcement is where compliance failures occur.

For more on this challenge, see Shadow AI: The Hidden Risk in Every Company.

Control Types

Policy controls:

- Documented policies that define acceptable use

- Acknowledgment requirements

- Regular policy reviews and updates

Technical controls:

- Browser extensions that prompt policy acknowledgment at AI tool access

- DLP tools configured for AI tool domains

- Network controls restricting unapproved AI tools

- Enterprise AI tools with built-in data protection

Procedural controls:

- Approval workflows for AI use in high-risk contexts

- Required human review before AI outputs are used

- Exception request and documentation processes

- Regular compliance audits by department

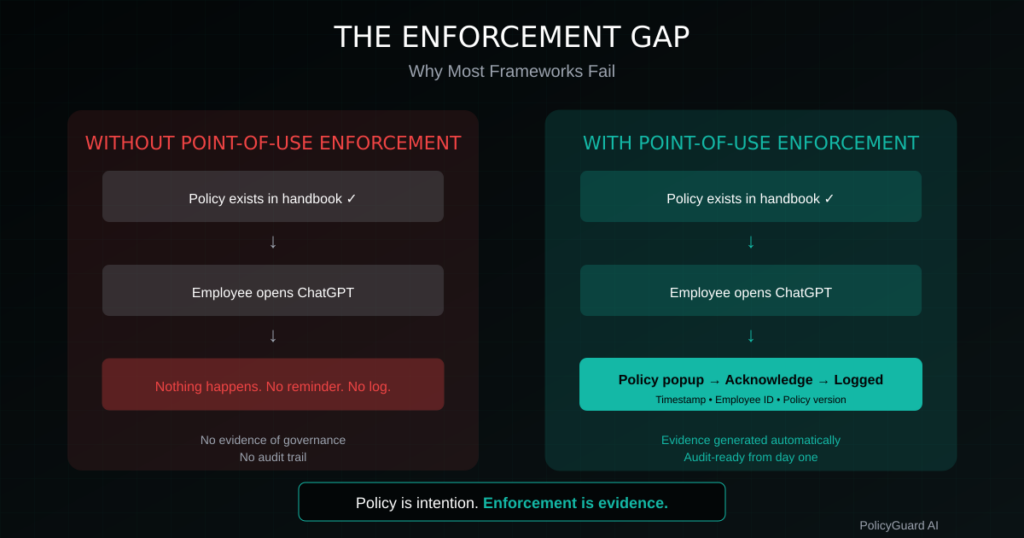

Point-of-use enforcement: This is the critical control most organizations lack. When an employee opens ChatGPT, what happens?

Without point-of-use enforcement:

- Nothing happens

- Employee uses AI without governance touchpoint

- No evidence of policy awareness

- No audit trail generated

With point-of-use enforcement:

- Policy highlights displayed

- Employee acknowledges before proceeding

- Timestamp recorded

- Audit trail created automatically

Point-of-use enforcement transforms governance from documentation to accountability.
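To make the audit trail concrete, here is a rough sketch of the record a point-of-use acknowledgment might produce. The field names, the `policy_acknowledged` event label, and the `record_acknowledgment` function are assumptions for illustration; a real browser extension or proxy would define its own schema.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def record_acknowledgment(
    employee_id: str,
    tool_domain: str,
    policy_version: str,
    now: Optional[datetime] = None,
) -> str:
    """Build one audit-log entry as a JSON line with a UTC timestamp."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    entry = {
        "event": "policy_acknowledged",
        "employee_id": employee_id,
        "tool_domain": tool_domain,
        "policy_version": policy_version,
        "timestamp": timestamp,
    }
    # sort_keys keeps the field order stable across entries
    return json.dumps(entry, sort_keys=True)
```

Recording the policy version alongside the timestamp lets you later show which version of the policy an employee acknowledged, not just that they acknowledged something.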

Control Implementation Priority

If you are building enforcement from scratch, prioritize in this order:

- Policy acknowledgment: Require all employees to acknowledge AI policy

- Training completion: Require AI governance training with verification

- Point-of-use reminders: Implement browser extension for policy acknowledgment at AI tool access

- Audit logging: Capture acknowledgments, training, and enforcement data

- Exception management: Create formal process for policy exceptions

This sequence builds foundational controls before advancing to more sophisticated enforcement.

9. Pillar 6: Monitoring and Audit

Monitoring tracks compliance status. Audit proves it to stakeholders.

Compliance Metrics

Track metrics that indicate framework health:

Policy metrics:

- Policy acknowledgment rate (target: 100%)

- Time to acknowledgment for new employees

- Policy version currency (are employees on the current version?)

Training metrics:

- Training completion rate (target: 100%)

- Average quiz scores

- Time to completion for new employees

- Refresher training compliance

Enforcement metrics:

- Point-of-use acknowledgment rate

- AI tools detected (sanctioned vs. shadow)

- Exception requests and approvals

- Policy violations detected

Incident metrics:

- Number of AI-related incidents

- Time to detection

- Time to resolution

- Remediation effectiveness
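Several of the metrics above are simple ratios or averages. The sketch below shows one way to compute an acknowledgment or completion rate and a mean time to resolution; the function names and the choice to report hours are assumptions, and your dashboard tool may calculate these differently.

```python
from datetime import datetime
from statistics import mean


def rate(completed: set[str], population: set[str]) -> float:
    """Percentage of the population that completed a requirement."""
    if not population:
        return 0.0
    # Intersect so that former employees in `completed` do not inflate the rate
    return round(100 * len(completed & population) / len(population), 1)


def mean_hours_to_resolution(incidents: list[tuple[datetime, datetime]]) -> float:
    """Average hours between detection and resolution for resolved incidents."""
    if not incidents:
        return 0.0
    return mean(
        (resolved - detected).total_seconds() / 3600
        for detected, resolved in incidents
    )
```

The same `rate` function covers policy acknowledgment, training completion, and refresher compliance, since each compares a set of completers against the current employee roster.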

Monitoring Cadence

Real-time:

- Point-of-use enforcement logs

- Shadow AI detection alerts

- Critical incident notifications

Weekly:

- Compliance dashboard review

- New employee onboarding status

- Training completion tracking

Monthly:

- Comprehensive metrics review

- Governance committee reporting

- Trend analysis and emerging risks

Quarterly:

- Framework effectiveness assessment

- Policy review and updates

- Risk reassessment

- Stakeholder reporting

Audit Readiness

The purpose of monitoring is audit readiness. When an auditor, regulator, or customer asks about AI governance, you should be able to produce evidence immediately.

Audit documentation:

- Current policies with version history

- Acknowledgment records (who, when, what version)

- Training completion records (who, when, scores)

- Enforcement logs (point-of-use acknowledgments)

- Exception documentation

- Incident records and remediation

- Governance committee meeting minutes

- Risk assessments and updates

Audit reporting: Your framework should generate audit reports on demand. Key reports:

- Employee compliance status (acknowledgments, training)

- AI tool inventory and risk classification

- Enforcement activity summary

- Incident history and resolution

- Policy version and review history
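As an illustration of the first report, an employee compliance status export could be assembled from acknowledgment and training records as follows. This is a sketch, not a prescribed format: the CSV columns, the `CURRENT_POLICY_VERSION` value, and the `compliance_report` function are hypothetical.

```python
import csv
import io

CURRENT_POLICY_VERSION = "2.0"  # hypothetical; read this from your policy register


def compliance_report(
    employees: list[str],
    acknowledged_versions: dict[str, str],
    training_complete: set[str],
) -> str:
    """Return a CSV with one row per employee showing compliance status."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["employee_id", "policy_current", "training_complete", "compliant"])
    for employee in sorted(employees):
        # An acknowledgment of an older version does not count as current
        policy_ok = acknowledged_versions.get(employee) == CURRENT_POLICY_VERSION
        trained = employee in training_complete
        writer.writerow([employee, policy_ok, trained, policy_ok and trained])
    return buffer.getvalue()
```

Because the report is derived from the underlying records each time it runs, it reflects the current state rather than a manually maintained snapshot.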

For detailed guidance on audit trails, see AI Audit Trail: What It Is and Why Regulators Want One.

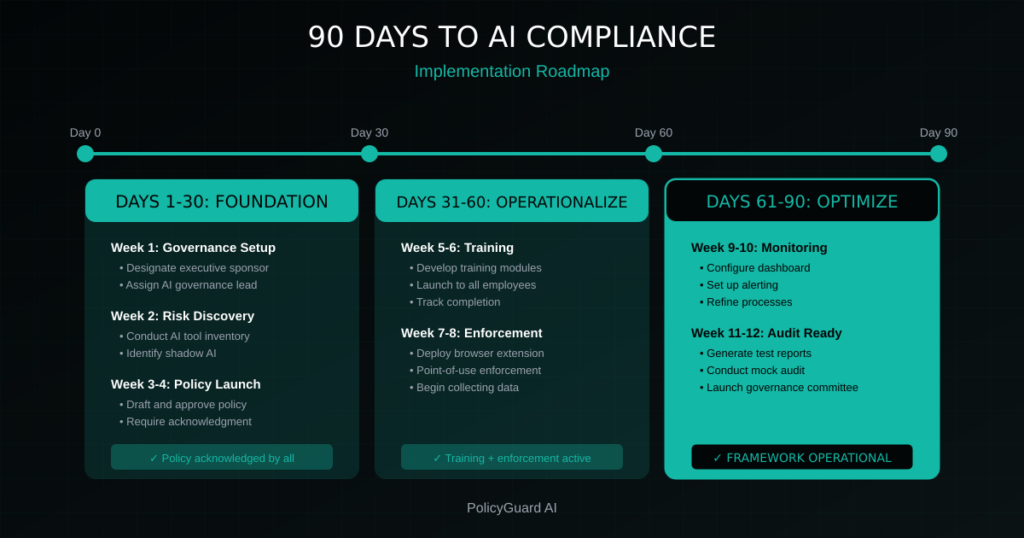

10. Implementation Roadmap: 90 Days to Compliance

Building a complete framework takes time, but you can achieve operational compliance in 90 days with focused effort.

Days 1-30: Foundation

Week 1: Governance setup

- Designate executive sponsor

- Assign AI governance lead

- Identify departmental owners

- Draft governance charter

Week 2: Risk discovery

- Conduct AI tool inventory

- Survey departments on AI usage

- Identify shadow AI tools

- Document initial risk assessment

Week 3: Policy development

- Draft AI acceptable use policy

- Draft data classification for AI

- Review with legal and compliance

- Prepare policy acknowledgment process

Week 4: Policy launch

- Obtain executive approval

- Communicate policy to all employees

- Require policy acknowledgment

- Track acknowledgment completion

Day 30 milestone: Policy acknowledged by all employees

Days 31-60: Operationalization

Week 5: Training development

- Develop baseline AI governance training

- Include quiz for verification

- Prepare role-specific modules (if needed)

- Set up training tracking

Week 6: Training launch

- Assign training to all employees

- Set completion deadlines

- Track completion rates

- Remediate non-completers

Week 7: Enforcement implementation

- Deploy point-of-use enforcement (browser extension)

- Configure for high-priority AI tools

- Test with pilot group

- Refine based on feedback

Week 8: Full enforcement rollout

- Deploy to all employees

- Monitor for issues

- Address technical problems

- Begin collecting enforcement data

Day 60 milestone: Training complete, enforcement active

Days 61-90: Optimization

Week 9: Monitoring setup

- Configure compliance dashboard

- Set up alerting for critical metrics

- Establish reporting cadence

- Train administrators on tools

Week 10: Process refinement

- Review initial compliance data

- Identify gaps and issues

- Refine policies based on feedback

- Update training as needed

Week 11: Audit preparation

- Generate test audit reports

- Verify documentation completeness

- Conduct mock audit

- Address gaps identified

Week 12: Governance committee launch

- Hold first governance committee meeting

- Review framework status

- Establish ongoing meeting cadence

- Document continuous improvement plan

Day 90 milestone: Complete framework operational, audit-ready

11. Common Framework Mistakes to Avoid

Organizations building AI compliance frameworks often make predictable mistakes. Awareness helps you avoid them.

Mistake 1: Starting with technology, not governance

The error: Buying AI governance tools before establishing governance structure, policies, and processes.

Why it fails: Tools cannot compensate for missing governance fundamentals. You end up with expensive technology enforcing unclear policies with no one accountable.

The fix: Establish governance structure and policies first. Then select tools that support your framework.

Mistake 2: Creating policies no one can follow

The error: Writing aspirational policies disconnected from operational reality.

Why it fails: Employees cannot comply with unrealistic requirements. They either ignore the policy or work around it, creating shadow AI.

The fix: Involve operational stakeholders in policy development. Test policies against real use cases before finalizing.

Mistake 3: Training without verification

The error: Requiring training completion but not verifying comprehension.

Why it fails: Clicking through slides is not learning. Employees complete training without understanding, then violate policies unknowingly.

The fix: Include quizzes with minimum passing scores. Require remediation for failures. Verify comprehension, not just attendance.

Mistake 4: Policy without enforcement

The error: Investing in policies and training but not implementing enforcement controls.

Why it fails: Policies and training set expectations. Only enforcement ensures they are met. Without enforcement, compliance depends on individual motivation, which varies.

The fix: Implement point-of-use enforcement. Create accountability at the moment AI tools are accessed.

Mistake 5: One-time compliance

The error: Building a framework and considering it done.

Why it fails: AI tools, regulations, and risks evolve rapidly. A framework built in January may be outdated by June. Compliance degrades without maintenance.

The fix: Build continuous improvement into your framework. Regular reviews, updates, and refresh cycles keep governance current.

Mistake 6: Treating AI compliance as an IT project

The error: Delegating AI compliance entirely to IT or technical teams.

Why it fails: AI compliance is a business risk with legal, HR, compliance, and operational dimensions. Technical controls are necessary but not sufficient.

The fix: Ensure business leadership owns AI compliance with IT providing technical support. Cross-functional governance is essential.

12. How PolicyGuard Accelerates Framework Implementation

PolicyGuard provides the infrastructure to implement your AI compliance framework faster and maintain it with less effort.

Policy Foundation

PolicyGuard includes 19+ expert-curated policy templates covering:

- AI acceptable use

- Data classification for AI

- Role-specific policies

- Regulatory compliance (GDPR, EU AI Act, HIPAA, SOC 2)

Policies are written by compliance professionals, not generated by AI. You can customize templates for your organization and deploy within days.

Training and Awareness

Built-in training modules cover AI governance fundamentals with:

- Interactive content

- Quizzes for verification

- Completion tracking

- Automated reminders for non-completers

Training integrates with policies, so employees learn the rules you have established.

Enforcement and Controls

PolicyGuard’s browser extension provides point-of-use enforcement:

- Detects access to 80+ AI tools

- Displays policy highlights

- Requires acknowledgment before proceeding

- Logs every interaction

This creates the enforcement layer most frameworks lack.

Monitoring and Audit

Real-time compliance dashboard shows:

- Policy acknowledgment status

- Training completion rates

- Enforcement activity

- Compliance gaps requiring attention

One-click audit reports generate documentation for regulators, auditors, or customers on demand.

The Result

What typically takes 6-12 months to build internally, PolicyGuard enables in weeks. You get:

- Framework infrastructure without building from scratch

- Policies without starting from blank documents

- Enforcement without custom development

- Audit readiness from day one

Start your free 14-day trial and accelerate your AI compliance framework implementation.

13. Frequently Asked Questions

What is an AI compliance framework? An AI compliance framework is a structured system of policies, procedures, controls, and documentation that governs how your organization uses artificial intelligence. It connects governance structure, policies, risk assessment, training, enforcement, and monitoring into an integrated system that ensures compliance and generates evidence.

Why do I need a framework instead of just policies? Policies define the rules. A framework ensures the rules are followed and creates evidence that they are. Without a framework, policies are documentation. With a framework, policies become operational compliance. Regulators and auditors expect demonstrable compliance, not just documented policies.

What are the main components of an AI compliance framework? A complete framework has six pillars: governance structure (who owns compliance), policy foundation (what are the rules), risk assessment (what are the risks), training and awareness (how employees learn the rules), enforcement and controls (how rules are enforced), and monitoring and audit (how compliance is tracked and proven).

How long does it take to build an AI compliance framework? With focused effort, you can achieve operational compliance in 90 days. The first 30 days establish governance and launch policies. Days 31-60 operationalize training and enforcement. Days 61-90 optimize monitoring and achieve audit readiness. Ongoing maintenance continues indefinitely.

Who should own AI compliance in an organization? AI compliance needs an executive sponsor (typically the CISO, Chief Compliance Officer, Chief Risk Officer, or General Counsel) for accountability and authority, an AI governance lead for day-to-day management, and departmental owners for team-level compliance. A cross-functional governance committee coordinates across the organization.

How do I enforce AI policies? Enforcement requires controls beyond documentation. Key controls include policy acknowledgment requirements, training with verified completion, point-of-use enforcement (browser extensions that prompt acknowledgment when accessing AI tools), and procedural controls like approval workflows. Point-of-use enforcement is the most critical and most often missing control.

What evidence do auditors expect from an AI compliance framework? Auditors expect documentation of policies, acknowledgment records showing employees received and acknowledged policies, training completion records with verification, enforcement logs showing governance is active, exception documentation, incident records, and governance committee activities. Your framework should generate this evidence automatically.

Related Resources

- The Complete Guide to AI Policy and Governance for Companies — The pillar guide covering all aspects of AI governance

- AI Acceptable Use Policy Template: A Complete Guide — How to create the foundational policy document

- AI Policy for Employees: What to Include and How to Enforce It — Detailed guidance on employee-facing AI policies

- AI Risk Management: A Framework for Non-Technical Leaders — Risk assessment and prioritization

- Shadow AI: The Hidden Risk in Every Company — Understanding and governing unapproved AI tool usage

- AI Audit Trail: What It Is and Why Regulators Want One — Building audit-ready AI governance documentation

- EU AI Act Compliance: What Companies Need to Do — Regulatory requirements affecting AI governance