This guide is part of our Complete Guide to AI Policy and Governance for Companies, the central resource for everything you need to know about AI compliance in 2026.

You do not need to understand how large language models work to manage the risks they create.

AI risk management is not a technical discipline. It is a business discipline. The same executives who manage financial risk, operational risk, and reputational risk can manage AI risk using frameworks they already understand.

This guide provides a practical AI risk management framework designed for non-technical leaders: board members, executives, compliance officers, HR directors, and legal counsel. No machine learning background required. Just clear thinking about risk, controls, and accountability.

TABLE OF CONTENTS:

- Why AI Risk Management Is a Leadership Issue

- The 5 Categories of AI Risk

- The AI Risk Management Framework

- Step 1: Identify AI Exposure

- Step 2: Assess Risk Severity

- Step 3: Implement Controls

- Step 4: Monitor and Detect

- Step 5: Respond and Improve

- Who Owns AI Risk in Your Organization?

- Common Mistakes Leaders Make

- How PolicyGuard Supports AI Risk Management

- Frequently Asked Questions

1. Why AI Risk Management Is a Leadership Issue

AI risk is not an IT problem. It is a business problem that happens to involve technology.

When an employee pastes confidential client data into ChatGPT, that is a data protection issue. When AI-generated content contains errors that affect customers, that is a quality and liability issue. When your organization cannot demonstrate AI governance to regulators, that is a compliance issue.

These are leadership issues. They belong in the boardroom, not just the server room.

Three forces have elevated AI risk to a leadership priority:

Force 1: Ubiquitous adoption

AI tools are no longer experimental. ChatGPT reached an estimated 100 million users within about two months of launch, at the time the fastest adoption of any consumer application on record. Your employees are using AI tools whether you have sanctioned them or not. Research shows 80% of employees use AI tools their organization has not approved, and 59% actively hide their usage.

This is not a future risk to plan for. It is a current exposure to manage.

Force 2: Regulatory pressure

The EU AI Act's first obligations began applying in February 2025, and its penalties reach up to €35 million or 7% of global annual turnover, whichever is higher. GDPR authorities issued €1.2 billion in fines in 2024. Regulators are no longer asking “Do you have an AI policy?” They are asking “Can you prove it is followed?”

Force 3: Stakeholder expectations

Customers, partners, and investors increasingly expect organizations to demonstrate responsible AI governance. “How do you manage AI risk?” is becoming a standard question in vendor assessments, partnership discussions, and board meetings.

Leaders who treat AI risk as “an IT thing” are delegating accountability for a business-critical issue. Leaders who own AI risk are positioned to turn governance into competitive advantage.

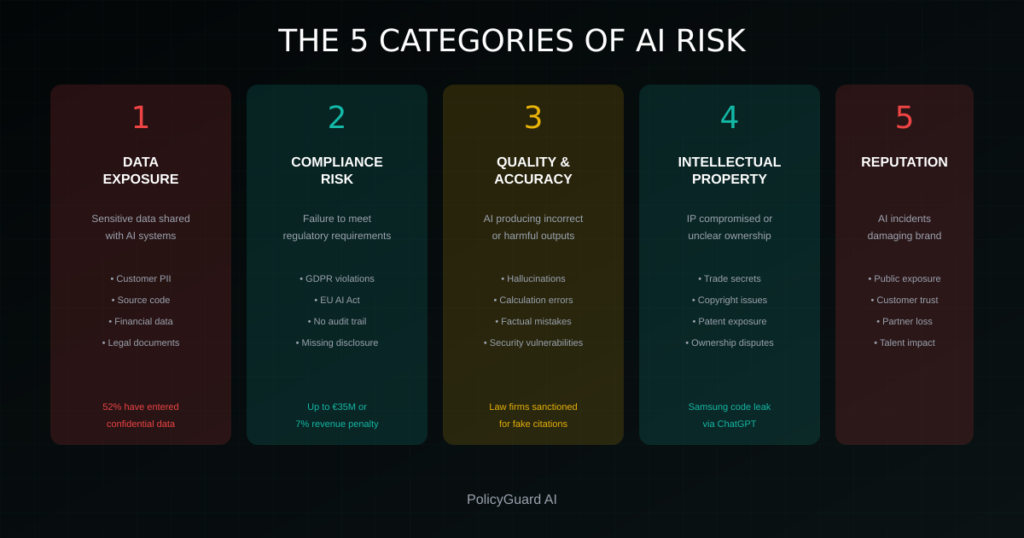

2. The 5 Categories of AI Risk

AI risk is not monolithic. It breaks down into five distinct categories, each with different drivers, impacts, and controls.

Category 1: Data Exposure Risk

What it is: Sensitive information being shared with AI systems, potentially exposing it to unauthorized access, training data inclusion, or breach.

Examples:

- Employee pastes customer PII into ChatGPT

- Developer shares proprietary source code with AI coding assistant

- Legal team uploads confidential contracts for AI summarization

- HR enters employee performance data into AI analysis tool

Business impact: Data breaches, regulatory fines, loss of intellectual property, competitive disadvantage, customer trust erosion.

Key statistic: 52% of employees have entered confidential company data into AI tools.

Category 2: Compliance Risk

What it is: Failure to meet regulatory requirements related to AI usage, data processing, transparency, or documentation.

Examples:

- Processing personal data through AI without proper legal basis (GDPR violation)

- Using AI in high-risk contexts without required documentation (EU AI Act violation)

- Failing to disclose AI-generated content where required

- Inability to produce audit evidence when requested

Business impact: Regulatory fines, enforcement actions, operational restrictions, reputational damage.

Key statistic: EU AI Act penalties for the most serious violations can reach €35 million or 7% of global annual turnover, whichever is higher.

Category 3: Quality and Accuracy Risk

What it is: AI systems producing incorrect, misleading, or harmful outputs that affect business decisions or customer experiences.

Examples:

- AI-generated legal citations that do not exist (hallucinations)

- Financial analysis based on AI outputs with calculation errors

- Customer communications with factual inaccuracies

- Code suggestions that introduce security vulnerabilities

Business impact: Professional liability, customer harm, operational failures, malpractice claims.

Key statistic: Multiple law firms have faced sanctions for submitting court filings with AI-generated citations to non-existent cases.

Category 4: Intellectual Property Risk

What it is: AI usage that compromises proprietary information, creates unclear ownership of outputs, or infringes on third-party rights.

Examples:

- Trade secrets shared with AI systems potentially accessible to competitors

- Uncertainty about copyright ownership of AI-generated content

- AI outputs that inadvertently reproduce copyrighted material

- Patent-sensitive information exposed through AI tool usage

Business impact: Loss of trade secret protection, IP disputes, licensing complications, competitive disadvantage.

Category 5: Reputational Risk

What it is: AI-related incidents that damage organizational reputation, brand trust, or stakeholder confidence.

Examples:

- Public disclosure of data exposure through AI tools

- AI-generated content that is offensive, biased, or inappropriate

- High-profile compliance failures related to AI governance

- Employee actions with AI that contradict organizational values

Business impact: Customer attrition, partnership losses, talent acquisition challenges, stock price impact, brand damage.

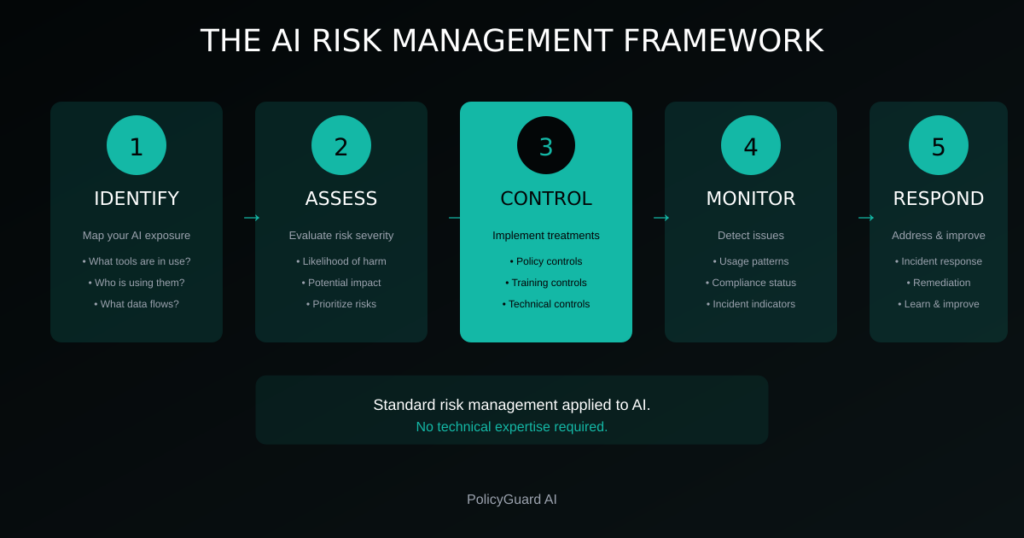

3. The AI Risk Management Framework

Effective AI risk management follows the same structure as any risk management discipline: identify, assess, control, monitor, respond.

The framework has five steps:

- Identify: Map your AI exposure. What tools are in use? Who is using them? What data flows through them?

- Assess: Evaluate risk severity. Which exposures create the highest potential impact? What is the likelihood of harm?

- Control: Implement risk treatments. Policies, training, technical controls, and enforcement mechanisms.

- Monitor: Detect issues. Ongoing visibility into AI usage, compliance status, and emerging risks.

- Respond: Address incidents. Clear procedures for when things go wrong, plus continuous improvement.

This is not new. It is standard risk management applied to a new risk category. The difference is that AI risk often spans multiple traditional risk domains (data, compliance, operational, reputational) and requires a coordinated response.

4. Step 1: Identify AI Exposure

You cannot manage risk you cannot see. The first step is mapping your organization’s AI exposure.

What to Identify

AI tools in use:

- Sanctioned/approved AI tools (ChatGPT Enterprise, Microsoft Copilot, etc.)

- Unsanctioned/shadow AI tools employees use without approval

- AI features embedded in existing software (increasingly common)

Who is using AI:

- Which departments have the heaviest AI usage

- Which roles interact with AI most frequently

- Which employees have received AI governance training

Data flows:

- What types of data enter AI systems

- How sensitive is that data (public, internal, confidential, regulated)

- Where does AI-processed data go afterward

Use cases:

- What tasks are employees using AI for

- Which use cases involve sensitive data or high-stakes decisions

- Which use cases have no human review of AI outputs
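
One way to keep these answers in a consistent shape is a simple inventory record per AI exposure. The sketch below is illustrative only: the field names, the sensitivity scale, and the `needs_attention` rule are assumptions made for this example, not a prescribed schema.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    # Mirrors the four data classes named above, least to most sensitive.
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    REGULATED = 4


@dataclass
class AIExposure:
    tool: str                      # which AI tool is in use
    sanctioned: bool               # approved by the organization?
    department: str                # who is using it
    use_case: str                  # what task it supports
    data_sensitivity: Sensitivity  # what kind of data enters the tool
    human_review: bool             # is AI output reviewed before use?


def needs_attention(e: AIExposure) -> bool:
    """Assumed rule: sensitive data plus a shadow tool or no human review."""
    sensitive = e.data_sensitivity.value >= Sensitivity.CONFIDENTIAL.value
    return sensitive and (not e.sanctioned or not e.human_review)


# Hypothetical inventory entries for illustration.
inventory = [
    AIExposure("ChatGPT Enterprise", True, "Marketing",
               "draft blog posts", Sensitivity.INTERNAL, True),
    AIExposure("Unapproved summarizer", False, "Legal",
               "contract summaries", Sensitivity.CONFIDENTIAL, False),
]

flagged = [e.tool for e in inventory if needs_attention(e)]
print(flagged)
```

A spreadsheet with the same columns works just as well; the point is that every exposure gets the same set of questions answered.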

How to Identify

Technical discovery:

- Network logs showing connections to AI tool domains

- Browser extension monitoring (like PolicyGuard) that detects AI tool access

- SSO/authentication logs for sanctioned enterprise AI tools

Human discovery:

- Employee surveys asking about AI tool usage

- Department interviews with managers

- Review of tool procurement and expense reports

Gap to watch: Technical discovery reveals behavior. Human discovery reveals intent. You need both. Employees may use AI tools you cannot see technically (personal devices, mobile apps), and technical logs may not reveal what data employees are entering.

The goal of identification is a clear picture: What AI tools are in our environment? Who uses them? What data do they touch? Where are the gaps in our visibility?
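
For teams that want to see what technical discovery looks like in practice, here is a minimal sketch of counting visits to AI tool domains from a web proxy log. The log format (`department,url` per line) and the short domain watch list are assumptions for illustration; a real deployment would use whatever your proxy or monitoring tool exports and a much longer, maintained list.

```python
from collections import Counter
from urllib.parse import urlparse

# Illustrative watch list only; real lists are longer and change over time.
AI_TOOL_DOMAINS = {"chatgpt.com", "claude.ai", "gemini.google.com"}


def is_ai_domain(host: str) -> bool:
    """True if host is a watched domain or one of its subdomains."""
    host = host.lower()
    return any(host == d or host.endswith("." + d) for d in AI_TOOL_DOMAINS)


def count_ai_visits(log_lines):
    """Count visits per (department, host) from 'department,url' lines."""
    counts = Counter()
    for line in log_lines:
        department, _, url = line.strip().partition(",")
        host = urlparse(url).hostname or ""
        if is_ai_domain(host):
            counts[(department, host)] += 1
    return counts


# Hypothetical log extract.
sample_log = [
    "Legal,https://chatgpt.com/c/123",
    "Legal,https://chatgpt.com/c/456",
    "Sales,https://claude.ai/new",
    "Sales,https://example.com/pricing",
]

visits = count_ai_visits(sample_log)
for (department, host), n in sorted(visits.items()):
    print(department, host, n)
```

Note what this does not show: what data employees typed into those tools, or any usage on personal devices. That is the gap human discovery has to fill.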

5. Step 2: Assess Risk Severity

Not all AI exposure creates equal risk. Assessment prioritizes where to focus controls and resources.

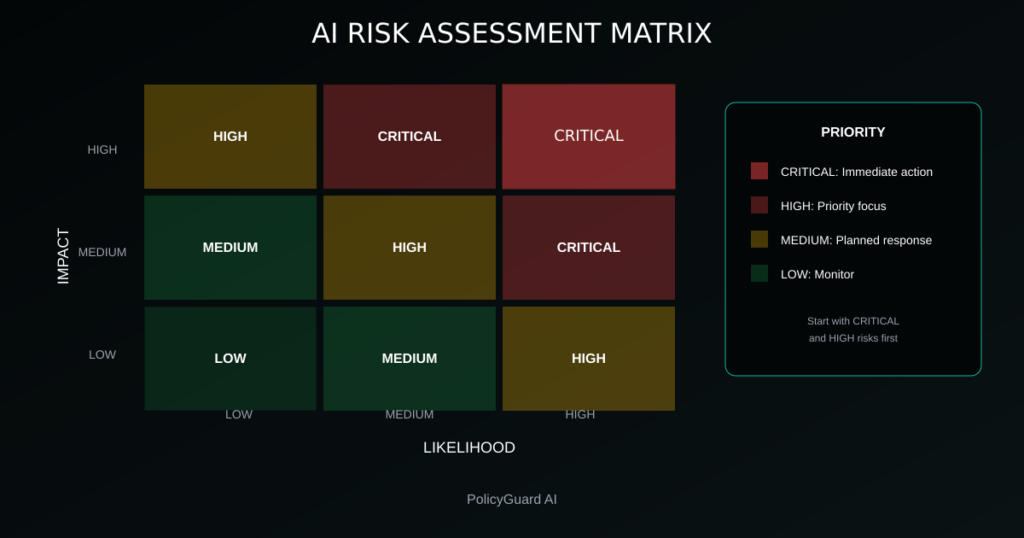

Risk Assessment Matrix

Assess each identified AI exposure on two dimensions:

Likelihood: How probable is a negative outcome?

- High: Exposure involves frequent use, sensitive data, or no controls

- Medium: Moderate use with some controls in place

- Low: Limited use, non-sensitive data, strong controls

Impact: How severe would a negative outcome be?

- High: Regulatory penalties, significant financial loss, major reputational damage

- Medium: Operational disruption, moderate financial impact, recoverable reputation impact

- Low: Minor inconvenience, minimal financial impact, no lasting damage

Risk Severity Categories

Critical (High Likelihood + High Impact):

- Confidential data regularly entering uncontrolled AI tools

- AI usage in regulated contexts (healthcare, finance) without documentation

- No visibility into AI tool usage across the organization

High (High Likelihood + Medium Impact OR Medium Likelihood + High Impact):

- Employees using AI for customer-facing content without review

- AI coding assistants with access to proprietary codebases

- Departments with heavy AI use but no specific training

Medium (Medium Likelihood + Medium Impact):

- Internal document drafting with AI assistance

- AI summarization of non-sensitive materials

- Research and ideation use cases

Low (Low Likelihood + Low Impact):

- AI used for general knowledge queries

- Personal productivity uses with no sensitive data

- Highly controlled, sandboxed AI environments

Prioritization

Focus your initial efforts on Critical and High severity risks. This is where controls deliver the greatest risk reduction per unit of effort.

Do not ignore Medium and Low risks, but address them after Critical and High risks have controls in place.
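
The matrix and the prioritization rule can be expressed in a few lines. The sketch below scores likelihood and impact from 1 to 3 and adds them, which reproduces the four combinations defined above. The combinations the matrix does not define (for example, High likelihood with Low impact) are handled by the same sum, which is an assumption of this example rather than part of the framework. The sample exposures are taken from the category examples above.

```python
LEVELS = {"low": 1, "medium": 2, "high": 3}
ORDER = ["Critical", "High", "Medium", "Low"]


def severity(likelihood: str, impact: str) -> str:
    """Map a likelihood/impact pair to a severity category."""
    score = LEVELS[likelihood] + LEVELS[impact]
    if score == 6:   # high + high
        return "Critical"
    if score == 5:   # high + medium, in either order
        return "High"
    if score == 4:   # medium + medium (assumption: also high + low)
        return "Medium"
    return "Low"     # low + low (assumption: also medium + low)


# (exposure, likelihood, impact)
exposures = [
    ("Internal document drafting", "medium", "medium"),
    ("Confidential data in uncontrolled tools", "high", "high"),
    ("General knowledge queries", "low", "low"),
    ("Customer-facing content without review", "high", "medium"),
]

# Work the list from Critical down to Low.
ranked = sorted(exposures, key=lambda e: ORDER.index(severity(e[1], e[2])))
for name, likelihood, impact in ranked:
    print(f"{severity(likelihood, impact):8} {name}")
```

If your organization already uses a different scale for financial or operational risk, reuse that scale here so AI risk appears in the same register as everything else.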

6. Step 3: Implement Controls

Controls are the treatments you apply to reduce risk. For AI risk, controls fall into four categories.

Control Type 1: Policy Controls

Define what is and is not acceptable regarding AI usage.

Essential policy elements:

- Which AI tools are approved for use

- What data can and cannot be entered into AI tools

- Required review processes for AI-generated outputs

- Disclosure requirements for AI-assisted work

- Consequences for policy violations

Policy effectiveness depends on: Communication, acknowledgment, and training. A policy that exists but is not known or understood provides no risk reduction.

For detailed guidance, see our AI Acceptable Use Policy Template and AI Policy for Employees.

Control Type 2: Training Controls

Ensure employees understand AI risks and know how to work responsibly with AI tools.

Training should cover:

- What data is appropriate to share with AI (and what is not)

- How to recognize AI errors and hallucinations

- When human review of AI outputs is required

- How to report AI-related concerns or incidents

- Specific requirements for regulated data (HIPAA, GDPR, etc.)

Training effectiveness depends on: Relevance, frequency, and verification. Annual compliance videos are less effective than role-specific guidance reinforced regularly.

Control Type 3: Technical Controls

Use technology to enforce policy and reduce human error.

Technical control options:

- Browser extensions that prompt policy acknowledgment at AI tool access

- Data loss prevention (DLP) tools configured for AI tool domains

- Network controls that restrict access to unapproved AI tools

- Enterprise AI tools with built-in data protection (vs. consumer versions)

- Logging and monitoring of AI tool usage

Technical effectiveness depends on: Coverage and usability. Controls that are easily bypassed or create excessive friction often fail.
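
To make the idea of a technical control concrete, here is a deliberately simplified sketch of the kind of pattern check a DLP rule might run on text before it reaches an AI tool. The two patterns and the `find_sensitive` helper are assumptions for illustration; commercial DLP products use far richer detection, and nothing here reflects any specific vendor's implementation.

```python
import re

# Simplified, illustrative patterns; they will miss cases and raise false alarms.
PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "US SSN-like number": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_sensitive(text: str) -> list:
    """Return the labels of every pattern that appears in the text."""
    return [label for label, pattern in PATTERNS.items() if pattern.search(text)]


# Hypothetical prompt an employee is about to submit.
prompt = "Summarize the complaint from jane.doe@example.com, SSN 123-45-6789."
findings = find_sensitive(prompt)
print(findings)
```

A check like this can warn or block, and the choice matters for usability: a warning that explains the policy tends to create less friction than a silent block.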

Control Type 4: Process Controls

Build AI governance into business workflows.

Process control examples:

- Required human review before AI-generated content is published

- Approval workflows for AI use in high-risk contexts

- Regular AI usage audits by department

- Incident response procedures for AI-related issues

- Periodic policy and risk assessment reviews

Process effectiveness depends on: Integration with existing workflows and clear accountability. Processes that feel like extra work get skipped.

Control Implementation Priority

Start with high-impact, low-friction controls:

- Policy: Create and communicate a clear AI acceptable use policy

- Acknowledgment: Require employees to acknowledge the policy

- Training: Provide AI risk awareness training

- Technical: Implement point-of-use reminders (browser extension)

- Audit trail: Capture evidence of acknowledgment and training

This foundation addresses Critical and High risks while building infrastructure for more advanced controls.

7. Step 4: Monitor and Detect

Controls are not “set and forget.” Ongoing monitoring detects control failures, emerging risks, and compliance drift.

What to Monitor

AI usage patterns:

- Volume of AI tool usage across the organization

- Departments or individuals with unusual usage patterns

- New AI tools appearing in the environment

Compliance status:

- Policy acknowledgment rates

- Training completion rates

- Enforcement control effectiveness

Incident indicators:

- Reports of AI-related errors or issues

- Near-misses or control bypasses

- External signals (news about AI risks, regulatory guidance)

Monitoring Methods

Automated monitoring:

- Dashboard tracking policy acknowledgment and training completion

- Alerts for new AI tools detected in network traffic

- Periodic reports on AI governance metrics

Manual monitoring:

- Quarterly reviews of AI risk assessments

- Department check-ins on AI usage and challenges

- Review of AI-related incidents and near-misses

External monitoring:

- Regulatory guidance and enforcement actions

- Industry incidents and lessons learned

- Evolving best practices and standards

Red Flags to Watch

- Policy acknowledgment rates below 90%

- Departments with high AI usage but low training completion

- Increasing use of unsanctioned AI tools

- Reports of AI errors affecting customers or decisions

- Inability to produce audit documentation quickly

These signals indicate control gaps that need attention before they become incidents.
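
Several of these red flags can be checked automatically from metrics most governance dashboards already collect. The sketch below is one possible shape. The 90% acknowledgment threshold comes from the list above; the training threshold, the definition of "high usage", and all field names and figures are assumptions for this example.

```python
ACK_THRESHOLD = 0.90        # from the red-flag list above
TRAINING_THRESHOLD = 0.80   # assumption: what counts as "low" training completion
HIGH_USAGE_SESSIONS = 100   # assumption: monthly sessions that count as "high" usage


def red_flags(dept: dict) -> list:
    """Return the red flags raised by one department's metrics."""
    flags = []
    if dept["acknowledged"] / dept["headcount"] < ACK_THRESHOLD:
        flags.append("policy acknowledgment below 90%")
    trained_rate = dept["trained"] / dept["headcount"]
    if dept["ai_sessions"] >= HIGH_USAGE_SESSIONS and trained_rate < TRAINING_THRESHOLD:
        flags.append("high AI usage but low training completion")
    if dept["unsanctioned_sessions"] > dept["unsanctioned_sessions_prev"]:
        flags.append("increasing use of unsanctioned AI tools")
    return flags


# Hypothetical monthly metrics.
departments = {
    "Legal": {"headcount": 20, "acknowledged": 19, "trained": 10,
              "ai_sessions": 340, "unsanctioned_sessions": 12,
              "unsanctioned_sessions_prev": 5},
    "Finance": {"headcount": 10, "acknowledged": 8, "trained": 9,
                "ai_sessions": 40, "unsanctioned_sessions": 0,
                "unsanctioned_sessions_prev": 0},
    "HR": {"headcount": 5, "acknowledged": 5, "trained": 5,
           "ai_sessions": 10, "unsanctioned_sessions": 0,
           "unsanctioned_sessions_prev": 0},
}

report = {name: red_flags(metrics) for name, metrics in departments.items()}
for name, flags in report.items():
    print(name, flags or "no red flags")
```

The remaining red flags (customer-affecting errors, slow audit documentation) depend on human reporting and are better tracked through incident and audit processes.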

8. Step 5: Respond and Improve

Even with strong controls, incidents will occur. Response capability determines whether incidents are minor setbacks or major crises.

Incident Response Framework

Detection: How you learn about AI-related incidents

- Employee reports through defined channels

- Monitoring alerts from technical controls

- External notification (customer complaint, regulatory inquiry)

Assessment: Evaluating incident severity and scope

- What data or systems are affected?

- What is the potential impact?

- Does the incident trigger a regulatory notification requirement?

Containment: Stopping ongoing harm

- Revoking access if needed

- Preserving evidence

- Notifying affected parties if required

Remediation: Fixing the underlying issue

- Addressing the specific incident

- Closing the control gap that allowed it

- Updating policies or training as needed

Documentation: Recording what happened and what you did

- Incident timeline and facts

- Actions taken

- Lessons learned

- Evidence of response (for regulatory purposes)
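
The documentation step is easier to sustain when every incident is recorded in the same structure. Here is a minimal sketch of such a record, which also reports which response stages have not yet been logged. The class, its fields, and the sample incident are hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass, field
from datetime import datetime

# The response stages from the framework above that precede documentation.
STAGES = ["detection", "assessment", "containment", "remediation"]


@dataclass
class IncidentRecord:
    summary: str
    timeline: list = field(default_factory=list)         # (when, stage, action)
    lessons_learned: list = field(default_factory=list)

    def log(self, when: datetime, stage: str, action: str) -> None:
        """Append a timestamped action under one of the known stages."""
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        self.timeline.append((when, stage, action))

    def missing_stages(self) -> list:
        """Stages with no logged action yet, in framework order."""
        done = {stage for _, stage, _ in self.timeline}
        return [s for s in STAGES if s not in done]


# Hypothetical incident for illustration.
incident = IncidentRecord("Customer PII pasted into an unapproved AI tool")
incident.log(datetime(2026, 1, 12, 9, 30), "detection",
             "Employee self-reported through the helpdesk")
incident.log(datetime(2026, 1, 12, 10, 15), "assessment",
             "Confirmed scope of affected customer records")
incident.log(datetime(2026, 1, 12, 11, 0), "containment",
             "Blocked the tool and preserved browser logs")

print(incident.missing_stages())
```

A record with timestamps for each stage doubles as the evidence of response that regulators and auditors ask for.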

Continuous Improvement

Every incident is a learning opportunity. After remediation:

- Conduct a brief post-incident review

- Identify what controls failed or were missing

- Update risk assessments based on new information

- Adjust controls to prevent recurrence

- Share lessons (appropriately anonymized) across the organization

Organizations that learn from incidents build increasingly resilient AI governance over time.

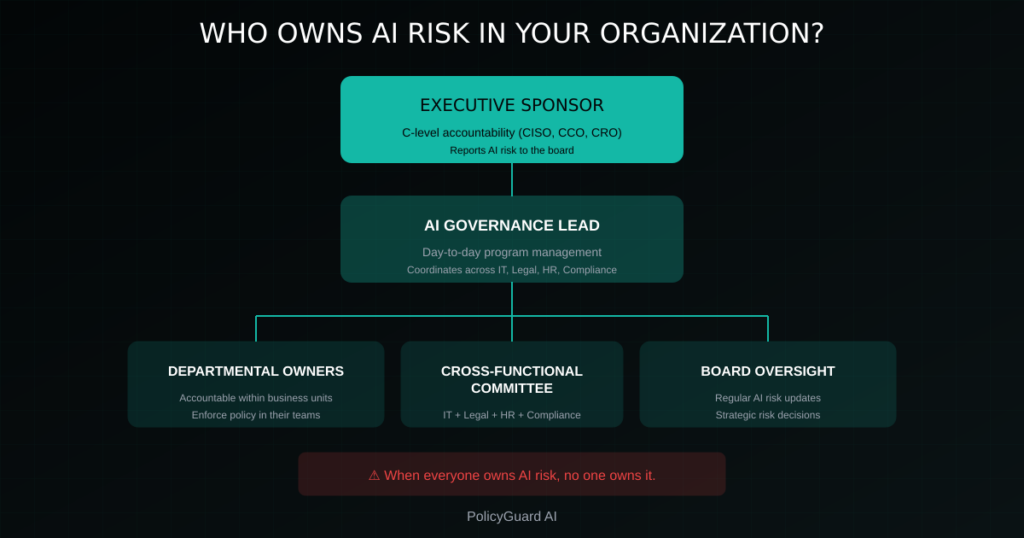

9. Who Owns AI Risk in Your Organization?

AI risk spans multiple domains: data, compliance, legal, HR, IT, operations. This creates a common problem: diffused ownership where no one is clearly accountable.

The Ownership Problem

When everyone owns AI risk, no one owns it.

Common failure modes:

- IT thinks Compliance owns it; Compliance thinks IT owns it

- Everyone assumes Legal will handle it

- Executives delegate to middle management without accountability

- No one has budget or authority to implement controls

Recommended Ownership Structure

Executive sponsor: A C-level executive accountable for AI governance

- Often the CISO, Chief Compliance Officer, or Chief Risk Officer

- Has authority to mandate controls across departments

- Reports AI risk status to the board

AI governance lead: Day-to-day responsibility for the AI risk program

- May be a dedicated role or added to an existing GRC function

- Coordinates across IT, Legal, HR, Compliance

- Manages policy, training, and monitoring

Departmental owners: Accountability within each business unit

- Ensure department-specific AI risks are identified and controlled

- Enforce policy compliance within their teams

- Report incidents and concerns upward

Cross-functional committee: Coordination body for AI governance

- Representatives from IT, Legal, HR, Compliance, key business units

- Reviews policy, addresses emerging risks, resolves conflicts

- Meets regularly (monthly or quarterly)

Board Oversight

The board should receive regular updates on AI risk:

- AI risk assessment summary

- Control effectiveness metrics

- Significant incidents and responses

- Regulatory developments and compliance status

- Emerging risks and planned responses

AI risk is a business risk. It belongs on the board agenda alongside financial, operational, and strategic risks.

10. Common Mistakes Leaders Make

Leaders new to AI risk management often make predictable mistakes. Awareness helps avoid them.

Mistake 1: Treating AI risk as purely technical

The error: Delegating AI risk entirely to IT or technical teams.

The problem: AI risk is a business risk with legal, compliance, HR, and operational dimensions. Technical controls alone cannot address it.

The fix: Ensure business leaders own AI risk with technical teams providing support, not the reverse.

Mistake 2: Banning AI tools

The error: Prohibiting AI usage to eliminate risk.

The problem: Bans do not stop usage; they drive it underground. Usage you could previously see becomes invisible shadow AI, and you lose visibility and governance capability.

The fix: Enable governed AI usage rather than banning. Make the compliant path easier than the non-compliant path.

For more on this, see Shadow AI: The Hidden Risk in Every Company.

Mistake 3: One-time compliance

The error: Creating a policy once and considering AI governance done.

The problem: AI tools, regulations, and risks evolve rapidly. A governance program that is not maintained becomes ineffective within months.

The fix: Build ongoing monitoring, regular review, and continuous improvement into your governance program.

Mistake 4: Policy without enforcement

The error: Having a written policy but no mechanism to ensure it is followed.

The problem: A policy that exists only on paper provides documentation but not risk reduction. Employees may not know it, understand it, or follow it.

The fix: Implement acknowledgment, training, and point-of-use enforcement. Build an audit trail that proves governance is active.

Mistake 5: Waiting for perfect information

The error: Delaying governance until you fully understand AI or have complete visibility into usage.

The problem: AI adoption is happening now. Every day without governance is a day of uncontrolled risk.

The fix: Start with basic controls (policy, acknowledgment, training) and improve iteratively. Imperfect governance now beats perfect governance later.

11. How PolicyGuard Supports AI Risk Management

PolicyGuard provides the infrastructure for each step of the AI risk management framework.

Identify

PolicyGuard’s browser extension detects when employees access 80+ AI tools, giving you visibility into AI usage across your organization, including shadow AI tools you may not know about.

Assess

Usage data helps you understand where AI exposure is highest, which departments need attention, and where to prioritize controls.

Control

- Policy controls: 19+ expert-curated policy templates covering AI acceptable use, data classification, and regulatory requirements (GDPR, EU AI Act, HIPAA, SOC 2, etc.)

- Training controls: Built-in training modules with quizzes that verify comprehension

- Technical controls: Browser extension that prompts policy acknowledgment at point of AI tool use

- Process controls: Workflow for policy updates, version control, and re-acknowledgment

Monitor

Real-time dashboard showing:

- Policy acknowledgment rates

- Training completion status

- AI tool usage across the organization

- Compliance gaps that need attention

Respond

- Complete audit trail for incident investigation

- One-click compliance reports for regulators or auditors

- Documentation of your governance program for due diligence

The Result

AI risk management without building infrastructure from scratch. PolicyGuard provides the foundation so you can focus on governance decisions rather than tool building.

Start your free 14-day trial and implement your AI risk management framework today.

12. Frequently Asked Questions

What is AI risk management? AI risk management is the discipline of identifying, assessing, controlling, monitoring, and responding to risks created by artificial intelligence usage in your organization. It applies standard risk management principles to the specific challenges AI creates: data exposure, compliance obligations, quality issues, intellectual property concerns, and reputational risks.

Who should own AI risk in an organization? AI risk should have clear executive sponsorship (often the CISO, Chief Compliance Officer, or Chief Risk Officer) with day-to-day management by a dedicated AI governance lead or GRC function. Departmental owners should be accountable for AI risks within their business units, with a cross-functional committee coordinating across the organization.

What are the main categories of AI risk? AI risk falls into five categories: data exposure risk (sensitive information shared with AI systems), compliance risk (failure to meet regulatory requirements), quality and accuracy risk (AI producing incorrect outputs), intellectual property risk (IP compromised or unclear ownership), and reputational risk (AI incidents damaging organizational reputation).

How do I assess AI risk severity? Assess each AI exposure on two dimensions: likelihood (how probable is a negative outcome) and impact (how severe would it be). High likelihood plus high impact equals critical risk requiring immediate attention. Prioritize controls based on severity, addressing critical and high risks before medium and low.

What controls reduce AI risk? Four types of controls address AI risk: policy controls (defining acceptable use), training controls (ensuring employees understand risks), technical controls (using technology to enforce policy), and process controls (building governance into workflows). Effective AI risk management uses all four in combination.

Why do AI bans not work? Banning AI tools does not eliminate usage; it drives usage underground. Shadow AI becomes invisible, and you lose all visibility and governance capability. Research shows 59% of employees already hide their AI usage. A ban converts visible shadow AI into invisible shadow AI, increasing rather than decreasing risk.

How often should AI risk assessments be updated? AI risk assessments should be reviewed at least quarterly, with additional reviews when significant changes occur: new AI tools adopted, new regulations enacted, significant incidents, or organizational changes. AI evolves rapidly; annual assessments are insufficient.

Related Resources

- The Complete Guide to AI Policy and Governance for Companies — The pillar guide covering all aspects of AI governance

- AI Acceptable Use Policy Template: A Complete Guide — How to create the foundational policy document

- AI Policy for Employees: What to Include and How to Enforce It — Detailed guidance on employee-facing AI policies

- Shadow AI: The Hidden Risk in Every Company — Understanding and governing unapproved AI tool usage

- AI Audit Trail: What It Is and Why Regulators Want One — Building audit-ready AI governance documentation

- EU AI Act Compliance: What Companies Need to Do — Regulatory requirements affecting AI governance