This guide is part of our Complete Guide to AI Policy and Governance for Companies, the central resource for everything you need to know about AI compliance in 2026.

The NIST AI Risk Management Framework (AI RMF) has become the de facto standard for AI governance in the United States and increasingly worldwide.

Released by the National Institute of Standards and Technology in January 2023, the AI RMF provides a structured approach to managing AI risks throughout the AI lifecycle. Unlike prescriptive regulations, it offers flexible guidance that organizations can adapt to their specific context, size, and risk tolerance.

But flexibility can be a double-edged sword. Many organizations read the framework, agree with its principles, and then struggle to translate it into operational reality.

This guide bridges that gap. We will walk through each component of the NIST AI RMF and show you exactly how to implement it in your organization, with practical steps, real examples, and clear milestones.

TABLE OF CONTENTS:

- What Is the NIST AI Risk Management Framework?

- Why NIST AI RMF Matters for Your Organization

- The Four Core Functions: Govern, Map, Measure, Manage

- Function 1: GOVERN – Establishing AI Governance

- Function 2: MAP – Understanding AI Context and Risks

- Function 3: MEASURE – Assessing and Analyzing AI Risks

- Function 4: MANAGE – Treating and Monitoring AI Risks

- Implementation Roadmap: Phased Approach

- NIST AI RMF and Other Frameworks (EU AI Act, ISO 42001)

- Common Implementation Challenges and Solutions

- How PolicyGuard Supports NIST AI RMF Implementation

- Frequently Asked Questions

1. What Is the NIST AI Risk Management Framework?

The NIST AI Risk Management Framework is a voluntary framework designed to help organizations manage risks associated with AI systems throughout their lifecycle.

Developed through extensive public consultation with industry, academia, civil society, and government, the AI RMF reflects consensus on best practices for trustworthy AI development and deployment.

Key Characteristics

Voluntary, not mandatory: The AI RMF is guidance, not regulation. Organizations adopt it because it represents best practice, not because they are legally required to. However, regulatory alignment is increasing, and the framework is often referenced in procurement requirements and industry standards.

Risk-based approach: The framework does not prescribe specific technical requirements. Instead, it provides a methodology for identifying, assessing, and managing AI risks based on your organization’s context and risk tolerance.

Lifecycle coverage: The AI RMF addresses risks across the entire AI lifecycle: design, development, deployment, operation, and decommissioning. This comprehensive scope ensures risks are managed from conception to retirement.

Flexible and scalable: The framework applies to organizations of any size, from startups to enterprises, and to AI systems of any complexity. Implementation scales to match your resources and risk profile.

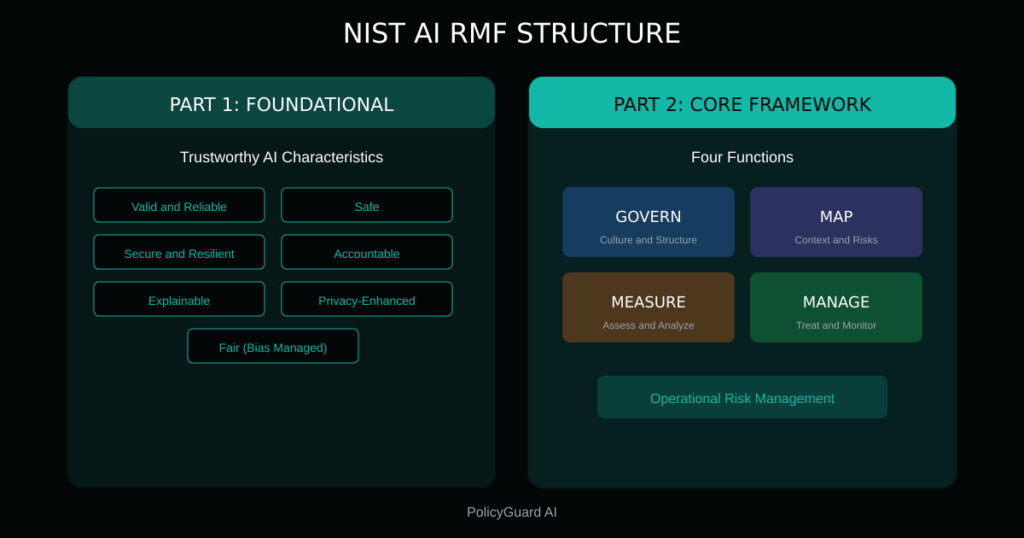

Framework Structure

The AI RMF consists of two main parts:

Part 1: Foundational Information. Explains key concepts, defines terminology, and establishes the characteristics of trustworthy AI systems: valid and reliable, safe, secure and resilient, accountable and transparent, explainable and interpretable, privacy-enhanced, and fair with harmful bias managed.

Part 2: Core Framework. Provides the operational structure for managing AI risks through four functions: Govern, Map, Measure, and Manage. Each function contains categories and subcategories that guide implementation.

2. Why NIST AI RMF Matters for Your Organization

Even though the AI RMF is voluntary, there are compelling reasons to implement it.

Regulatory Alignment

The NIST AI RMF aligns with emerging AI regulations worldwide:

United States:

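- Executive Order 14110 on Safe, Secure, and Trustworthy AI (October 2023) directed NIST to expand its AI guidance, including a generative AI companion to the AI RMF; the order was revoked in January 2025, but the resulting NIST guidance remains available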

- Federal agencies are increasingly requiring AI RMF alignment from vendors

- State-level AI legislation often points to NIST guidance

European Union:

- The EU AI Act’s risk-based approach parallels NIST AI RMF principles

- Organizations implementing NIST AI RMF are better positioned for EU AI Act compliance

- ISO 42001 (AI Management Systems) draws from similar concepts

International:

- OECD AI Principles align with NIST AI RMF characteristics

- G7 Hiroshima AI Process references similar frameworks

- Global enterprises use NIST AI RMF as a common baseline

Business Benefits

Beyond regulatory alignment, the AI RMF delivers practical business value:

Risk reduction: Systematic identification and management of AI risks reduces the likelihood of costly incidents, from biased decisions to data breaches to operational failures.

Stakeholder trust: Demonstrating AI governance builds trust with customers, partners, investors, and regulators. “We follow the NIST AI RMF” is a credible statement that carries weight.

Operational efficiency: A structured approach to AI governance reduces ad hoc decision-making, clarifies responsibilities, and creates repeatable processes.

Competitive advantage: As AI governance becomes a differentiator, organizations with mature frameworks can win contracts and partnerships that require evidence of AI governance.

The Cost of Not Implementing

Organizations without AI risk management face increasing exposure:

- Regulatory penalties as AI-specific laws come into force

- Reputational damage from AI incidents

- Lost business opportunities requiring governance proof

- Higher costs from reactive incident response vs. proactive risk management

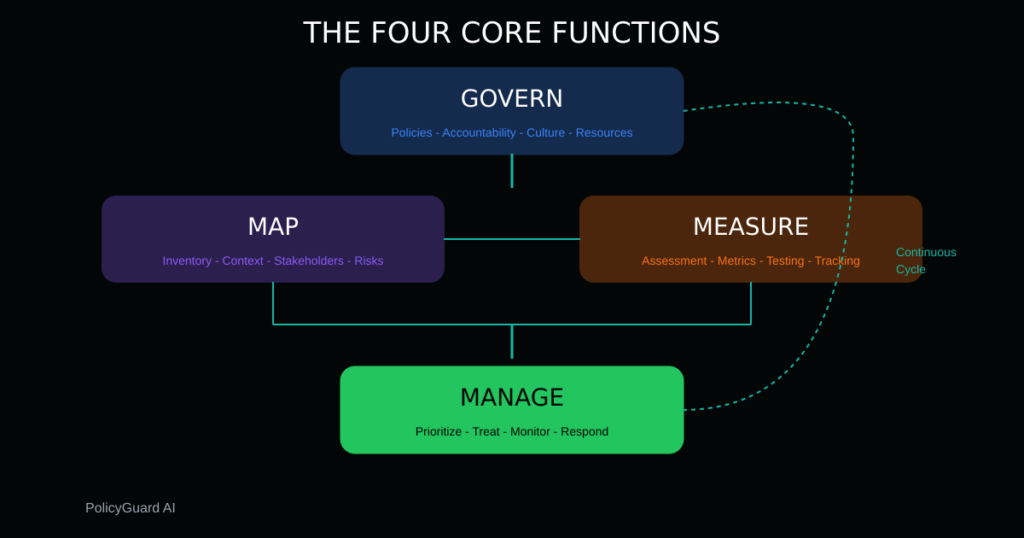

3. The Four Core Functions: Govern, Map, Measure, Manage

The NIST AI RMF organizes AI risk management into four interconnected functions.

GOVERN

Purpose: Establish the culture, structure, and processes for AI risk management.

Key question: Who is responsible for AI risks, and how do we make decisions?

Govern is the foundational function that enables all others. It establishes:

- Organizational commitment to trustworthy AI

- Roles, responsibilities, and accountability

- Policies and procedures for AI governance

- Resources and capabilities for risk management

Govern is not a one-time setup. It requires ongoing attention to maintain governance effectiveness as AI usage evolves.

MAP

Purpose: Understand the context in which AI systems operate and identify potential risks.

Key question: What AI systems do we have, and what risks do they create?

Map creates visibility into your AI landscape:

- Inventory of AI systems and use cases

- Understanding of how AI systems work and their limitations

- Identification of stakeholders affected by AI

- Cataloging of potential risks and impacts

Map is the discovery function. You cannot manage risks you do not know exist.

MEASURE

Purpose: Assess, analyze, and track identified AI risks.

Key question: How significant are our AI risks, and are they changing?

Measure quantifies and monitors risks:

- Assessment methodologies and metrics

- Testing and evaluation of AI systems

- Tracking of risk indicators over time

- Documentation of measurement results

Measure turns qualitative concerns into actionable data.

MANAGE

Purpose: Prioritize and act on AI risks based on assessment results.

Key question: What are we doing about our AI risks?

Manage is where action happens:

- Risk treatment decisions (accept, mitigate, transfer, avoid)

- Implementation of controls and safeguards

- Monitoring of treatment effectiveness

- Response to incidents and emerging risks

Manage closes the loop, ensuring risks are actually addressed, not just documented.

How the Functions Connect

The four functions are not sequential steps. They operate continuously and interact with each other:

- Govern enables and oversees the other three functions

- Map feeds risk information into Measure

- Measure results inform Manage decisions

- Manage outcomes update Map and may trigger Govern changes

This continuous cycle ensures AI risk management remains current as systems, contexts, and threats evolve.

4. Function 1: GOVERN – Establishing AI Governance

Govern creates the foundation for everything else. Without effective governance, Map, Measure, and Manage efforts will be inconsistent and unsustainable.

GOVERN Categories

The AI RMF organizes Govern into six categories:

GOVERN 1: Policies, processes, procedures, and practices. Documented governance artifacts that guide AI risk management.

GOVERN 2: Accountability structures. Clear assignment of roles, responsibilities, and decision-making authority.

GOVERN 3: Workforce diversity, equity, inclusion, and accessibility. Ensuring diverse perspectives in AI development and governance.

GOVERN 4: Organizational culture. Fostering a culture that values trustworthy AI and risk awareness.

GOVERN 5: Stakeholder engagement. Involving affected parties in AI governance decisions.

GOVERN 6: Third-party and supply chain risk. Policies and procedures that address AI risks and benefits arising from third-party software, data, and other supply chain issues.

Practical Implementation Steps

Step 1: Establish executive sponsorship

AI governance needs C-level commitment. Identify an executive sponsor (CISO, Chief Risk Officer, Chief AI Officer, or similar) who will:

- Champion AI governance across the organization

- Allocate resources and budget

- Remove organizational barriers

- Report to the board on AI risks

Step 2: Define governance structure

Create clear accountability:

| Role | Responsibility |
|---|---|
| Executive Sponsor | Overall accountability, board reporting |
| AI Governance Lead | Day-to-day program management |
| AI Risk Committee | Cross-functional oversight and decisions |
| System Owners | Risk management for specific AI systems |
| Users | Compliance with policies and procedures |

Step 3: Develop core policies

Document your AI governance expectations:

- AI Acceptable Use Policy: What is and is not permitted

- AI Risk Assessment Policy: How risks are identified and evaluated

- AI Development Standards: Requirements for building or procuring AI

- AI Incident Response Policy: How to handle AI-related incidents

For guidance on policy development, see our AI Acceptable Use Policy Template.

Step 4: Allocate resources

Governance without resources remains an aspiration. Ensure you have:

- Personnel with time dedicated to AI governance

- Budget for tools, training, and external expertise

- Executive attention on a regular cadence

Step 5: Integrate with enterprise risk management

AI risks should not be managed in isolation. Connect AI governance with:

- Enterprise risk management framework

- Information security program

- Compliance management system

- Internal audit function

GOVERN Deliverables

By the end of GOVERN implementation, you should have:

- Documented executive sponsorship and commitment

- Defined governance structure with clear roles

- Core AI policies approved and communicated

- Resource allocation for AI governance

- Integration with enterprise risk management

- Regular governance review cadence established

5. Function 2: MAP – Understanding AI Context and Risks

Map creates visibility into your AI landscape. It answers the question: “What AI do we have, and what could go wrong?”

MAP Categories

The AI RMF organizes Map into five categories:

MAP 1: Context establishment. Understanding the business context, intended uses, and operational environment for AI systems.

MAP 2: AI system categorization. Classifying AI systems based on their characteristics, capabilities, and risk profiles.

MAP 3: Capabilities, goals, benefits, and costs. Understanding what AI systems can do, how they are meant to be used, and their expected benefits and costs compared with appropriate benchmarks.

MAP 4: Component risks and benefits. Mapping risks and benefits for all components of an AI system, including third-party software and data.

MAP 5: Impact characterization. Understanding the potential positive and negative impacts of AI systems on individuals, groups, communities, organizations, and society.

Practical Implementation Steps

Step 1: Create an AI inventory

You cannot manage what you cannot see. Document all AI systems in your organization:

| Field | Description |
|---|---|
| System Name | Identifier for the AI system |
| Description | What the system does |
| Owner | Accountable individual or team |
| Type | Developed, procured, embedded, API |
| Status | Development, pilot, production, retired |
| Users | Who uses the system |
| Data | What data the system processes |
| Decisions | What decisions the system informs or makes |

Include:

- AI systems you developed

- AI features in procured software

- AI APIs and services you consume

- Employee use of general-purpose AI tools (ChatGPT, Claude, etc.)

For managing employee AI tool usage, see Shadow AI: The Hidden Risk in Every Company.
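If the inventory lives in code or a lightweight internal tool rather than a spreadsheet, the table's fields map naturally onto a typed record. The sketch below is a minimal Python illustration, not a NIST-prescribed format: the names `AISystemRecord`, `SystemType`, `Status`, and `missing_fields` are hypothetical, the allowed Type and Status values are assumed to be exactly those in the table, and the example systems are invented.

```python
from dataclasses import dataclass, field, fields
from enum import Enum


class SystemType(Enum):
    """How the organization obtained the system (Type column)."""
    DEVELOPED = "developed"
    PROCURED = "procured"
    EMBEDDED = "embedded"
    API = "api"


class Status(Enum):
    """Lifecycle stage (Status column)."""
    DEVELOPMENT = "development"
    PILOT = "pilot"
    PRODUCTION = "production"
    RETIRED = "retired"


@dataclass
class AISystemRecord:
    """One inventory row; field names mirror the inventory table (hypothetical schema)."""
    system_name: str
    description: str
    owner: str
    system_type: SystemType
    status: Status
    users: list[str] = field(default_factory=list)
    data: list[str] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)


def missing_fields(record: AISystemRecord) -> list[str]:
    """Names of fields left blank, in declaration order, so gaps can be followed up."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


inventory = [
    AISystemRecord(
        system_name="HR AI",
        description="Ranks job applicants for recruiter review",
        owner="Talent Acquisition",
        system_type=SystemType.PROCURED,
        status=Status.PRODUCTION,
        users=["Recruiters"],
        data=["Applicant CVs"],
        decisions=["Interview shortlist"],
    ),
    AISystemRecord(
        system_name="Forecasting AI",
        description="Projects quarterly revenue",
        owner="",  # no accountable owner recorded yet
        system_type=SystemType.DEVELOPED,
        status=Status.PILOT,
    ),
]

for rec in inventory:
    print(rec.system_name, missing_fields(rec))
```

Running it prints an empty list for the first record and `['owner', 'users', 'data', 'decisions']` for the second, which is the kind of gap (no accountable owner) worth closing before MEASURE begins.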

Step 2: Understand each system’s context

For each AI system, document:

- Business purpose: Why does this system exist? What problem does it solve?

- Intended use: How is the system supposed to be used?

- Operational environment: Where and how is the system deployed?

- User population: Who interacts with the system?

- Decision scope: What decisions does the system influence or make?

Step 3: Identify stakeholders

Map everyone affected by each AI system:

- Direct users: People who interact with the AI

- Subjects: People about whom AI makes decisions or predictions

- Beneficiaries: People who benefit from AI outputs

- Affected parties: People impacted by AI decisions, even indirectly

- Oversight bodies: Regulators, auditors, governance functions

Step 4: Catalog risks

For each AI system, identify potential risks across the trustworthy AI characteristics:

| Characteristic | Risk Questions |
|---|---|
| Valid & Reliable | Could the system produce incorrect outputs? Under what conditions? |
| Safe | Could the system cause physical, psychological, or financial harm? |
| Secure & Resilient | Could the system be attacked, manipulated, or fail under stress? |
| Accountable & Transparent | Can we explain who is responsible and how decisions are made? |
| Explainable & Interpretable | Can users understand why the system produced specific outputs? |
| Privacy-Enhanced | Does the system protect personal information appropriately? |
| Fair (Bias Managed) | Could the system discriminate against individuals or groups? |
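One way to keep every characteristic in view is to encode the table as a checklist that seeds a blank catalog for each system and reports what has not been assessed yet. This is a minimal sketch assuming a plain-dictionary layout; `CHARACTERISTIC_QUESTIONS`, `new_catalog`, and `unassessed` are illustrative names, and the HR AI finding is an invented example.

```python
# Trustworthy AI characteristics and guiding questions, copied from the table
CHARACTERISTIC_QUESTIONS = {
    "Valid & Reliable": "Could the system produce incorrect outputs? Under what conditions?",
    "Safe": "Could the system cause physical, psychological, or financial harm?",
    "Secure & Resilient": "Could the system be attacked, manipulated, or fail under stress?",
    "Accountable & Transparent": "Can we explain who is responsible and how decisions are made?",
    "Explainable & Interpretable": "Can users understand why the system produced specific outputs?",
    "Privacy-Enhanced": "Does the system protect personal information appropriately?",
    "Fair (Bias Managed)": "Could the system discriminate against individuals or groups?",
}


def new_catalog(system_name: str) -> dict:
    """Create a blank risk catalog: one entry per characteristic, nothing assessed yet."""
    return {
        "system": system_name,
        "entries": {
            characteristic: {"question": question, "risks": None}
            for characteristic, question in CHARACTERISTIC_QUESTIONS.items()
        },
    }


def unassessed(catalog: dict) -> list[str]:
    """Characteristics nobody has answered yet.

    None means 'not assessed'; an empty list means 'assessed, no risks found'.
    """
    return [name for name, entry in catalog["entries"].items() if entry["risks"] is None]


catalog = new_catalog("HR AI")
catalog["entries"]["Fair (Bias Managed)"]["risks"] = [
    "Historical hiring data may encode bias against some applicant groups"
]
catalog["entries"]["Safe"]["risks"] = []  # assessed: no material safety risk identified
print(len(unassessed(catalog)))  # 5
```

Using `None` for "not yet assessed" and an empty list for "assessed, nothing found" keeps those two states distinct, which matters when an auditor asks whether a characteristic was considered at all.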

Step 5: Document system limitations

AI systems have boundaries. Document:

- Conditions where performance degrades

- Data types or scenarios not covered by training

- Known failure modes

- Dependencies on external systems or data

- Assumptions that must hold for reliable operation

MAP Deliverables

By the end of MAP implementation, you should have:

- Complete AI system inventory

- Context documentation for each system

- Stakeholder maps for each system

- Risk catalogs identifying potential issues

- Limitation documentation for each system

- Process for updating MAP artifacts as systems change

6. Function 3: MEASURE – Assessing and Analyzing AI Risks

Measure quantifies risks identified in Map. It transforms qualitative concerns into data that supports decision-making.

MEASURE Categories

The AI RMF organizes Measure into four categories:

MEASURE 1: Measurement approaches. Methodologies for assessing AI risks, including metrics, testing, and evaluation methods.

MEASURE 2: AI system evaluation. Testing and assessment of AI systems against requirements and trustworthiness characteristics.

MEASURE 3: Risk tracking. Ongoing monitoring of risk indicators and system performance.

MEASURE 4: Feedback mechanisms. Gathering input from users, affected parties, and domain experts to assess whether measurement approaches are effective.

Practical Implementation Steps

Step 1: Define risk assessment methodology

Establish a consistent approach to evaluating AI risks:

Risk dimensions:

- Likelihood: How probable is the risk event?

- Impact: How severe would the consequences be?

- Velocity: How quickly could the risk materialize?

- Detectability: How easily can we identify when the risk occurs?

Risk scoring: Create a scoring system that enables comparison across risks:

| Score | Likelihood | Impact |
|---|---|---|
| 1 – Low | Unlikely to occur | Minimal consequences |
| 2 – Medium | May occur | Moderate consequences |
| 3 – High | Likely to occur | Significant consequences |
| 4 – Critical | Expected to occur | Severe consequences |

Risk rating: Combine likelihood and impact into an overall risk rating (a common approach multiplies the two scores) and tie each rating to a response window:

- Critical (address immediately)

- High (address within 30 days)

- Medium (address within 90 days)

- Low (monitor)
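The guide leaves the combination rule open. A common choice is to multiply the 1-4 likelihood and impact scores and band the product; the sketch below assumes that rule, with bands of 12-16 Critical, 8-11 High, 4-7 Medium, and 1-3 Low, and models "address immediately" as a zero-day window. The bands and the function names `risk_rating` and `response_due` are assumptions to tune, not NIST values.

```python
from datetime import date, timedelta
from typing import Optional

# Response windows from the rating list; None = monitor, no deadline.
# "Address immediately" is modelled as a 0-day window (assumption).
RESPONSE_DAYS = {"Critical": 0, "High": 30, "Medium": 90, "Low": None}


def risk_rating(likelihood: int, impact: int) -> str:
    """Combine 1-4 likelihood and impact scores into a rating.

    Assumed rule: score = likelihood * impact (1-16), banded as
    12-16 Critical, 8-11 High, 4-7 Medium, 1-3 Low.
    """
    for value in (likelihood, impact):
        if value not in (1, 2, 3, 4):
            raise ValueError(f"scores must be between 1 and 4, got {value}")
    score = likelihood * impact
    if score >= 12:
        return "Critical"
    if score >= 8:
        return "High"
    if score >= 4:
        return "Medium"
    return "Low"


def response_due(rating: str, assessed_on: date) -> Optional[date]:
    """Date by which the risk should be addressed, or None if it is only monitored."""
    days = RESPONSE_DAYS[rating]
    return None if days is None else assessed_on + timedelta(days=days)


rating = risk_rating(likelihood=3, impact=3)  # score 9
print(rating, response_due(rating, date(2026, 1, 15)))  # High 2026-02-14
```

Some organizations use a lookup matrix instead of a product so that, for example, any risk with Critical impact is rated at least High regardless of likelihood; only the banding function would change.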

For detailed risk assessment guidance, see AI Risk Management: A Framework for Non-Technical Leaders.

Step 2: Conduct system-level assessments

Evaluate each AI system against your risk methodology:

- Review system documentation and design

- Test system performance under various conditions

- Assess alignment with trustworthiness characteristics

- Identify gaps between intended and actual behavior

- Document assessment results and evidence

Step 3: Establish metrics and monitoring

Define metrics that track AI risk status:

System metrics:

- Accuracy, precision, recall (performance)

- Error rates by category

- Fairness metrics across demographic groups

- Response time and availability

- Incident frequency and severity

Governance metrics:

- Policy acknowledgment rates

- Training completion rates

- Risk assessment currency

- Control effectiveness

- Audit finding trends
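For a system that makes yes/no recommendations, the performance and fairness metrics listed above can be computed from a sample of decisions with known correct outcomes. The sketch below is pure Python and assumes binary labels; the selection-rate ratio is only one of many fairness metrics, and the 0.8 review threshold borrows the US "four-fifths" rule of thumb from employment selection guidance as an assumption, not a NIST AI RMF requirement. Function names and data are illustrative.

```python
from collections import defaultdict


def classification_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Accuracy, precision, and recall for binary outcomes (1 = positive decision)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs) if pairs else 0.0,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


def selection_rate_ratio(groups: list[str], y_pred: list[int]) -> float:
    """Lowest group selection rate divided by the highest (1.0 = parity)."""
    selected = defaultdict(int)
    total = defaultdict(int)
    for group, pred in zip(groups, y_pred):
        total[group] += 1
        selected[group] += pred
    rates = [selected[g] / total[g] for g in total]
    return min(rates) / max(rates) if rates and max(rates) > 0 else 1.0


# Illustrative hiring-screen data: 1 = advance to interview
y_true = [1, 0, 1, 1, 0, 1, 1, 0]  # outcome a reviewer judged correct
y_pred = [1, 0, 1, 1, 0, 1, 0, 0]  # what the system recommended
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

print(classification_metrics(y_true, y_pred))
# {'accuracy': 0.875, 'precision': 1.0, 'recall': 0.8}
ratio = selection_rate_ratio(groups, y_pred)
print(round(ratio, 2))  # 0.33
if ratio < 0.8:  # assumed review threshold
    print("Flag for fairness review")
```

Here the system looks accurate overall while selecting group A three times as often as group B, which is why the list pairs performance metrics with fairness metrics rather than relying on accuracy alone.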

Step 4: Implement feedback mechanisms

Create channels for stakeholder input:

- User feedback on AI system behavior

- Incident reporting procedures

- Regular surveys of affected parties

- Review of complaints and appeals

- External research and regulatory guidance

Step 5: Document and communicate results

Measurement is only valuable if results are communicated:

- Regular risk reports to governance committee

- Dashboard visibility for key metrics

- Escalation procedures for critical findings

- Documentation for audit purposes

MEASURE Deliverables

By the end of MEASURE implementation, you should have:

- Documented risk assessment methodology

- Completed risk assessments for all AI systems

- Defined metrics and monitoring approach

- Operational feedback mechanisms

- Regular risk reporting cadence

- Risk documentation supporting audit needs

7. Function 4: MANAGE – Treating and Monitoring AI Risks

Manage is where action happens. It translates risk assessment into risk treatment and ensures treatments remain effective.

MANAGE Categories

The AI RMF organizes Manage into four categories:

MANAGE 1: Risk prioritization. Determining which risks to address first based on assessment results, and responding to them.

MANAGE 2: Risk treatment. Selecting and implementing strategies to address prioritized risks.

MANAGE 3: Third-party risk. Managing AI risks and benefits that come from third-party entities, such as vendors and pre-trained models.

MANAGE 4: Treatment monitoring and communication. Documenting and regularly monitoring risk treatments, response and recovery plans, and communication plans.

Practical Implementation Steps

Step 1: Prioritize risks

Not all risks can be addressed simultaneously. Prioritize based on:

- Risk rating (from MEASURE)

- Regulatory requirements

- Stakeholder impact

- Treatment feasibility

- Resource availability

Create a prioritized risk register that guides action:

| Rank | Risk | System | Rating | Treatment Status |
|---|---|---|---|---|
| 1 | PII exposure via AI chat | ChatGPT | Critical | In progress |
| 2 | Bias in hiring recommendations | HR AI | High | Planned |
| 3 | Inaccurate financial projections | Forecasting AI | High | Planned |
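The example register can be reproduced from the prioritization criteria with a simple sort. This sketch assumes rating is the primary key, regulatory exposure breaks ties, and a rough count of affected people stands in for stakeholder impact; treatment feasibility and resource availability are left to the committee's judgment. `RegisterEntry`, `prioritize`, and the `people_affected` figures are hypothetical.

```python
from dataclasses import dataclass

RATING_ORDER = {"Critical": 0, "High": 1, "Medium": 2, "Low": 3}


@dataclass
class RegisterEntry:
    risk: str
    system: str
    rating: str            # from MEASURE: Critical / High / Medium / Low
    regulatory: bool       # is a legal or regulatory requirement involved?
    people_affected: int   # rough stakeholder-impact proxy (assumption)
    treatment_status: str = "Planned"


def prioritize(entries: list[RegisterEntry]) -> list[RegisterEntry]:
    """Order by rating, then regulatory exposure, then number of people affected."""
    return sorted(
        entries,
        key=lambda e: (RATING_ORDER[e.rating], not e.regulatory, -e.people_affected),
    )


register = prioritize([
    RegisterEntry("Inaccurate financial projections", "Forecasting AI", "High", False, 40),
    RegisterEntry("Bias in hiring recommendations", "HR AI", "High", True, 5000),
    RegisterEntry("PII exposure via AI chat", "ChatGPT", "Critical", True, 1200, "In progress"),
])

for rank, e in enumerate(register, start=1):
    print(rank, e.risk, e.system, e.rating, e.treatment_status)
```

The two High risks are separated only by regulatory exposure: hiring decisions fall under employment law, so that risk ranks ahead of the forecasting issue.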

Step 2: Select treatment strategies

For each prioritized risk, choose a treatment approach:

Accept: Acknowledge the risk and continue without additional controls. Appropriate for low risks where treatment cost exceeds benefit.

Mitigate: Implement controls to reduce likelihood or impact. Most common approach for significant risks.

Transfer: Shift risk to another party through insurance, contracts, or outsourcing. Does not eliminate risk but changes who bears it.

Avoid: Eliminate the risk by discontinuing the activity. Appropriate when risk exceeds all potential benefits.
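The four options can be expressed as a default suggestion that the risk committee confirms or overrides. The sketch below is one hypothetical rule set, assuming rough annual estimates (in a single currency) of expected loss, mitigation cost, and business benefit; `suggest_treatment`, `Treatment`, and the decision order are illustrations, not part of the NIST AI RMF.

```python
from enum import Enum


class Treatment(Enum):
    ACCEPT = "accept"
    MITIGATE = "mitigate"
    TRANSFER = "transfer"
    AVOID = "avoid"


def suggest_treatment(rating: str, expected_loss: float, mitigation_cost: float,
                      benefit: float, transfer_available: bool) -> Treatment:
    """Suggest a default treatment; a human decision-maker has the final say."""
    if expected_loss >= benefit:
        # The risk outweighs what the activity delivers: stop doing it.
        return Treatment.AVOID
    if rating == "Low" and mitigation_cost >= expected_loss:
        # Controls would cost more than the loss they prevent.
        return Treatment.ACCEPT
    if transfer_available and mitigation_cost >= expected_loss:
        # Controls are uneconomic but insurance or contract terms can shift the cost.
        return Treatment.TRANSFER
    # Default for significant risks; the committee may still accept with sign-off.
    return Treatment.MITIGATE


examples = [
    ("Critical", 500_000, 50_000, 2_000_000, True),
    ("Low", 1_000, 5_000, 100_000, False),
    ("High", 200_000, 150_000, 100_000, True),
    ("Medium", 20_000, 60_000, 500_000, True),
]
for args in examples:
    print(args[0], suggest_treatment(*args).value)
```

Whatever the suggestion, the decision and its rationale belong in the risk register, and a transferred risk stays on the register, since transfer changes who bears the cost rather than removing the risk.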

Step 3: Implement controls

For risks being mitigated, implement appropriate controls:

Technical controls:

- Access restrictions

- Data masking or anonymization

- Model constraints and guardrails

- Monitoring and alerting

- Automated testing

Procedural controls:

- Human review requirements

- Approval workflows

- Escalation procedures

- Incident response plans

- Regular audits

Policy controls:

- Acceptable use policies

- Data handling requirements

- Output review mandates

- Training requirements

- Accountability assignments

For point-of-use policy enforcement, see AI Audit Trail: What It Is and Why Regulators Want One.

Step 4: Monitor treatment effectiveness

Implementing controls is not the end. Verify they work:

- Test controls regularly

- Track control metrics

- Review incidents for control failures

- Assess controls against evolving threats

- Update controls based on findings

Step 5: Maintain risk communication

Keep stakeholders informed:

- Regular reports to governance committee

- Updates to system owners and users

- Notifications when risk status changes

- Documentation for regulatory inquiries

- Transparency with affected parties where appropriate

MANAGE Deliverables

By the end of MANAGE implementation, you should have:

- Prioritized risk register

- Documented treatment decisions for each risk

- Implemented controls for mitigated risks

- Control monitoring and testing procedures

- Regular risk communication cadence

- Incident response capability

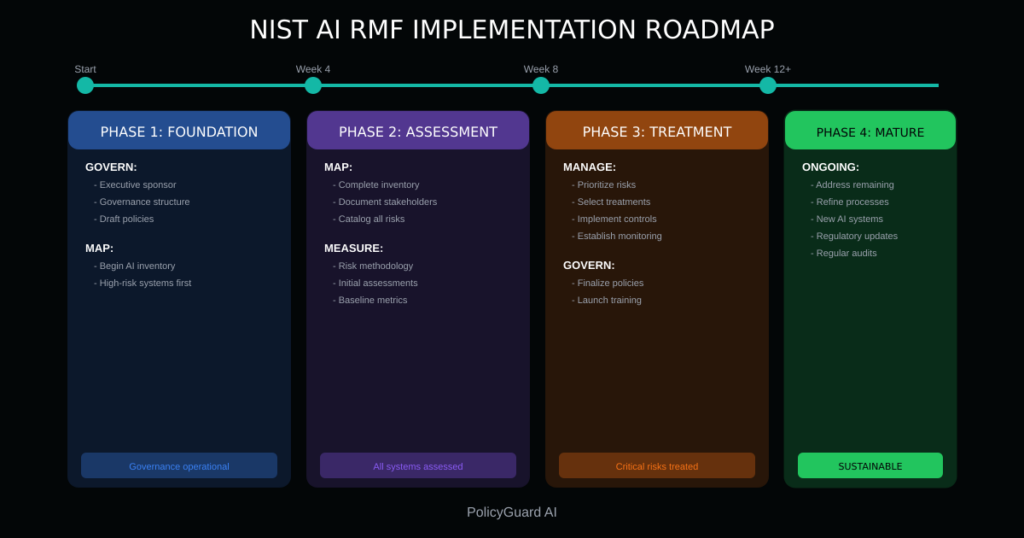

8. Implementation Roadmap: Phased Approach

Implementing the full NIST AI RMF takes time. A phased approach makes the effort manageable and delivers value incrementally.

Phase 1: Foundation (Weeks 1-4)

Focus: Establish governance and initial visibility

GOVERN activities:

- Identify executive sponsor

- Define governance structure

- Draft core policies

- Establish governance committee

MAP activities:

- Begin AI system inventory

- Focus on highest-risk systems first

- Document business context

Milestone: Governance structure operational, initial inventory complete

Phase 2: Assessment (Weeks 5-8)

Focus: Understand risks across AI portfolio

MAP activities:

- Complete AI inventory

- Document stakeholders and impacts

- Catalog risks for each system

MEASURE activities:

- Define risk assessment methodology

- Conduct initial risk assessments

- Establish baseline metrics

Milestone: All AI systems assessed, risk ratings assigned

Phase 3: Treatment (Weeks 9-12)

Focus: Address highest-priority risks

MANAGE activities:

- Prioritize risks based on assessments

- Select treatment strategies

- Implement controls for critical and high risks

- Establish monitoring for key metrics

GOVERN activities:

- Finalize and approve policies

- Communicate policies to organization

- Launch training program

Milestone: Critical risks treated, policies operational

Phase 4: Maturation (Ongoing)

Focus: Continuous improvement and expansion

All functions:

- Address remaining medium and low risks

- Refine processes based on experience

- Expand coverage to new AI systems

- Update for regulatory changes

- Conduct regular reviews and audits

Milestone: Mature, sustainable AI risk management program

Timeline Considerations

The 12-week timeline assumes:

- Dedicated resources for implementation

- Moderate AI portfolio complexity

- Existing enterprise risk management foundation

- Executive support and organizational readiness

Adjust timeline based on your context:

- Larger AI portfolios may require longer MAP phase

- Less mature organizations may need extended GOVERN phase

- Highly regulated industries may require more rigorous MEASURE phase

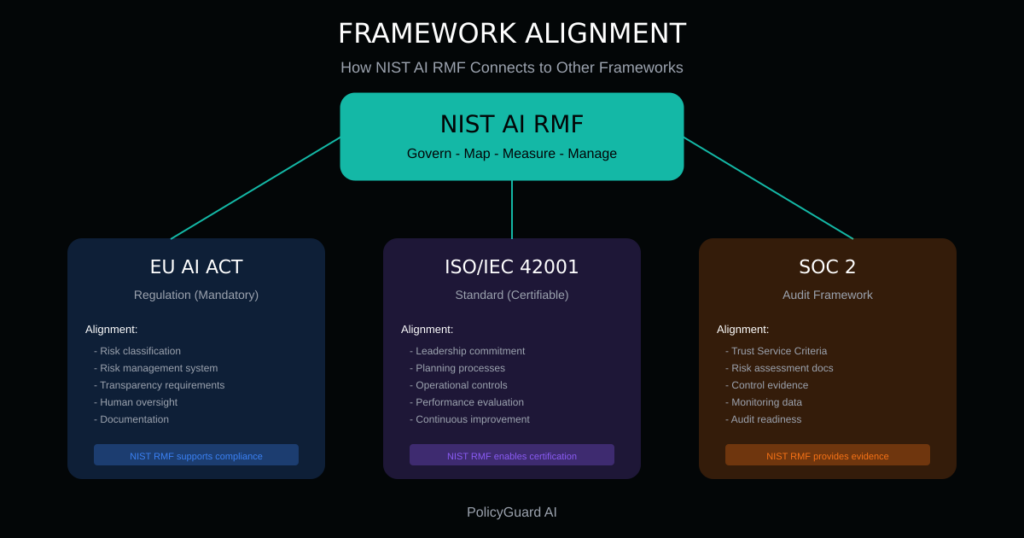

9. NIST AI RMF and Other Frameworks

The NIST AI RMF does not exist in isolation. It relates to and can support compliance with other AI governance frameworks.

EU AI Act

The EU AI Act is a regulation, not voluntary guidance. However, NIST AI RMF implementation positions you well for EU AI Act compliance.

Alignment areas:

| EU AI Act Requirement | NIST AI RMF Support |
|---|---|
| Risk classification | MAP function categorizes systems by risk |
| Risk management system | All four functions together |
| Data governance | MAP and MEASURE address data quality |
| Transparency | GOVERN policies, MAP documentation |
| Human oversight | MANAGE controls, GOVERN accountability |
| Accuracy and robustness | MEASURE testing and monitoring |
| Record-keeping | All functions generate documentation |

Gaps to address:

- EU AI Act has specific requirements for high-risk systems

- Conformity assessments may require additional documentation

- Prohibited practices must be explicitly addressed

For EU AI Act compliance details, see EU AI Act Compliance: What Companies Need to Do.

ISO/IEC 42001

ISO 42001 is an international standard for AI management systems. It takes a management system approach (like ISO 27001 for information security) to AI governance.

Relationship to NIST AI RMF:

- Both are risk-based approaches

- ISO 42001 provides certifiable requirements

- NIST AI RMF provides implementation guidance

- Organizations often use NIST AI RMF to implement ISO 42001

Alignment areas:

| ISO 42001 Element | NIST AI RMF Support |
|---|---|
| Leadership and commitment | GOVERN function |
| Planning | MAP and MEASURE functions |
| Support | GOVERN resources and competence |
| Operation | MANAGE function |
| Performance evaluation | MEASURE monitoring |
| Improvement | Continuous cycle across functions |

SOC 2

SOC 2 is an AICPA attestation framework for reporting on a service organization’s controls. AI governance increasingly appears in SOC 2 assessments.

NIST AI RMF supports SOC 2:

- Trust Services Criteria alignment through GOVERN policies

- Risk assessment documentation from MAP and MEASURE

- Control evidence from MANAGE

- Monitoring data from MEASURE

Practical Integration

Rather than implementing multiple frameworks separately:

- Use NIST AI RMF as your operational foundation

- Map NIST AI RMF activities to other framework requirements

- Generate documentation that serves multiple purposes

- Identify gaps and address them specifically

This integrated approach reduces duplication and ensures consistency.
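Mapping activities to other frameworks and spotting gaps can start as a small crosswalk table. The sketch below uses a few rows abbreviated from the EU AI Act and ISO 42001 tables above and treats a requirement as covered only when every NIST AI RMF function it relies on is in place; `CROSSWALK` and `find_gaps` are hypothetical names, and real crosswalks usually track individual activities and evidence rather than whole functions.

```python
# Hypothetical crosswalk: external requirement -> NIST AI RMF functions whose
# outputs serve as evidence (rows abbreviated from the tables above).
CROSSWALK: dict[str, set[str]] = {
    "EU AI Act: Risk management system": {"GOVERN", "MAP", "MEASURE", "MANAGE"},
    "EU AI Act: Human oversight": {"MANAGE", "GOVERN"},
    "EU AI Act: Accuracy and robustness": {"MEASURE"},
    "EU AI Act: Conformity assessment": set(),  # listed above as a gap to address
    "ISO 42001: Leadership and commitment": {"GOVERN"},
    "ISO 42001: Performance evaluation": {"MEASURE"},
}


def find_gaps(crosswalk: dict[str, set[str]], in_place: set[str]) -> dict[str, list[str]]:
    """Requirements not yet fully supported, with the missing functions."""
    gaps: dict[str, list[str]] = {}
    for requirement, functions in crosswalk.items():
        if not functions:
            gaps[requirement] = ["no NIST AI RMF mapping: address separately"]
        elif functions - in_place:
            gaps[requirement] = sorted(functions - in_place)
    return gaps


# Example: after Phase 2 of the roadmap, GOVERN and MAP are operational.
for requirement, missing in find_gaps(CROSSWALK, {"GOVERN", "MAP"}).items():
    print(f"{requirement}: {', '.join(missing)}")
```

Requirements with no mapping at all, such as conformity assessment, never disappear from the output, which matches the point that some EU AI Act obligations must be addressed specifically rather than inherited from NIST AI RMF work.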

10. Common Implementation Challenges and Solutions

Organizations implementing NIST AI RMF encounter predictable challenges. Awareness helps you navigate them.

Challenge 1: “We don’t know what AI we have”

Problem: Shadow AI makes inventory difficult. Employees use AI tools the organization does not know about.

Solution:

- Technical discovery (network logs, browser monitoring)

- Employee surveys and department interviews

- Procurement and expense report review

- Amnesty period for self-reporting

- Ongoing monitoring for new tools

See Shadow AI: The Hidden Risk in Every Company for detailed guidance.
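Technical discovery can begin with something as simple as matching proxy or DNS log entries against known AI tool domains. This sketch assumes a hypothetical log format of `<timestamp> <user> <hostname>` per line; the domain list is illustrative and incomplete, and `APPROVED_TOOLS`, `match_tool`, and `find_shadow_ai` are invented names. Real proxy logs differ by vendor and need their own parser.

```python
from collections import Counter
from typing import Optional

# Illustrative, incomplete list of AI tool domains; maintain your own.
AI_TOOL_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "perplexity.ai": "Perplexity",
}
APPROVED_TOOLS = {"ChatGPT"}  # hypothetical: tools already in the AI inventory


def match_tool(host: str) -> Optional[str]:
    """Map a hostname to a known AI tool, including subdomains (e.g. www.perplexity.ai)."""
    host = host.lower().rstrip(".")
    for domain, tool in AI_TOOL_DOMAINS.items():
        if host == domain or host.endswith("." + domain):
            return tool
    return None


def find_shadow_ai(log_lines: list[str]) -> Counter:
    """Count (user, tool) pairs for AI tools that are not in the approved inventory."""
    hits: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip malformed lines rather than guessing
        _, user, host = parts
        tool = match_tool(host)
        if tool and tool not in APPROVED_TOOLS:
            hits[(user, tool)] += 1
    return hits


logs = [
    "2026-01-15T09:12:03Z alice chatgpt.com",
    "2026-01-15T09:15:41Z bob claude.ai",
    "2026-01-15T10:02:17Z bob claude.ai",
    "2026-01-15T10:30:00Z carol intranet.example.com",
    "2026-01-15T11:05:09Z dave www.perplexity.ai",
]
print(find_shadow_ai(logs))
```

The output feeds the inventory rather than replacing it: each unapproved (user, tool) pair is a lead for the surveys, interviews, and amnesty period listed above.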

Challenge 2: “We don’t have AI expertise”

Problem: AI risk assessment seems to require deep technical knowledge.

Solution:

- Focus on business risks, not technical details

- Use questionnaires and frameworks that translate technical issues

- Engage vendors for information about their systems

- Build expertise incrementally

- Consider external expertise for complex assessments

Remember: AI risk management is a business discipline, not a technical one.

Challenge 3: “We have too many AI systems”

Problem: Large AI portfolios make comprehensive assessment overwhelming.

Solution:

- Prioritize by risk (start with highest-risk systems)

- Group similar systems for efficient assessment

- Use tiered assessment depth (detailed for high-risk, lighter for low-risk)

- Build assessment capacity over time

- Automate where possible

Challenge 4: “We don’t have resources”

Problem: AI governance competes with other priorities for limited resources.

Solution:

- Start small and demonstrate value

- Integrate with existing risk management

- Use tools that reduce manual effort

- Focus on highest-impact activities

- Build the business case with incident data and regulatory trends

Challenge 5: “The framework is too abstract”

Problem: NIST AI RMF guidance is principles-based, not prescriptive.

Solution:

- Use this guide and similar resources for concrete steps

- Start with templates and adapt

- Connect with peers implementing the framework

- Focus on outcomes, not perfect compliance

- Iterate and improve over time

Challenge 6: “We can’t keep up with changes”

Problem: AI tools, regulations, and risks evolve rapidly.

Solution:

- Build review cycles into your framework

- Monitor regulatory developments

- Participate in industry groups

- Design flexible processes

- Accept that governance is ongoing, not a project

11. How PolicyGuard Supports NIST AI RMF Implementation

PolicyGuard provides infrastructure that accelerates NIST AI RMF implementation and reduces ongoing effort.

GOVERN Support

Policy foundation: PolicyGuard includes 19+ expert-curated policy templates aligned with NIST AI RMF requirements:

- AI Acceptable Use Policy

- Data Classification for AI

- AI Risk Assessment Policy

- AI Incident Response Policy

Policies are written by compliance professionals, not generated by AI, ensuring accuracy and defensibility.

Accountability documentation: Policy acknowledgment creates documented evidence of governance communication. Every employee’s acknowledgment is timestamped and logged.

Training integration: Built-in training modules support the GOVERN requirement for workforce competence, with completion tracking and verification.

MAP Support

AI tool visibility: PolicyGuard’s browser extension detects when employees access 80+ AI tools, creating visibility into AI usage across your organization, including shadow AI.

Usage documentation: Enforcement logs show which AI tools are used, by whom, and when, supporting AI inventory and context understanding.

MEASURE Support

Compliance metrics: Real-time dashboard tracks:

- Policy acknowledgment rates

- Training completion status

- Enforcement activity

- Compliance gaps

These metrics support MEASURE’s requirement for ongoing monitoring.

Risk indicators: Usage patterns can indicate risk concentrations (departments with heavy AI use, tools with high data sensitivity) that inform risk assessment.

MANAGE Support

Control implementation: Point-of-use policy enforcement is a control that addresses multiple risks:

- Ensures employees are aware of policies before using AI

- Creates accountability for policy compliance

- Generates audit evidence automatically

Audit readiness: One-click reports provide documentation for:

- Regulatory inquiries

- Internal audits

- Customer assessments

- Board reporting

The Result

PolicyGuard does not replace NIST AI RMF implementation. It provides the operational infrastructure that makes implementation faster, more consistent, and more sustainable.

Start your free 14-day trial and accelerate your NIST AI RMF implementation.

Frequently Asked Questions

What is the NIST AI Risk Management Framework? The NIST AI RMF is a voluntary framework developed by the National Institute of Standards and Technology to help organizations manage risks associated with AI systems. It provides a structured approach through four functions: Govern, Map, Measure, and Manage. Released in January 2023, it has become a leading standard for AI governance in the United States and internationally.

Is the NIST AI RMF mandatory? The NIST AI RMF is voluntary guidance, not a legal requirement. However, it is increasingly referenced in federal procurement requirements, industry standards, and state-level legislation. Organizations adopt it because it represents best practice and supports compliance with emerging AI regulations.

How does NIST AI RMF relate to the EU AI Act? The NIST AI RMF and EU AI Act share a risk-based approach to AI governance. Implementing NIST AI RMF positions organizations well for EU AI Act compliance, though the EU AI Act has specific requirements (particularly for high-risk systems) that go beyond NIST guidance. Organizations subject to both should map NIST AI RMF implementation to EU AI Act requirements.

What are the four functions of the NIST AI RMF? The four functions are: GOVERN (establishing governance structure, policies, and culture), MAP (understanding AI context, systems, and risks), MEASURE (assessing and monitoring risks), and MANAGE (treating risks and maintaining controls). These functions operate continuously and interact with each other throughout the AI lifecycle.

How long does it take to implement NIST AI RMF? A phased implementation can achieve foundational governance in 12 weeks: foundation (weeks 1-4), assessment (weeks 5-8), and treatment (weeks 9-12). Full maturation is ongoing. Timeline varies based on AI portfolio size, organizational readiness, and available resources.

Do I need technical AI expertise to implement NIST AI RMF? No. AI risk management is a business discipline. While technical input is valuable for specific assessments, the framework can be implemented by risk, compliance, and governance professionals. Focus on business risks and use questionnaires and frameworks that translate technical issues into business terms.

How does NIST AI RMF work with ISO 42001? ISO 42001 is an international standard for AI management systems that provides certifiable requirements. NIST AI RMF provides implementation guidance that supports ISO 42001 compliance. Organizations often use NIST AI RMF as the operational foundation for achieving ISO 42001 certification.

Related Resources

- The Complete Guide to AI Policy and Governance for Companies — The pillar guide covering all aspects of AI governance

- Building an AI Compliance Framework: Step-by-Step Guide — Comprehensive framework implementation guide

- AI Risk Management: A Framework for Non-Technical Leaders — Risk assessment and prioritization

- AI Acceptable Use Policy Template: A Complete Guide — Policy development guidance

- Shadow AI: The Hidden Risk in Every Company — Managing unapproved AI tool usage

- AI Audit Trail: What It Is and Why Regulators Want One — Audit documentation requirements

- EU AI Act Compliance: What Companies Need to Do — European regulatory requirements