PolicyGuard AI is an AI compliance software platform that helps companies enforce AI usage policies across their workforce using expert-curated templates, automatic employee training, browser extension enforcement, and audit-ready compliance reports. This guide covers everything you need to know about AI policy and governance for companies in 2026.

Your company almost certainly has employees using AI tools right now. ChatGPT, Claude, Gemini, Copilot, Midjourney, Perplexity. The question is not whether AI is being used. The question is whether you can prove your AI usage policy is being followed when an auditor, regulator, or client asks.

The sections below explain what AI governance is, why it matters, which regulations require it, what your AI policy should include, and how to enforce it, train employees, and prove compliance. Whether you are starting from scratch or tightening existing policies, this is the resource you need.

TABLE OF CONTENTS:

- What Is AI Governance and Why Does It Matter?

- The Real Cost of Getting AI Governance Wrong

- What Regulations Require AI Governance?

- 12 Essential Sections Every AI Policy Must Include

- How to Enforce Your AI Policy (Not Just Publish It)

- Training Employees on AI Policy Compliance

- Building an AI Audit Trail That Regulators Accept

- Shadow AI: The Risk You Cannot See

- AI Governance Frameworks: Choosing the Right Approach

- No AI Inside: Why Deterministic Enforcement Matters

- Getting Started: From Zero to Audit-Ready in 5 Minutes

- Frequently Asked Questions

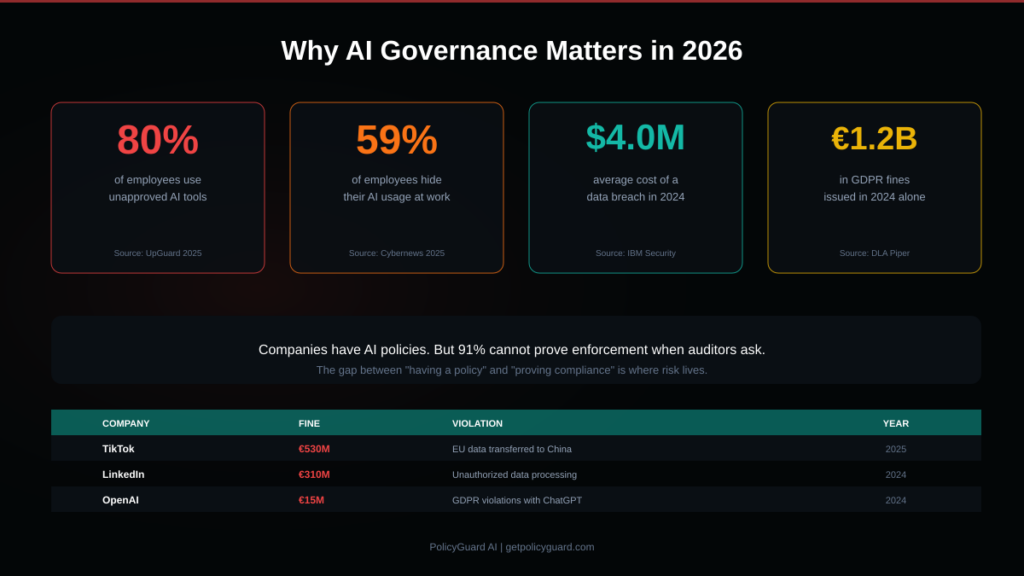

Alt text: Statistics showing why AI governance matters: 80% of employees use unapproved AI tools, 59% hide AI usage, $4.0M average data breach cost, and 1.2 billion euros in GDPR fines in 2024

1. What Is AI Governance and Why Does It Matter?

AI governance is the system of policies, processes, and controls that defines how your organization uses artificial intelligence tools. It answers three fundamental questions: what AI tools are employees allowed to use, what data can and cannot be shared with those tools, and how do you prove compliance when someone asks.

This is not a theoretical exercise. According to UpGuard’s 2025 research, 80% of employees are already using AI tools that their employer has not approved. Cybernews found that 59% of employees actively hide their AI usage from their employer. Your employees are using AI whether you have a policy or not. The difference is whether you have documented, provable governance in place.

AI governance matters for three practical reasons.

First, regulatory pressure is accelerating. The EU AI Act entered into force in August 2024, and its obligations have been phasing in since 2025. GDPR authorities issued over 1.2 billion euros in fines in 2024 alone, with AI-related violations becoming a specific focus. In the US, the NIST AI Risk Management Framework provides a structured approach that auditors increasingly reference. Companies without documented AI governance are exposed.

Second, data leakage through AI tools is a real and growing risk. When an employee pastes customer data, source code, financial projections, or legal documents into ChatGPT, that data has left your controlled environment. Without a policy defining what can and cannot be shared, and without enforcement ensuring the policy is followed, you have no protection against this risk.

Third, clients and partners are asking. If your company handles data for other organizations, those organizations are increasingly asking for evidence of AI governance as part of vendor assessments, security questionnaires, and contract negotiations. Having a documented, enforced AI policy is becoming a competitive requirement.

Related guide: AI Risk Management: A Framework for Non-Technical Leaders

2. The Real Cost of Getting AI Governance Wrong

The financial consequences of AI governance failures are not theoretical. Here are real fines from real companies:

- TikTok was fined 530 million euros in 2025 for transferring EU user data to China

- LinkedIn was fined 310 million euros in 2024 for unauthorized data processing

- Uber was fined 290 million euros in 2024 for transferring driver data to the US

- OpenAI was fined 15 million euros in 2024 for GDPR violations related to ChatGPT

- Clearview AI has been fined over 90 million euros across multiple jurisdictions for facial recognition data scraping

Beyond fines, the average cost of a data breach reached $4.0 million in 2024 according to IBM Security, with shadow AI usage adding an estimated $200,000 premium to that cost.

The pattern across all of these cases is the same: organizations failed to document and prove how data was being processed. When regulators investigated, there was no evidence trail showing that policies were communicated, acknowledged, and enforced.

This is exactly the gap that AI governance closes. Not by preventing AI usage entirely, but by creating documented proof that every employee knows the rules and has acknowledged them.

Alt text: Overview of AI regulations by region showing EU regulations like the EU AI Act and GDPR, US regulations like NIST AI RMF and CCPA, and global standards like ISO 42001 and SOC 2

3. What Regulations Require AI Governance?

The regulatory landscape for AI is fragmented across jurisdictions, but every major framework requires the same fundamental thing: documented evidence that your organization governs AI usage responsibly.

European Union

The EU AI Act is the most comprehensive AI-specific regulation in the world. It classifies AI systems by risk level (unacceptable, high, limited, minimal) and imposes obligations that scale with that risk. For most companies, the key requirements are transparency obligations, human oversight requirements, and documentation of AI usage in the workplace. GDPR adds requirements around personal data processing in AI tools, particularly Articles 5 (data processing principles), 13 (information to data subjects), 22 (automated decision-making), and 35 (data protection impact assessments).

Deep dive: EU AI Act Compliance: What Companies Need to Do in 2026

United States

The US does not have a single federal AI law, but the regulatory landscape is dense. The NIST AI Risk Management Framework provides a voluntary but increasingly referenced structure with four core functions: Govern, Map, Measure, and Manage. State-level regulations are moving fast: California’s CCPA/CPRA covers AI data processing, New York City’s Local Law 144 requires bias audits for AI in hiring, and Colorado, Illinois, and other states have enacted AI-specific requirements. For companies handling health data, HIPAA applies to any AI tool that processes protected health information. For financial services, SEC, FINRA, and OCC guidance applies.

Deep dive: NIST AI Risk Management Framework: A Practical Implementation Guide

Global Standards

ISO/IEC 42001 provides an international standard for AI management systems. SOC 2 audits against the Trust Services Criteria increasingly examine AI-related controls. The OECD AI Principles provide a high-level international framework that many national regulations reference. For organizations operating across multiple jurisdictions, these global standards provide a common baseline.

The common thread: Regardless of which regulations apply to your organization, they all require documented policies, employee training, ongoing monitoring, and auditable evidence of compliance. The specific provisions differ, but the fundamental requirement is the same: prove that your AI policy is being followed.

Browse templates for every regulation: PolicyGuard AI Policy Templates

Alt text: Infographic showing the 12 essential sections every AI policy must include: purpose and scope, definitions, approved AI tools, prohibited uses, data handling rules, human oversight, disclosure requirements, training requirements, compliance monitoring, incident reporting, enforcement, and policy governance

4. 12 Essential Sections Every AI Policy Must Include

A comprehensive AI policy is more than a one-page memo. It needs to be specific enough that employees know exactly what they can and cannot do, and detailed enough that auditors can verify compliance. Here are the 12 sections every AI policy should include.

Section 1: Purpose and Scope. Define why the policy exists, who it applies to (all employees, specific departments, contractors), and what AI tools and use cases it covers. Be specific. “This policy applies to all employees and contractors who use AI-powered tools including but not limited to ChatGPT, Claude, Gemini, Copilot, and Midjourney in the course of their work.”

Section 2: Definitions. Define key terms so there is no ambiguity. What counts as an “AI tool”? What is “personal data” under your policy? What does “confidential information” include? Clear definitions prevent disputes later.

Section 3: Approved AI Tools. List every AI tool that is approved for use, along with any conditions. Some tools might be approved for general use while others require manager approval. Specify which tools are prohibited entirely.

Section 4: Prohibited Uses. Be explicit about what employees must never do. Common prohibitions include: entering personal data of customers or employees, sharing proprietary source code, uploading confidential financial data, using AI outputs in legal or medical decisions without human review, and sharing trade secrets or strategic plans.

Section 5: Data Handling Rules. This is the most critical section for regulatory compliance. Specify exactly what data types can and cannot be entered into AI tools. Create clear categories: public data (allowed), internal data (conditional), confidential data (prohibited), personal data (prohibited without specific approval), and regulated data like health information or financial records (strictly prohibited).

Section 6: Human Oversight Requirements. Define when AI outputs must be reviewed by a human before being used. This is required by GDPR Article 22 and the EU AI Act for many use cases. Specify who reviews, what they check for, and how review is documented.

Section 7: Disclosure and Transparency. Define when employees must disclose that AI was used. This includes customer-facing communications, published content, code contributions, and any context where a reasonable person would expect to know AI was involved.

Section 8: Training Requirements. Specify what training employees must complete before using AI tools, how often training must be refreshed, and what the minimum passing score is for any quizzes or assessments.

Section 9: Compliance Monitoring. Describe how the organization monitors AI tool usage and policy compliance. This might include browser extension enforcement, periodic audits, usage analytics, or self-reporting requirements.

Section 10: Incident Reporting. Define what constitutes a policy violation, how employees should report incidents (including their own accidental violations), and what the investigation process looks like. Make it clear that reporting is encouraged, not punished.

Section 11: Enforcement and Consequences. Specify the consequences for policy violations, ranging from retraining requirements for minor violations to disciplinary action for serious or repeated violations. This section needs to be clear but fair.

Section 12: Policy Governance. Define who owns the policy, how often it is reviewed (at minimum annually, and whenever a relevant regulation changes), and what the approval process is for updates.
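The data categories in Section 5 are easiest to apply when they are written down as a lookup table rather than left to interpretation. The following is a minimal sketch, not a prescribed implementation: the names `DataCategory`, `Decision`, and `may_enter_into_ai_tool` are hypothetical, and it assumes that "conditional" means "allowed only with approval," which your own policy would need to define.

```python
from enum import Enum


class DataCategory(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"
    PERSONAL = "personal"
    REGULATED = "regulated"  # e.g. health information or financial records


class Decision(Enum):
    ALLOWED = "allowed"
    CONDITIONAL = "conditional"
    PROHIBITED = "prohibited"
    PROHIBITED_WITHOUT_APPROVAL = "prohibited without specific approval"
    STRICTLY_PROHIBITED = "strictly prohibited"


# Mirrors the five categories described in Section 5.
DATA_RULES = {
    DataCategory.PUBLIC: Decision.ALLOWED,
    DataCategory.INTERNAL: Decision.CONDITIONAL,
    DataCategory.CONFIDENTIAL: Decision.PROHIBITED,
    DataCategory.PERSONAL: Decision.PROHIBITED_WITHOUT_APPROVAL,
    DataCategory.REGULATED: Decision.STRICTLY_PROHIBITED,
}


def may_enter_into_ai_tool(category: DataCategory, has_specific_approval: bool = False) -> bool:
    """Return True if data of this category may be entered into an AI tool.

    Assumption: both "conditional" and "prohibited without specific approval"
    are unlocked by an explicit approval; everything else is fixed.
    """
    rule = DATA_RULES[category]
    if rule is Decision.ALLOWED:
        return True
    if rule in (Decision.CONDITIONAL, Decision.PROHIBITED_WITHOUT_APPROVAL):
        return has_specific_approval
    return False
```

Because every category maps to exactly one rule, an employee or an auditor can read the table and get the same answer every time.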

Writing these 12 sections from scratch takes weeks. That is why PolicyGuard provides 28+ expert-curated policy templates that include all 12 sections, pre-written by compliance professionals, with built-in training modules and quiz questions. You pick a template, customize it for your company, and publish. The hard work is already done.

Related guide: AI Acceptable Use Policy Template: A Complete Guide for 2026

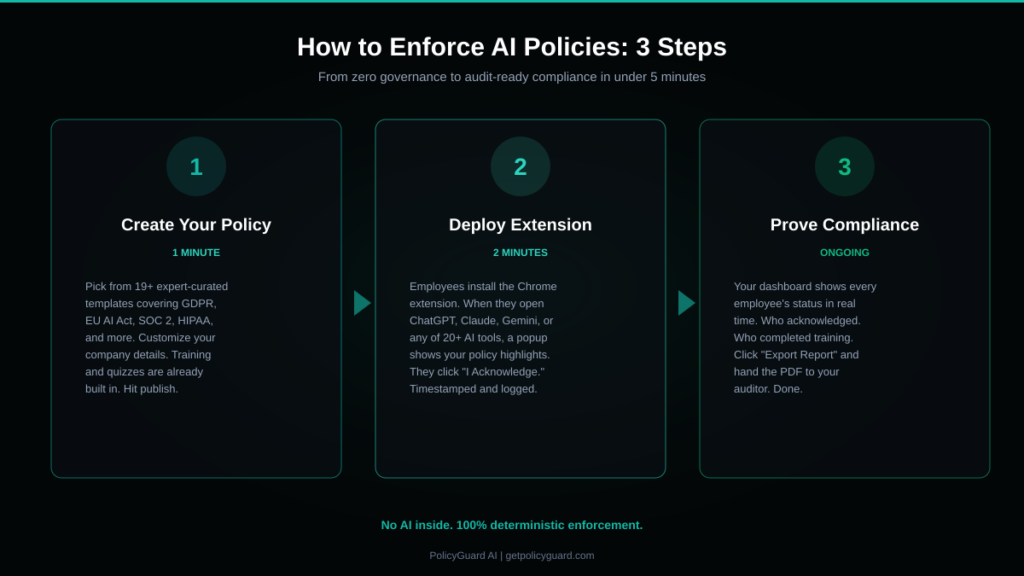

Alt text: Three-step process for enforcing AI policies showing step 1 create your policy in 1 minute, step 2 deploy the browser extension in 2 minutes, and step 3 prove compliance on an ongoing basis

5. How to Enforce Your AI Policy (Not Just Publish It)

Here is the uncomfortable truth about AI governance: having a policy means nothing if you cannot prove it is being enforced. Most companies create a PDF, email it to employees, and call it done. When an auditor asks for proof of enforcement, they scramble.

Effective enforcement requires three things working together.

Step 1: Create and communicate the policy. The policy needs to be accessible, understandable, and actively communicated. Not buried in a shared drive. Not hidden in an employee handbook that nobody reads. It needs to be surfaced at the point of use, when an employee is about to use an AI tool.

Step 2: Enforce at the point of use. This is where most companies fail. The most effective enforcement happens in the browser, at the moment an employee opens an AI tool. A browser extension that detects when an employee visits ChatGPT, Claude, Gemini, or any other AI tool and presents a policy acknowledgment popup is the most reliable enforcement mechanism available. The employee sees the policy highlights, clicks “I Acknowledge,” and the acknowledgment is timestamped and logged. No extra steps for the employee. No hoping they remember the email from three months ago.

Step 3: Document everything. Every policy acknowledgment, every training completion, every quiz score needs to be logged with a timestamp, the employee’s identity, and the specific policy version. This log becomes your audit trail. When an auditor asks “How do you know your employees follow your AI policy?”, you pull up the dashboard, show the data, and export a report. Conversation over.
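To make Steps 2 and 3 concrete, here is a rough sketch of the logic behind point-of-use enforcement: recognize that a URL belongs to an AI tool, then log a timestamped acknowledgment tied to the employee and the policy version. It is written in Python for readability and is not how any particular browser extension is built. The host list, the `Acknowledgment` record, and the function names are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional
from urllib.parse import urlparse

# Illustrative host list; a real deployment would maintain its own.
AI_TOOL_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


@dataclass(frozen=True)
class Acknowledgment:
    employee_email: str
    policy_version: str
    ai_tool: str
    acknowledged_at: datetime  # stored in UTC


def detect_ai_tool(url: str) -> Optional[str]:
    """Return the AI tool name if the URL's host matches a known tool or its subdomain."""
    host = (urlparse(url).hostname or "").lower()
    for known_host, tool in AI_TOOL_HOSTS.items():
        if host == known_host or host.endswith("." + known_host):
            return tool
    return None


def record_acknowledgment(
    log: List[Acknowledgment], employee_email: str, policy_version: str, url: str
) -> Optional[Acknowledgment]:
    """Append an acknowledgment to the log if the URL is an AI tool; otherwise do nothing."""
    tool = detect_ai_tool(url)
    if tool is None:
        return None
    entry = Acknowledgment(employee_email, policy_version, tool, datetime.now(timezone.utc))
    log.append(entry)
    return entry
```

Each logged entry carries the three things Step 3 asks for: a timestamp, the employee's identity, and the specific policy version.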

The gap between “we have a policy” and “we can prove enforcement” is where compliance risk lives. The companies that close this gap are the ones that survive audits.

See how PolicyGuard enforces AI policies: Watch the Demo

6. Training Employees on AI Policy Compliance

Publishing a policy is not enough. Employees need to understand what the policy means, why it exists, and how to follow it in their daily work. Effective AI policy training has four components.

Component 1: Initial training when the policy is published. Every employee who is subject to the policy should complete a training module that walks through the key sections. This is not a one-hour lecture. It should be a focused 10-15 minute module that covers the essentials: what tools are approved, what data cannot be shared, when disclosure is required, and how to report issues.

Component 2: Comprehension verification. Training without verification is just a presentation. Include a quiz at the end of each training module that tests whether the employee actually understands the key points. Set a minimum passing score (80% is a common threshold) and require employees who do not pass to retake the quiz.

Component 3: Ongoing reinforcement. AI governance is not a one-time event. The browser extension policy popup serves as ongoing reinforcement, reminding employees of the key rules every time they use an AI tool. Periodic refresher training (quarterly or when the policy updates) keeps knowledge current.

Component 4: Documentation and tracking. Every training completion and quiz score needs to be logged and trackable. Your compliance dashboard should show at a glance: how many employees have completed training, who has not, what their quiz scores were, and when training was last completed.
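The dashboard view described in Component 4 boils down to a small summary over training records. The sketch below assumes a hypothetical `TrainingRecord` shape and uses the 80% passing score mentioned in Component 2; adjust both to match your own policy.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

PASSING_SCORE = 80  # percent, taken from the example threshold in Component 2


@dataclass
class TrainingRecord:
    employee: str
    completed: bool
    quiz_score: Optional[int] = None  # percent; None if no quiz attempt yet


def passed(record: TrainingRecord, passing_score: int = PASSING_SCORE) -> bool:
    """A record passes only if training is complete and the quiz score meets the threshold."""
    return (
        record.completed
        and record.quiz_score is not None
        and record.quiz_score >= passing_score
    )


def summarize(records: List[TrainingRecord]) -> Dict[str, object]:
    """Group employees the way a compliance dashboard would show them."""
    return {
        "total": len(records),
        "passed": sorted(r.employee for r in records if passed(r)),
        "needs_retake": sorted(r.employee for r in records if r.completed and not passed(r)),
        "not_started": sorted(r.employee for r in records if not r.completed),
    }
```

Calling `summarize` on the full list of records answers the questions from Component 4 in one pass: who has finished, who needs to retake the quiz, and who has not started.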

PolicyGuard includes training modules and quiz questions built into every policy template. When you publish a policy, the associated training is automatically assigned to the relevant employees. Completion and quiz scores are tracked in your dashboard and included in audit reports.

7. Building an AI Audit Trail That Regulators Accept

An audit trail is a chronological record of compliance activities that can be presented as evidence during an audit, investigation, or legal proceeding. For AI governance, your audit trail needs to answer five questions.

- Who acknowledged the policy? (Employee name, email, department)

- What did they acknowledge? (Policy name, version number, specific sections)

- When did they acknowledge it? (Exact timestamp, not “sometime in Q1”)

- Where did the acknowledgment happen? (Which AI tool triggered it, which device)

- How was compliance verified? (Training completion, quiz score, extension status)

A good audit trail is factual, timestamped, and immutable. It records what happened, not what an AI model thinks happened. This is a critical distinction. Some governance platforms use AI to generate compliance summaries, which means the audit trail itself could contain errors or hallucinations. When your evidence for compliance is AI-generated, and the topic being audited is your governance of AI, you have a credibility problem.
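One common way to make a log tamper-evident is a hash chain, where each entry stores a hash of its own content plus the hash of the entry before it. This is a technique chosen for illustration, not a description of how any specific platform stores its audit trail. The function names and entry fields below are assumptions.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry: Dict[str, str], prev_hash: str) -> str:
    """Hash the entry's content together with the previous entry's hash."""
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(chain: List[dict], entry: Dict[str, str]) -> None:
    """Append an audit entry, linking it to the entry before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"entry": entry, "prev_hash": prev_hash, "hash": _digest(entry, prev_hash)})


def verify_chain(chain: List[dict]) -> bool:
    """Return False if any entry was altered or any link between entries is broken."""
    prev_hash = GENESIS
    for record in chain:
        if record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != _digest(record["entry"], prev_hash):
            return False
        prev_hash = record["hash"]
    return True
```

If someone edits an old entry after the fact, `verify_chain` returns `False`, which is the property an auditor cares about: the record shows what happened, and changes to it are detectable.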

Your audit trail should be exportable as a PDF or document that you can hand to an auditor. It should include per-employee compliance status, policy acknowledgment history, training completion records, and a summary of organizational compliance posture.

Related guide: AI Audit Trail: What It Is and Why Regulators Are Asking for One

8. Shadow AI: The Risk You Cannot See

Shadow AI is the use of AI tools by employees without organizational knowledge or approval. It is the AI equivalent of shadow IT, and it is happening at scale.

The numbers are stark. 80% of employees use AI tools their employer has not approved. 59% actively hide their AI usage. This means the majority of AI usage in your organization is invisible to you, uncontrolled by your policy, and creating compliance risk you cannot quantify.

Shadow AI creates three specific risks.

Data leakage risk. When employees use unapproved AI tools, you have no control over what data they share. Customer information, source code, financial data, and strategic plans could be entering AI systems without any safeguards.

Compliance risk. If a regulator asks how you govern AI usage and your answer is “we have a policy but we don’t know who’s actually using AI tools or what they’re doing with them,” that is not a defensible position.

Quality and liability risk. AI-generated outputs that are not reviewed by humans can contain errors, biases, or fabricated information. If employees are using AI without oversight and those outputs reach customers, partners, or legal proceedings, the liability falls on your organization.

Detecting and managing shadow AI requires visibility into what AI tools employees are actually using. A browser extension that monitors AI tool access (without reading conversations or logging keystrokes) provides this visibility. When a new, unapproved AI tool is detected, administrators are alerted and can take action.
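The detection step can be sketched as a comparison between the AI tool hosts employees visit and the hosts your policy approves. The example below looks only at hostnames, in line with the point above about not reading conversations or logging keystrokes. The two host sets and the function names are illustrative assumptions, not a real product's configuration.

```python
from collections import Counter
from typing import Iterable
from urllib.parse import urlparse

# Illustrative lists; your policy's Approved AI Tools section would define the first one.
APPROVED_HOSTS = {"chatgpt.com", "claude.ai"}
KNOWN_AI_HOSTS = APPROVED_HOSTS | {"gemini.google.com", "perplexity.ai", "midjourney.com"}


def normalize_host(url: str) -> str:
    """Extract the lowercase hostname and drop a leading 'www.'."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def find_unapproved_tools(visited_urls: Iterable[str]) -> Counter:
    """Count visits to known AI tools that are not on the approved list."""
    hits: Counter = Counter()
    for url in visited_urls:
        host = normalize_host(url)
        if host in KNOWN_AI_HOSTS and host not in APPROVED_HOSTS:
            hits[host] += 1
    return hits
```

A non-empty result is the signal for an administrator alert: either the tool gets reviewed and added to the approved list, or the employees involved are pointed back to the policy.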

Related guide: Shadow AI: The Hidden Risk in Every Company Using AI Tools

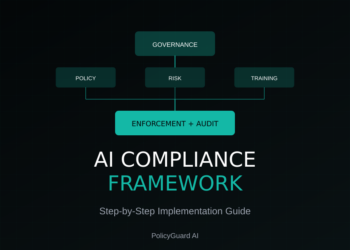

9. AI Governance Frameworks: Choosing the Right Approach

An AI governance framework provides the structure for your entire AI governance program. Several frameworks exist, and the right choice depends on your regulatory requirements, industry, and organizational maturity.

NIST AI Risk Management Framework. The NIST AI RMF is a voluntary framework with four core functions: Govern (establish policies and accountability), Map (understand the AI context and risks), Measure (assess and monitor risks), and Manage (prioritize and respond to risks). It is the most widely referenced framework in the US and is increasingly cited by auditors. If you are a US-based company or serve US clients, this is a strong starting point.

ISO/IEC 42001. This international standard provides a formal AI management system framework. It is more prescriptive than NIST and includes requirements for risk assessment, impact analysis, and continuous improvement. If you operate internationally or want a certifiable standard, ISO 42001 is the path.

EU AI Act Risk-Based Approach. The EU AI Act itself provides a framework organized around risk levels. If you sell products or services in the EU, or process data of EU residents, aligning your governance with the EU AI Act risk categories is essential.

Custom Governance Framework. For many companies, especially those in the 50-500 employee range, a custom framework that borrows from NIST, ISO, and the EU AI Act is the most practical approach. You do not need to implement every element of every framework. You need the elements that match your specific regulatory obligations and risk profile.

The common elements across all frameworks: documented policies, employee training, ongoing monitoring, risk assessment, incident response, and auditable evidence. PolicyGuard covers the policy, training, monitoring, and evidence components regardless of which framework you adopt.

Related guide: AI Compliance Framework: How to Build One From Scratch

10. No AI Inside: Why Deterministic Enforcement Matters

This is where PolicyGuard takes a fundamentally different approach from other governance platforms.

Most AI governance tools use AI to generate policies, score compliance, and summarize audit trails. This creates a problem: you are using a black box to govern the use of black boxes. When an auditor asks how you know your policies are correct, and your answer is “our AI wrote them,” that is not reassuring. When they ask how you know your compliance scores are accurate, and your answer is “our AI calculated them,” that is not evidence.

PolicyGuard intentionally has no AI inside the platform.

Every policy template is written by compliance professionals and reviewed by regulatory experts. Not generated by AI. Every enforcement decision (did the employee acknowledge? did they complete training? did they pass the quiz?) is made by deterministic code. No probabilistic scoring. No AI interpretation. No hallucinations. Every audit trail entry is a factual timestamp: who did what, when, with which policy version. Not an AI-generated summary that might be inaccurate.

You cannot solve the black box problem with another black box. Compliance demands certainty, and certainty requires deterministic systems.

This is not a limitation. It is the product.
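To show what "deterministic" means in practice, here is a minimal sketch of a compliance check built only from the three questions above: did the employee acknowledge, did they complete training, did they pass the quiz. It is an illustration under assumed names (`EmployeeStatus`, `compliance_findings`) and an assumed 80% passing score, not PolicyGuard's actual code.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class EmployeeStatus:
    acknowledged_policy: bool
    completed_training: bool
    quiz_score: Optional[int]  # percent; None if the quiz was never taken


def compliance_findings(status: EmployeeStatus, passing_score: int = 80) -> List[str]:
    """List every unmet requirement. The same input always yields the same list."""
    findings = []
    if not status.acknowledged_policy:
        findings.append("policy not acknowledged")
    if not status.completed_training:
        findings.append("training not completed")
    if status.quiz_score is None or status.quiz_score < passing_score:
        findings.append("quiz not passed")
    return findings


def is_compliant(status: EmployeeStatus, passing_score: int = 80) -> bool:
    """An employee is compliant only when there are no findings."""
    return not compliance_findings(status, passing_score)
```

There is no scoring model and no weighting here. Every result can be traced back to a specific fact in the record, which is what makes it usable as evidence.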

11. Getting Started: From Zero to Audit-Ready in 5 Minutes

If you have read this far, you understand why AI governance matters and what it requires. Here is how to actually implement it.

Step 1 (1 minute): Pick a template. Go to the PolicyGuard template library and select the template that matches your primary regulatory requirement. If you are not sure, start with the General AI Acceptable Use Policy. It covers the fundamentals that every company needs.

Step 2 (2 minutes): Customize and publish. Add your company name, list of approved AI tools, and any department-specific rules. The training modules and quiz questions are already built into the template. Hit publish and your policy is live.

Step 3 (2 minutes): Deploy the extension. Have your employees install the PolicyGuard Chrome extension. The next time they open ChatGPT, Claude, Gemini, or any supported AI tool, they will see your policy highlights and acknowledge them with one click.

From this point forward: Every acknowledgment, every training completion, and every quiz score is logged in your dashboard. When an auditor asks for proof of AI policy compliance, you click Export and hand them the report.

That is the difference between “we have a policy” and “we can prove enforcement.”

Start your free 14-day trial: getpolicyguard.com

See pricing: getpolicyguard.com/pricing

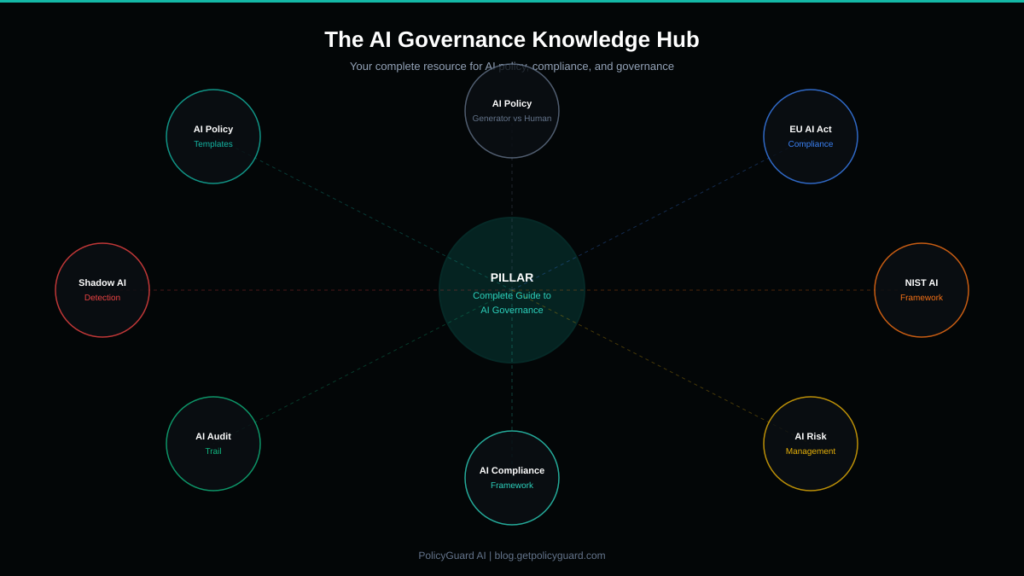

Alt text: Diagram showing the AI Governance Knowledge Hub with the pillar guide at the center connecting to cluster posts on AI policy templates, EU AI Act compliance, shadow AI detection, NIST AI framework, AI audit trail, AI compliance framework, AI risk management, and AI policy generator comparison

12. Related Resources

This guide is the central hub of our AI Governance Knowledge Hub. For deeper coverage of specific topics, explore these resources:

- AI Acceptable Use Policy Template: A Complete Guide – Everything you need to know about creating an AI acceptable use policy, with a free template preview.

- EU AI Act Compliance: What Companies Need to Do in 2026 – A practical breakdown of the EU AI Act requirements and how to comply.

- NIST AI Risk Management Framework: Practical Implementation Guide – How to implement the NIST AI RMF in your organization.

- Shadow AI: The Hidden Risk in Every Company Using AI Tools – Understanding and managing unapproved AI tool usage.

- AI Audit Trail: What It Is and Why Regulators Are Asking for One – How to build an audit trail that satisfies regulators.

- AI Compliance Framework: How to Build One From Scratch – Step-by-step guide to building your organization’s compliance framework.

- AI Risk Management: A Framework for Non-Technical Leaders – AI risk management explained for executives and managers.

- AI Policy Generator vs Expert-Curated Templates: Why We Chose Humans – Why PolicyGuard uses human-authored templates instead of AI generation.

Frequently Asked Questions

What is AI governance? AI governance is the system of policies, processes, and controls that defines how an organization uses artificial intelligence tools. It covers which AI tools are approved, what data can be shared with them, how employees are trained, how compliance is monitored, and how enforcement is documented. Effective AI governance produces auditable evidence that your AI usage policy is being followed.

What is an AI acceptable use policy? An AI acceptable use policy is a document that defines the rules for how employees can use AI tools in the workplace. It typically covers approved tools, prohibited uses, data handling requirements, disclosure obligations, training requirements, and enforcement consequences. PolicyGuard provides 28+ expert-curated templates that include all of these sections.

Do I need an AI policy for GDPR compliance? Yes. GDPR requires organizations to document how personal data is processed, and this includes personal data entered into AI tools. GDPR Articles 5, 13, 22, and 35 all have direct implications for AI usage. Without a documented AI policy that addresses data processing in AI tools, you are exposed to GDPR enforcement action.

What is the NIST AI Risk Management Framework? The NIST AI RMF is a voluntary framework published by the National Institute of Standards and Technology that provides a structured approach to managing AI risks. It has four core functions: Govern, Map, Measure, and Manage. While voluntary, it is increasingly referenced by auditors and regulators as a best practice standard.

How do you enforce an AI policy? The most effective enforcement happens at the point of use. A browser extension that detects when employees open AI tools and presents a policy acknowledgment popup ensures every employee sees and acknowledges your policy before using AI. Combined with automatic training assignments and compliance dashboards, this creates a complete enforcement system with a documented audit trail.

What is shadow AI? Shadow AI is the use of AI tools by employees without organizational knowledge or approval. Research shows 80% of employees use unapproved AI tools and 59% actively hide their usage. Shadow AI creates data leakage, compliance, and liability risks that most organizations cannot see or quantify without specialized detection tools.

What does “no AI inside” mean for PolicyGuard? PolicyGuard intentionally uses no AI inside the platform. Policy templates are written by compliance professionals, not generated by AI. Enforcement decisions are made by deterministic code, not AI scoring. Audit trails are factual timestamps, not AI-generated summaries. This means zero hallucination risk, zero black box decisions, and zero dependency on third-party AI APIs for your compliance data.

