This guide is part of our Complete Guide to AI Policy and Governance.

The 2026 AI Compliance Reality

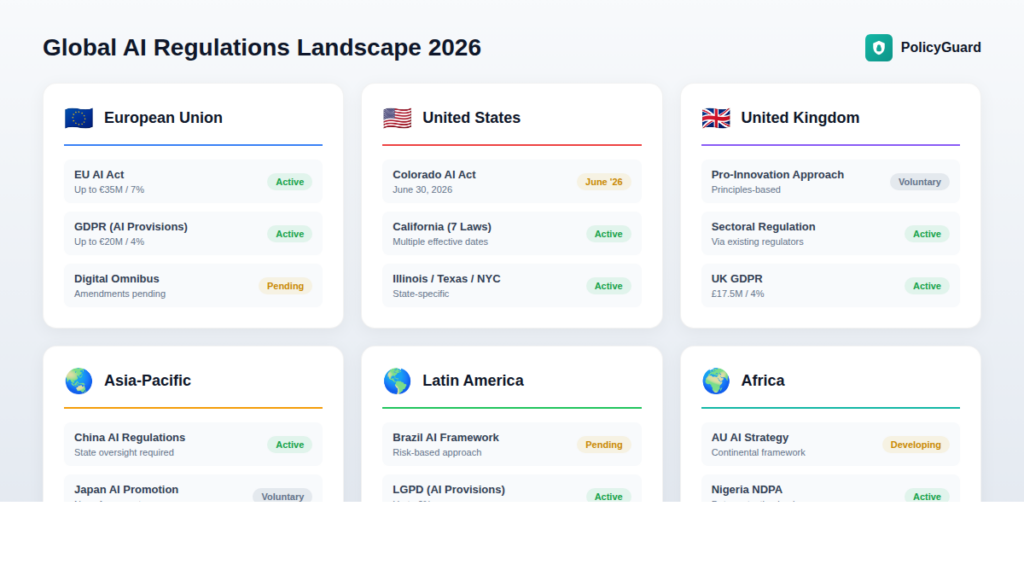

Seventy-two countries have launched over 1,000 AI policy initiatives. The European Union enforces binding rules with penalties reaching 7% of global annual turnover. Colorado became the first US state to enact comprehensive AI legislation. California has layered seven AI laws and regulations. Illinois, Texas, and New York have added their own requirements.

And yet, more than half of organizations lack a complete inventory of the AI systems they currently use.

This guide breaks down the regulations that matter in 2026. It focuses on the laws that are enforceable now or will be within months, the penalties attached to them, and the practical steps to achieve compliance.

If your organization uses AI tools for any business purpose, at least one of these regulations likely applies to you.

EU AI Act: The Global Standard

The EU AI Act entered into force on August 1, 2024, and becomes generally applicable on August 2, 2026, with some obligations phasing in through August 2, 2027. It is the most comprehensive AI regulation in the world and, like GDPR before it, has extraterritorial reach. If you place AI systems on the EU market, or their output is used in the EU, you are in scope regardless of where your company is headquartered.

Risk-Based Classification

The EU AI Act classifies AI systems into four risk tiers:

Unacceptable Risk (Prohibited): Social scoring systems, real-time biometric identification in public spaces for law enforcement (with exceptions), manipulation techniques exploiting vulnerabilities, emotion recognition in workplaces and schools. These prohibitions became effective February 2, 2025.

High-Risk: AI systems used in employment decisions, credit assessments, education, healthcare, critical infrastructure, and law enforcement. These require conformity assessments, technical documentation, risk management systems, human oversight, and registration in the EU database. Full requirements apply August 2, 2026 for standalone systems and August 2, 2027 for systems embedded in regulated products.

Limited Risk: AI systems with transparency obligations, including chatbots (must disclose they are AI), deepfakes (must be labeled), and emotion recognition systems (must inform users).

Minimal Risk: All other AI systems. These carry no additional obligations beyond general duties such as AI literacy.
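As a rough first-pass triage, the tier logic can be sketched in Python. The use-case labels are hypothetical shorthand, and the real legal tests (Article 5 prohibitions, Article 6 with Annexes I and III, Article 50 transparency duties) contain exceptions this sketch ignores, so treat the output as a prompt for legal review rather than a classification.

```python
from enum import IntEnum


class EURiskTier(IntEnum):
    """EU AI Act risk tiers; a higher value means stricter obligations."""
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2
    PROHIBITED = 3


# Hypothetical shorthand labels for use cases. The legal tests live in Article 5
# (prohibitions), Article 6 with Annexes I and III (high-risk), and Article 50
# (transparency), and include exceptions this lookup does not model.
PROHIBITED_USES = {"social_scoring", "workplace_emotion_recognition", "exploiting_vulnerabilities"}
HIGH_RISK_USES = {"employment", "credit_scoring", "education", "critical_infrastructure", "law_enforcement"}
TRANSPARENCY_USES = {"chatbot", "deepfake_generation"}


def triage_eu_tier(use_cases: set[str]) -> EURiskTier:
    """Return the strictest tier triggered by any of a system's use cases."""
    if use_cases & PROHIBITED_USES:
        return EURiskTier.PROHIBITED
    if use_cases & HIGH_RISK_USES:
        return EURiskTier.HIGH
    if use_cases & TRANSPARENCY_USES:
        return EURiskTier.LIMITED
    return EURiskTier.MINIMAL


if __name__ == "__main__":
    # A chatbot that screens job applicants triggers the high-risk tier.
    print(triage_eu_tier({"chatbot", "employment"}).name)  # HIGH
```

A high-risk system can carry transparency duties too (a hiring chatbot is one example), so in practice you would record every triggered tier, not only the strictest one.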

Key Deadlines

| Date | Requirement |
|---|---|
| February 2, 2025 | Prohibitions on unacceptable-risk AI and AI literacy obligations in effect |
| August 2, 2025 | Governance rules and GPAI model obligations in effect |
| August 2, 2026 | High-risk AI system requirements for Annex III systems |
| August 2, 2026 | Transparency obligations for chatbots and deepfakes |
| August 2, 2026 | AI regulatory sandboxes required in each member state |
| August 2, 2027 | High-risk requirements for AI embedded in regulated products |

Penalties

The EU AI Act penalty structure makes non-compliance a board-level concern:

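- Prohibited AI practices: Up to €35 million or 7% of global annual turnover, whichever is higher

- Non-compliance with most other obligations, including high-risk and transparency requirements: Up to €15 million or 3% of global annual turnover, whichever is higher

- Incorrect, incomplete, or misleading information to authorities: Up to €7.5 million or 1% of global annual turnover, whichever is higher

For SMEs and start-ups, each cap is the lower of the two amounts rather than the higher.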

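For context, based on 2024 revenue: Meta's 7% ceiling (on roughly $165 billion) would exceed $11 billion, Alphabet's (roughly $350 billion) would approach $25 billion, and Microsoft's (roughly $245 billion in fiscal 2024) would exceed $17 billion.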
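The "whichever is higher" rule is simple arithmetic, and it is easy to get backwards for smaller companies. The sketch below computes the statutory ceiling (not an expected fine) for each tier, using the percentages in the final text of Article 99 and the Article 99(6) rule that SMEs and start-ups face the lower of the two amounts. The tier keys are hypothetical names chosen for this example.

```python
# Penalty tiers under Article 99 of the EU AI Act:
# tier name -> (fixed cap in EUR, percent of total worldwide annual turnover)
PENALTY_TIERS: dict[str, tuple[int, int]] = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligations": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int, is_sme: bool = False) -> int:
    """Return the statutory ceiling for one penalty tier.

    Most undertakings face the HIGHER of the fixed cap and the turnover share;
    SMEs and start-ups face the LOWER of the two (Article 99(6)).
    """
    fixed_cap, percent = PENALTY_TIERS[tier]
    # Integer arithmetic avoids floating-point rounding; floors to whole euros.
    turnover_share = worldwide_turnover_eur * percent // 100
    return min(fixed_cap, turnover_share) if is_sme else max(fixed_cap, turnover_share)


if __name__ == "__main__":
    # Hypothetical undertaking with EUR 10 billion turnover in the preceding year.
    print(max_fine_eur("prohibited_practice", 10_000_000_000))  # 700000000
    # Hypothetical SME with EUR 20 million turnover: the lower amount applies.
    print(max_fine_eur("prohibited_practice", 20_000_000, is_sme=True))  # 1400000
```

Actual fines are set case by case; Article 99 lists factors such as the nature, gravity, and duration of the infringement that authorities weigh below these ceilings.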

Digital Omnibus Update

In November 2025, the European Commission proposed the Digital Omnibus package, which could postpone high-risk obligations for Annex III systems until December 2027. However, this requires trilogue negotiation and is not guaranteed. Organizations should treat August 2, 2026 as the binding deadline until formal legislation confirms otherwise.

What You Actually Need to Do

- Inventory all AI systems that interact with EU residents or process EU-sourced data

- Classify each system against the risk tiers

- Document high-risk systems with technical specifications, training data provenance, and risk assessments

- Implement human oversight mechanisms for high-risk deployments

- Establish AI governance with cross-functional accountability

- Ensure staff have a sufficient level of AI literacy (required since February 2, 2025)

- Prepare for conformity assessments before August 2026

Related guide: EU AI Act Compliance: What Companies Need to Know in 2026

Colorado AI Act: First Comprehensive US State Law

Colorado’s SB 24-205, the Colorado Artificial Intelligence Act, is the first enacted comprehensive state law regulating high-risk AI systems in the United States. Originally scheduled to take effect February 1, 2026, it was postponed during an August 2025 special session after lawmakers failed to agree on amendments. The law now takes effect June 30, 2026.

Scope

The Colorado AI Act applies to any person doing business in Colorado that:

- Develops, or intentionally and substantially modifies, a high-risk AI system (a “developer”)

- Deploys a high-risk AI system to make consequential decisions affecting Colorado consumers (a “deployer”)

A “high-risk” AI system is any system that, when deployed, makes, or is a substantial factor in making, a “consequential decision.” Consequential decisions are those with a material legal or similarly significant effect on the provision, denial, cost, or terms of:

- Education enrollment or opportunity

- Employment or employment opportunity

- Financial or lending services

- Essential government services

- Healthcare services

- Housing

- Insurance

- Legal services
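The definition above is effectively a two-part test: is the decision in one of the eight listed domains, and does the system make it or act as a substantial factor in it? The sketch below encodes that as a first-pass screen over a hypothetical `AIUse` record. It deliberately ignores the statute's exclusions (for example, systems intended only to perform narrow procedural tasks) and the legal meaning of "substantial factor," both of which need counsel's review.

```python
from dataclasses import dataclass

# The eight consequential-decision domains listed in SB 24-205 (hypothetical keys).
CONSEQUENTIAL_DOMAINS = frozenset({
    "education", "employment", "financial_or_lending", "essential_government_service",
    "healthcare", "housing", "insurance", "legal_service",
})


@dataclass
class AIUse:
    """Hypothetical record describing how one AI system is used."""
    domain: str               # area the output affects, e.g. "housing"
    makes_decision: bool      # the system decides without a human in the loop
    substantial_factor: bool  # the output is a substantial factor in a human decision


def is_colorado_high_risk(use: AIUse) -> bool:
    """First-pass screen: does this use look like a Colorado 'high-risk' AI system?

    Statutory exclusions are NOT modeled here.
    """
    in_scope_domain = use.domain in CONSEQUENTIAL_DOMAINS
    return in_scope_domain and (use.makes_decision or use.substantial_factor)
```

A tenant-screening score that a leasing agent relies on, for instance, would be `AIUse("housing", makes_decision=False, substantial_factor=True)` and screens as high-risk.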

Requirements for Developers

- Use reasonable care to protect consumers from algorithmic discrimination

- Provide deployers with documentation necessary to complete impact assessments

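- Publish a statement summarizing the high-risk systems they make available and how they manage known or reasonably foreseeable risks of algorithmic discrimination

- Disclose to the attorney general and to known deployers, within 90 days of discovery, any known or reasonably foreseeable risks of algorithmic discrimination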

Requirements for Deployers

- Implement risk management policies for high-risk AI systems

- Complete annual impact assessments

- Disclose to consumers when AI is used in consequential decisions

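- Publish a website statement summarizing the high-risk systems they deploy, and give consumers who receive an adverse consequential decision the principal reasons for it, a chance to correct inaccurate personal data, and an opportunity to appeal (with human review where technically feasible)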

- Disclose discovered algorithmic discrimination to the attorney general within 90 days

Safe Harbor

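Colorado offers two related protections:

- A rebuttable presumption that a developer or deployer used reasonable care if it complied with the Act’s requirements (and any rules the attorney general adopts)

- An affirmative defense in an attorney general enforcement action if the developer or deployer discovers and cures a violation through feedback, adversarial testing or red teaming, or internal review, and otherwise complies with a nationally or internationally recognized risk management framework (NIST AI RMF, ISO/IEC 42001)

This affirmative defense makes framework alignment a practical necessity, not just a best practice.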

Penalties

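The Colorado Attorney General has exclusive enforcement authority; the law creates no private right of action. A violation is treated as an unfair trade practice under the Colorado Consumer Protection Act, which allows civil penalties of up to $20,000 per violation.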

California: Seven AI Laws and Regulations

California has enacted multiple AI-related laws addressing different use cases, creating layered compliance requirements for companies operating in the state.

SB 53: Transparency in Frontier AI Act

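Effective January 1, 2026. Applies to developers of “frontier” AI models trained using more than 10^26 computational operations, with additional obligations for large developers with over $500 million in annual revenue. Requires: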

- Safety governance frameworks

- Transparency reporting on model capabilities and limitations

- Incident response protocols

SB 942: California AI Transparency Act

Effective August 2, 2026 (delayed from January 1, 2026 by AB 853). Requires:

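- Covered providers (generative AI systems with over 1 million monthly visitors or users that are publicly accessible in California) to offer a free AI detection tool

- Latent disclosures embedded in AI-generated image, video, and audio content, plus an option for users to add a manifest (visible) disclosure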

- System provenance data for online platforms

AB 2013: GAI Training Data Transparency Act

Effective January 1, 2026. Requires generative AI developers to publish summaries of:

- Training data sources

- Licensing arrangements

- Personal and synthetic data usage

- Modifications made to training data

SB 243: Companion Chatbots Act

Effective January 1, 2026. Mandates:

- Chatbot disclosure requirements

- Safety protocols against suicidal or harmful content

- Protections for minors (content limits and break reminders)

AB 489: Healthcare AI Disclosure Act

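Effective January 1, 2026. Prohibits AI systems from using terms, letters, or phrases that falsely imply they hold a healthcare license. A separate, earlier law (AB 3030, effective January 1, 2025) requires disclaimers when healthcare providers use generative AI to communicate clinical information to patients.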

Civil Rights Department AI Regulations

Effective October 1, 2025. Restricts discriminatory use of AI in employment decisions, makes anti-bias testing (or its absence) explicitly relevant to employment discrimination claims, and extends recordkeeping requirements for automated-decision system data to four years.

CCPA and CPPA Regulations

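The California Consumer Privacy Act and the California Privacy Protection Agency’s regulations on automated decision-making technology (ADMT) require the following, with ADMT obligations applying from January 1, 2027: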

- Automated decision-making disclosures

- Opt-out rights for certain AI-driven decisions

- Enhanced transparency when automated technologies replace human decision-making for employment decisions

Illinois: AI Video Interview Act

Illinois’ Artificial Intelligence Video Interview Act requires employers to:

- Notify candidates when AI analyzes video interviews

- Obtain consent before AI-based evaluation

- Comply with data retention and destruction requirements

- Delete AI-analyzed video upon candidate request (within 30 days)

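The Act has been in effect since January 1, 2020. Separately, HB 3773, which amends the Illinois Human Rights Act effective January 1, 2026, prohibits employers from using AI in ways that discriminate against protected classes and requires notice to employees when AI is used for covered employment decisions.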

Texas: Responsible AI Governance Act

Texas’s Responsible Artificial Intelligence Governance Act (TRAIGA) took effect January 1, 2026, and applies to developers and deployers that:

- Conduct business in Texas

- Provide products or services used by Texas residents

- Develop or deploy AI systems within Texas

The law prohibits AI systems for “restricted purposes,” including:

- Encouragement of self-harm, violence, or criminality

- Creation or distribution of AI-generated child sexual abuse material

- Unlawful deepfakes

- Communications impersonating minors in explicit contexts

New York City: Local Law 144

New York City’s Local Law 144, in effect since January 1, 2023 and enforced since July 5, 2023, regulates automated employment decision tools (AEDTs). Requirements include:

- Annual bias audits by independent auditors

- Public posting of audit results

- Notice to candidates and employees of AEDT use at least 10 business days before the tool is used

- Instructions for requesting an alternative selection process or accommodation

While limited to New York City, this law has influenced vendor practices nationally.
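The core statistic in an LL144 bias audit is the impact ratio: each category's selection rate divided by the selection rate of the most-selected category. A minimal sketch, assuming you already have per-category applicant and selection counts (the category names and counts below are hypothetical). Tools that score rather than select use a separate "scoring rate" calculation under the city's rules, which this sketch does not cover.

```python
def impact_ratios(selected: dict[str, int], applicants: dict[str, int]) -> dict[str, float]:
    """Compute LL144-style impact ratios for a tool that selects candidates.

    selection rate = number selected / number of applicants in the category
    impact ratio   = category selection rate / highest category selection rate
    """
    rates = {
        category: selected.get(category, 0) / count
        for category, count in applicants.items()
        if count > 0  # skip empty categories to avoid division by zero
    }
    if not rates:
        raise ValueError("no categories with applicants")
    best = max(rates.values())
    if best == 0:
        raise ValueError("no one was selected; impact ratios are undefined")
    return {category: rate / best for category, rate in rates.items()}


if __name__ == "__main__":
    # Hypothetical audit data for two categories.
    ratios = impact_ratios(selected={"A": 50, "B": 24}, applicants={"A": 100, "B": 80})
    for category, ratio in ratios.items():
        # 0.8 is the EEOC "four-fifths" rule of thumb, not an LL144 threshold.
        flag = "review" if ratio < 0.8 else "ok"
        print(f"{category}: {ratio:.2f} ({flag})")
```

LL144 requires publishing these ratios but sets no pass/fail cutoff; the 0.8 flag is borrowed from the federal Uniform Guidelines as a common screening heuristic.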

GDPR: The AI Implications

The General Data Protection Regulation predates the EU AI Act but directly applies to any AI system processing personal data of EU residents.

Article 22: Automated Decision-Making

Individuals have the right not to be subject to decisions based solely on automated processing that significantly affect them, unless:

- The decision is necessary for a contract

- Authorized by law

- Based on explicit consent

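This applies directly to AI-driven hiring, credit scoring, and similar consequential decisions. Where a decision relies on the contract or consent exceptions, the controller must still provide safeguards, including at least the right to obtain human intervention, to express one’s point of view, and to contest the decision.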
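The Article 22 reasoning can be written as a short decision flow: check whether the decision is solely automated with a legal or similarly significant effect, then whether an exception applies, then which safeguards follow. This is a rough screening sketch with hypothetical names; it ignores the extra restrictions on special-category data in Article 22(4), and "solely automated" means no meaningful human involvement, which token sign-off does not satisfy.

```python
from enum import Enum
from typing import Optional


class Art22Exception(Enum):
    """The three exceptions listed in GDPR Article 22(2)."""
    CONTRACT = "necessary for entering into or performing a contract"
    LAW = "authorised by EU or member state law"
    EXPLICIT_CONSENT = "based on explicit consent"


def article22_screen(solely_automated: bool, significant_effect: bool,
                     exception: Optional[Art22Exception]) -> str:
    """Rough first-pass screen of one decision flow against Article 22."""
    if not (solely_automated and significant_effect):
        # Meaningful human review exists, or there is no significant effect.
        return "outside Article 22; other GDPR duties still apply"
    if exception is None:
        return "not permitted: add meaningful human review or establish an exception"
    if exception in (Art22Exception.CONTRACT, Art22Exception.EXPLICIT_CONSENT):
        # Article 22(3): at least human intervention, the right to express a view, and to contest.
        return "permitted with safeguards: human intervention, right to be heard, right to contest"
    return "permitted, subject to the safeguards set out in the authorising law"
```

An automated credit rejection with no human review and no exception, for example, screens as "not permitted" until a reviewer with real authority is added or a valid exception is established.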

Requirements for AI Systems

- Lawful basis: Clear legal justification for processing personal data with AI

- Purpose limitation: Data used only for specified purposes disclosed to individuals

- Data protection impact assessments (DPIAs): Required for high-risk processing

- Data subject rights: Access, rectification, erasure, and portability apply to AI-processed data

- Cross-border transfers: Standard contractual clauses or adequacy decisions required

GDPR + EU AI Act Overlap

Organizations must comply with both regulations simultaneously. The EU AI Act does not replace GDPR. AI systems handling personal data must meet requirements from both frameworks, and penalties can be assessed under each.

Sector-Specific Regulations

Beyond general AI laws, certain industries face additional compliance requirements.

Healthcare

- HIPAA: Applies to AI systems handling protected health information (PHI)

- FDA regulations: AI/ML-based medical devices require clearance or approval

- State telehealth laws: May restrict AI involvement in patient care

Financial Services

- Fair lending laws (ECOA, Fair Housing Act): Prohibit discriminatory AI-driven lending decisions

- SEC and FINRA guidance: AI in trading and investment recommendations subject to existing securities regulations

- Bank examination: Federal regulators increasingly scrutinize AI in credit decisions

Employment

- Title VII, ADA, ADEA: AI systems producing discriminatory outcomes create legal liability regardless of intent

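- EEOC technical assistance: 2023 guidance stating that employers can be liable for discriminatory vendor AI was removed from the agency’s website in January 2025, but the underlying statutes still apply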

- State employment laws: California, Illinois, New York, and others add specific AI employment requirements

Federal AI Executive Actions

On December 11, 2025, President Trump signed an Executive Order titled “Ensuring a National Policy Framework for Artificial Intelligence.” The order signals intent to:

- Consolidate AI oversight at the federal level

- Counter the expanding patchwork of state AI rules

- Preempt state laws deemed inconsistent with federal policy

- Maintain US global AI dominance

The order directs the FTC to issue a policy statement by March 11, 2026, describing how the FTC Act applies to AI and when state laws may be preempted.

What This Means Practically

Courts will determine if and how the Executive Order affects state AI laws. Until judicial clarity emerges, organizations should:

- Maintain flexible compliance programs

- Continue preparing for state law requirements

- Monitor federal preemption developments

- Document good-faith compliance efforts

The Executive Order does not provide a basis for ignoring state laws currently in effect.

International Regulations

Canada: AIDA (Lapsed)

Canada’s proposed Artificial Intelligence and Data Act, part of Bill C-27, would have required:

- Risk management for high-impact AI systems

- Transparency and fairness obligations

- Rights protections for citizens

The bill died when Parliament was prorogued in January 2025. Any federal AI law would have to be reintroduced as new legislation.

UK: Principles-Based Approach

The UK pursues a principles-based, “pro-innovation” approach without EU-style prescriptive rules or AI-specific penalties. Existing sectoral regulators apply five cross-sectoral principles:

- Safety, security, and robustness

- Transparency and explainability

- Fairness

- Accountability and governance

- Contestability and redress

China: AI Governance with State Oversight

China has enacted regulations covering:

- Algorithmic recommendations

- Synthetic media (deepfakes)

- Generative AI services

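Providers face obligations such as filing algorithms with the Cyberspace Administration of China, completing security assessments for services that can shape public opinion, and labeling AI-generated content.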

Japan: AI Promotion Act

Japan’s AI Promotion Act, enacted in 2025, sets out basic principles and relies on voluntary guidelines; it carries no penalties. However, alignment with international standards may become a competitive necessity for companies operating in Japan.

Building a Multi-Jurisdictional Compliance Program

Organizations operating across multiple jurisdictions face overlapping and sometimes conflicting requirements. A practical compliance program addresses this through systematic governance.

Step 1: Inventory All AI Systems

Document every AI system in use, including:

- Vendor-provided tools

- Internally developed systems

- AI features embedded in other software

- Employee use of consumer AI tools (shadow AI)

Most organizations underestimate AI usage by 3x or more.
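One practical way to close the gap is to reconcile the inventory against other evidence of AI use, such as SSO logs, expense reports, or network monitoring. The sketch below assumes a hypothetical `AISystem` record (the field names are illustrative, not a standard schema) and reports tools seen in the wild but missing from the inventory, plus entries with no accountable owner.

```python
from dataclasses import dataclass, field
from enum import Enum


class Source(Enum):
    """Where an AI system came from (the categories listed in Step 1)."""
    VENDOR = "vendor-provided tool"
    INTERNAL = "internally developed"
    EMBEDDED = "AI feature embedded in other software"
    SHADOW = "employee use of consumer AI tool"


@dataclass
class AISystem:
    """One inventory entry. Field names are illustrative, not a standard schema."""
    name: str
    source: Source
    owner: str = ""  # accountable business owner; empty means unassigned
    purposes: list[str] = field(default_factory=list)


def find_gaps(inventory: list[AISystem], discovered: set[str]) -> dict[str, list[str]]:
    """Compare the inventory against AI tools found in other evidence.

    `discovered` might come from SSO logs, expense reports, or network
    monitoring; names are matched case-insensitively.
    """
    known = {system.name.lower() for system in inventory}
    return {
        "unregistered": sorted(tool for tool in discovered if tool.lower() not in known),
        "no_owner": sorted(system.name for system in inventory if not system.owner),
    }
```

Each "unregistered" result is a candidate shadow AI tool to classify, approve, or block.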

Step 2: Map Jurisdictional Exposure

For each AI system, identify:

- Where the system operates

- Whose data it processes

- Who is affected by its outputs

- Which jurisdictions have regulatory authority

A US company using AI for hiring that processes applications from EU residents is subject to both the EU AI Act and GDPR, in addition to any applicable US state laws.
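The mapping questions above can be turned into a simple screening function. This is a sketch under stated assumptions: the location codes are hypothetical, it covers only a few regimes discussed in this guide, and it simplifies their triggers (for instance, it treats any employment use in New York City as an AEDT and any listed Colorado domain as high-risk). Sector-specific laws are not modeled.

```python
# Hypothetical domain keys for Colorado's consequential-decision areas.
COLORADO_DOMAINS = {"education", "employment", "financial_or_lending", "essential_government_service",
                    "healthcare", "housing", "insurance", "legal_service"}


def applicable_regimes(affected: set[str], personal_data: bool, domain: str) -> set[str]:
    """Screen which regimes covered in this guide may reach one AI system.

    affected: where the people affected by the outputs are, e.g. {"EU", "US-CO"}
    personal_data: whether the system processes personal data
    domain: the decision area, e.g. "employment"
    """
    regimes = set()
    if "EU" in affected:
        regimes.add("EU AI Act")
        if personal_data:
            regimes.add("GDPR")
    if "US-CO" in affected and domain in COLORADO_DOMAINS:
        regimes.add("Colorado AI Act")
    if "US-NYC" in affected and domain == "employment":
        regimes.add("NYC Local Law 144")
    if "US-TX" in affected:
        regimes.add("Texas Responsible AI Governance Act")
    return regimes
```

For the hiring example in the paragraph above, `applicable_regimes({"EU"}, True, "employment")` returns `{"EU AI Act", "GDPR"}`, and adding `"US-CO"` to the locations adds the Colorado AI Act.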

Step 3: Classify by Risk Level

Apply the most restrictive applicable classification:

- EU AI Act risk tiers

- Colorado “consequential decision” criteria

- Sector-specific high-risk categories

When classifications conflict, default to the higher-risk designation.
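Applying "the most restrictive classification" is easier to audit when each regime's label is mapped onto one shared scale and the maximum wins. The scale and labels below are hypothetical; the design choice worth keeping is that an unmapped label raises an error instead of silently defaulting to low risk.

```python
# Hypothetical mapping of each regime's own label onto one shared severity scale.
SEVERITY: dict[tuple[str, str], int] = {
    ("eu_ai_act", "minimal"): 0,
    ("eu_ai_act", "limited"): 1,
    ("eu_ai_act", "high"): 2,
    ("eu_ai_act", "prohibited"): 3,
    ("colorado", "not_high_risk"): 0,
    ("colorado", "high_risk"): 2,
    ("sector", "standard"): 0,
    ("sector", "high_risk"): 2,
}
SCALE_NAMES = {0: "minimal", 1: "limited", 2: "high", 3: "prohibited"}


def effective_risk(classifications: dict[str, str]) -> str:
    """Return the most restrictive designation across all applicable regimes.

    Raises KeyError for a label missing from SEVERITY, so that unmapped
    classifications go to a human reviewer instead of defaulting silently.
    """
    if not classifications:
        raise ValueError("classify the system under at least one regime first")
    worst = max(SEVERITY[(regime, label)] for regime, label in classifications.items())
    return SCALE_NAMES[worst]
```

A system rated "limited" under the EU AI Act but high-risk under Colorado's criteria comes out as "high", matching the rule above.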

Step 4: Implement Framework Alignment

Align with recognized frameworks to establish safe harbor protections:

- NIST AI Risk Management Framework: Widely recognized in the US

- ISO/IEC 42001: International certification standard

- EU AI Act compliance documentation: Required for EU market access

Framework alignment provides demonstrable evidence of reasonable care.

Step 5: Establish Governance Structure

Create a cross-functional AI governance team with representatives from:

- Legal and compliance

- IT and security

- HR (if using AI in employment)

- Product (if embedding AI in offerings)

- Privacy

This team owns policies, approves higher-risk deployments, and coordinates compliance efforts.

Step 6: Document Everything

Regulatory compliance requires auditable evidence:

- Policy acknowledgments with timestamps

- Training completion records

- Risk assessments for each AI system

- Impact assessments (where required)

- Incident reports and remediation actions
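One way to make acknowledgment and training records tamper-evident is to chain each record to the previous one with a SHA-256 hash. This is a technique chosen for illustration, not a description of how PolicyGuard or any particular product stores records, and the event fields are hypothetical. Hash chaining makes edits detectable, not impossible: anyone with full write access could rebuild the chain, so a real deployment would also need write-once storage or periodic external anchoring.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    """Hash a record body deterministically (sorted keys, UTF-8)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log: list[dict], event: dict) -> dict:
    """Append an event with a UTC timestamp and a hash linking it to the previous record."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    record["hash"] = _digest(record)  # computed before the "hash" key exists
    log.append(record)
    return record


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; an edited, removed, or reordered record breaks the chain."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {key: value for key, value in record.items() if key != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True
```

In use, you would call `append_record` whenever an employee acknowledges a policy or completes training, and run `verify_chain` before handing records to an auditor.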

PolicyGuard provides the infrastructure to capture and maintain this documentation automatically.

Related guide: AI Compliance Framework: How to Build One From Scratch

Common Compliance Gaps

Analysis of organizational readiness reveals consistent weaknesses:

No AI Inventory

More than half of organizations lack systematic inventories of AI systems in production or development. Without knowing what AI exists within the enterprise, risk classification and compliance planning are impossible.

Treating AI as Traditional Software

Many organizations apply standard software development and procurement practices to AI without recognizing unique regulatory requirements.

Missing Design History

Organizations cannot demonstrate that compliance considerations were integrated into AI system development from the design stage.

Vendor Assumption

Organizations assume their AI vendors handle compliance. The Colorado AI Act, EU AI Act, and most other regulations place obligations on deployers, not just developers. Using compliant vendor tools does not automatically make your usage compliant.

No Employee Training

AI literacy is now a compliance requirement under the EU AI Act (effective February 2025). Most organizations have not implemented training programs covering regulatory requirements and responsible use principles.

Compliance Timeline: What to Do When

Immediate (Already Required)

- EU AI Act prohibited AI practices compliance

- EU AI Act AI literacy training

- GDPR Article 22 compliance for automated decisions

- NYC Local Law 144 bias audits

- California Civil Rights Department AI employment regulations

Q1 2026

- Illinois Human Rights Act AI amendments (HB 3773) compliance

- Texas TRAIGA compliance

- California Frontier AI Act compliance

- California Training Data Transparency Act compliance

- California Companion Chatbots Act compliance

Q2 2026

- Colorado AI Act compliance (effective June 30, 2026)

- EU AI Act high-risk system preparation

Q3 2026

- EU AI Act high-risk AI system requirements (August 2, 2026)

- California AI Transparency Act compliance (August 2, 2026)

- EU AI regulatory sandboxes operational in all member states

Ongoing

- Monitor Digital Omnibus negotiations

- Track federal preemption developments

- Update policies as regulations evolve

- Conduct regular impact assessments
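To keep the calendar above actionable, a small script can list what falls due within a planning horizon. The dates are the ones stated in this guide and should be re-checked as Digital Omnibus and preemption developments unfold; the horizon default of 365 days is an arbitrary choice for the example.

```python
from datetime import date

# Deadlines as stated in this guide; re-check them as regulations evolve.
DEADLINES: list[tuple[date, str]] = [
    (date(2026, 6, 30), "Colorado AI Act takes effect"),
    (date(2026, 8, 2), "EU AI Act high-risk requirements (Annex III systems)"),
    (date(2026, 8, 2), "California AI Transparency Act"),
    (date(2027, 8, 2), "EU AI Act high-risk requirements (regulated products)"),
]


def upcoming(today: date, horizon_days: int = 365) -> list[tuple[int, str]]:
    """Return (days remaining, requirement) for deadlines within the horizon, soonest first."""
    result = []
    for due, requirement in sorted(DEADLINES):
        remaining = (due - today).days
        if 0 <= remaining <= horizon_days:
            result.append((remaining, requirement))
    return result


if __name__ == "__main__":
    for days, requirement in upcoming(date.today()):
        print(f"{days:>4} days  {requirement}")
```

Run from June 1, 2026 with a 90-day horizon, for example, it reports Colorado at 29 days and both August 2 deadlines at 62 days.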

How PolicyGuard Supports Regulatory Compliance

Compliance with multiple AI regulations requires systematic documentation, employee training with verifiable acknowledgments, and audit-ready evidence. PolicyGuard provides:

Policy templates aligned with regulations: 19+ expert-curated templates covering EU AI Act, Colorado AI Act, GDPR, and sector-specific requirements. No AI generation, no hallucinated regulatory references, no liability ambiguity.

Training and acknowledgment tracking: Assign policies by department, track completion rates, and maintain timestamped records of who acknowledged what and when.

Audit trail documentation: Every policy view, acknowledgment, and training completion is logged with immutable timestamps. When regulators ask for evidence, you have it.

Browser extension enforcement: Detect when employees access AI tools and present policy acknowledgments at the point of use. Prove that employees saw and accepted your AI policy before using AI.

Explore PolicyGuard Templates →

Frequently Asked Questions

Which AI regulations apply to my company?

Jurisdictional applicability depends on where your AI systems operate, whose data they process, and who is affected by their outputs. Most organizations are subject to multiple overlapping regulations. The EU AI Act applies if you place AI systems on the EU market or their outputs are used in the EU, regardless of company location. State laws apply if you serve residents of those states. Sector-specific regulations add additional requirements.

Do I need to comply with the EU AI Act if I’m a US company?

Yes, if your AI systems are used within the EU or produce outputs that affect EU residents. A US company using AI for loan approvals that serves European customers falls within scope even if the AI runs on US servers.

What’s the difference between the EU AI Act and GDPR?

GDPR governs personal data processing and includes specific provisions for automated decision-making (Article 22). The EU AI Act governs AI systems regardless of whether they process personal data. Organizations using AI that processes personal data must comply with both. The EU AI Act does not replace GDPR.

How do I achieve safe harbor under the Colorado AI Act?

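Align with a recognized risk management framework (NIST AI RMF or ISO/IEC 42001) and take measures to discover and cure violations, such as feedback processes, adversarial testing or red teaming, or internal review. Together these give you an affirmative defense in an attorney general enforcement action. Separately, complying with the Act’s own requirements creates a rebuttable presumption that you used reasonable care.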

What is algorithmic discrimination?

Under the Colorado AI Act, algorithmic discrimination is any condition in which the use of an AI system results in unlawful differential treatment or impact that disfavors individuals or groups based on protected characteristics (age, race, disability, etc.). Similar concepts appear in other regulations under different terms.

How do I track compliance across multiple regulations?

Start with an AI system inventory, map jurisdictional exposure, apply the most restrictive applicable classification, implement framework alignment, and maintain comprehensive documentation. PolicyGuard centralizes this documentation and provides audit-ready evidence across regulatory requirements.