This guide is part of our Complete Guide to AI Policy and Governance for Companies, the central resource for everything you need to know about AI governance in 2026.

Healthcare is adopting AI faster than almost any other industry. From clinical decision support to administrative automation, AI promises to improve patient outcomes while reducing costs.

But healthcare is also one of the most regulated industries on earth. HIPAA, FDA oversight, state medical board requirements, and now the EU AI Act create a complex compliance landscape that generic AI governance approaches cannot address.

This guide provides healthcare-specific AI governance guidance. We cover the unique risks, regulatory requirements, and practical steps for building an AI governance program that protects patients, satisfies regulators, and enables innovation.

TABLE OF CONTENTS:

- Why Healthcare AI Governance Is Different

- The Healthcare AI Landscape in 2026

- Regulatory Framework for Healthcare AI

- Healthcare-Specific AI Risks

- Building a Healthcare AI Governance Program

- AI Policies for Healthcare Organizations

- Clinical AI vs Administrative AI: Different Governance Needs

- HIPAA and AI: What You Need to Know

- FDA Regulation of AI Medical Devices

- EU AI Act Impact on Healthcare

- Training Healthcare Staff on AI

- Audit and Documentation Requirements

- How PolicyGuard Supports Healthcare AI Governance

- Frequently Asked Questions

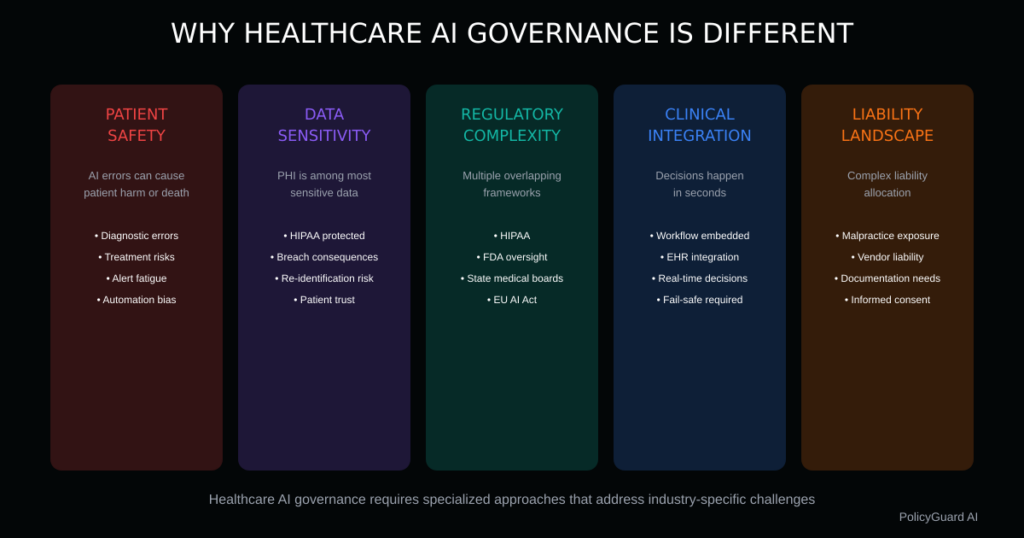

1. Why Healthcare AI Governance Is Different

AI governance in healthcare is not simply “regular AI governance plus HIPAA.” Healthcare presents unique challenges that require specialized approaches.

Patient Safety Stakes

In most industries, AI errors cause financial loss or inconvenience. In healthcare, AI errors can cause patient harm or death.

A clinical decision support system that recommends the wrong medication dosage, a diagnostic AI that misses cancer indicators, or an administrative AI that denies necessary care creates risks that extend far beyond regulatory penalties.

This elevates AI governance from a compliance exercise to a patient safety imperative.

Data Sensitivity

Healthcare data is among the most sensitive information that exists. Protected Health Information (PHI) includes not just medical records but any information that could identify a patient in connection with their health status.

When employees use AI tools in healthcare settings, the risk of PHI exposure is constant. A nurse asking ChatGPT to help draft a patient summary, a billing specialist pasting claim details into an AI tool, and a researcher using AI to analyze patient data each create a potential HIPAA violation.

Regulatory Complexity

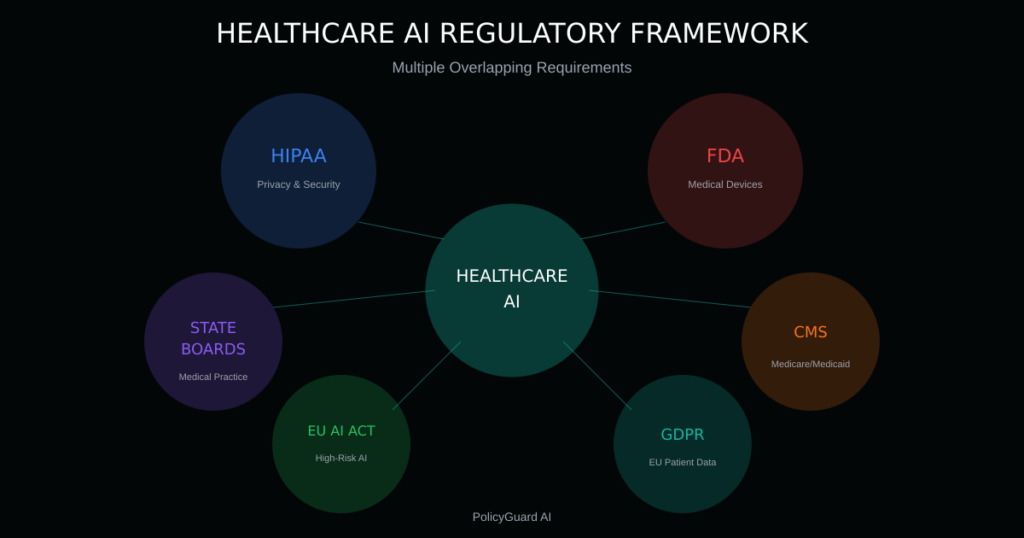

Healthcare AI sits at the intersection of multiple regulatory frameworks:

- HIPAA governs health information privacy and security

- FDA regulates AI systems that qualify as medical devices

- State medical boards oversee clinical practice standards

- CMS sets requirements for reimbursement

- EU AI Act classifies most healthcare AI as high-risk

- GDPR applies to EU patient data

No single regulatory body owns healthcare AI governance. Organizations must navigate overlapping requirements from multiple authorities.

Clinical Workflow Integration

Healthcare AI often integrates into clinical workflows where decisions happen in seconds. A physician reviewing AI-generated recommendations during a patient encounter does not have time to consult governance policies.

Governance must be embedded in systems and workflows, not just documented in handbooks.

Liability Landscape

When AI contributes to patient harm, liability questions multiply. Is the provider liable for following AI recommendations? Is the AI vendor liable for system errors? Is the organization liable for inadequate oversight?

Healthcare AI governance must address liability allocation, documentation requirements, and insurance considerations that other industries rarely face.

2. The Healthcare AI Landscape in 2026

AI adoption in healthcare has accelerated dramatically. Understanding where AI is being used helps identify governance priorities.

Clinical Applications

Diagnostic AI: AI systems that analyze medical images (radiology, pathology, dermatology), interpret lab results, or identify disease patterns. These systems directly influence clinical decisions.

Clinical Decision Support: AI that provides treatment recommendations, drug interaction alerts, or care pathway suggestions. Physicians rely on these systems but retain final decision authority.

Predictive Analytics: AI that predicts patient deterioration, readmission risk, or disease progression. Used for resource allocation and intervention timing.

Precision Medicine: AI that analyzes genetic data, biomarkers, and patient characteristics to personalize treatment plans.

Administrative Applications

Revenue Cycle Management: AI for coding, billing, claims processing, and denial management. Includes the controversial AI claim denial systems that have drawn regulatory scrutiny.

Scheduling and Resource Optimization: AI that manages appointment scheduling, staff allocation, and bed management.

Documentation: AI scribes, ambient clinical intelligence, and documentation assistants that reduce administrative burden on clinicians.

Prior Authorization: AI systems that process prior authorization requests, both for payers and providers.

Employee AI Tool Usage

Beyond purpose-built healthcare AI systems, healthcare employees use general-purpose AI tools:

ChatGPT and similar LLMs: Staff using AI for drafting communications, summarizing information, answering questions, and general productivity.

AI writing assistants: Tools like Grammarly, Jasper, or Copy.ai used for creating content.

AI coding assistants: IT staff using GitHub Copilot or similar tools for development.

This “shadow AI” usage in healthcare creates HIPAA risks that many organizations have not addressed.

3. Regulatory Framework for Healthcare AI

Healthcare AI governance must satisfy multiple regulatory frameworks simultaneously.

HIPAA (Health Insurance Portability and Accountability Act)

HIPAA does not mention AI specifically, but its requirements apply fully to AI systems that process PHI.

Privacy Rule implications:

- AI systems accessing PHI must have proper authorization

- Minimum necessary standard applies to AI data access

- Patient rights (access, amendment, accounting of disclosures) extend to AI-processed data

- Business Associate Agreements required for AI vendors handling PHI

Security Rule implications:

- AI systems must meet administrative, physical, and technical safeguard requirements

- Risk analysis must include AI systems

- Access controls must govern AI system access to PHI

- Audit controls must track AI system activity

Breach Notification Rule implications:

- PHI exposure through AI tools constitutes a potential breach

- Employees entering PHI into unauthorized AI tools may trigger breach notification requirements

FDA Regulation

The FDA regulates AI systems that meet the definition of medical devices. Not all healthcare AI is FDA-regulated, but clinical AI often is.

Software as a Medical Device (SaMD): AI systems intended for diagnosis, treatment, or prevention of disease may qualify as medical devices requiring FDA clearance or approval.

FDA AI/ML Action Plan: The FDA has established a framework for AI and machine learning in medical devices, addressing:

- Predetermined change control plans for adaptive AI

- Good machine learning practice (GMLP)

- Transparency and real-world performance monitoring

Clinical Decision Support exemptions: Some clinical decision support software is exempt from FDA regulation if it meets specific criteria, including displaying the basis for recommendations and allowing independent clinician review.

State Medical Board Requirements

State medical boards govern the practice of medicine, which increasingly involves AI:

- Standards for physician oversight of AI-assisted care

- Requirements for informed consent when AI is used in treatment

- Liability frameworks for AI-related adverse events

- Telemedicine regulations that may affect remote AI monitoring

CMS Requirements

Centers for Medicare and Medicaid Services requirements affect healthcare AI:

- Conditions of Participation may require human oversight of AI-driven decisions

- Recent rules address AI in prior authorization and claims processing

- Quality reporting programs may incorporate AI governance metrics

EU AI Act

For healthcare organizations with EU patients or operations, the EU AI Act has significant implications:

High-risk classification: Most healthcare AI qualifies as high-risk under the EU AI Act, including:

- AI systems intended for use as safety components in medical devices

- AI systems intended for determining access to health services

- AI systems intended for evaluating health risks or predicting emergencies

High-risk requirements:

- Risk management system

- Data governance and quality requirements

- Technical documentation

- Record-keeping and traceability

- Transparency and information to users

- Human oversight provisions

- Accuracy, robustness, and cybersecurity requirements

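Penalties: Up to €35 million or 7% of global annual turnover for prohibited practices, and up to €15 million or 3% for most other violations, including breaches of high-risk system obligations.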
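
As an arithmetic illustration of the headline cap: for most companies the ceiling is the fixed amount or the turnover percentage, whichever is higher. The sketch below assumes that rule; the function name and parameters are illustrative, and the turnover figures are made up.

```python
def max_fine_eur(global_annual_turnover_eur: int,
                 fixed_cap_eur: int = 35_000_000,
                 turnover_pct: int = 7) -> int:
    """Upper bound of a fine: the higher of the fixed cap and the turnover share.

    Integer arithmetic is used so the result is an exact euro amount.
    """
    turnover_share = global_annual_turnover_eur * turnover_pct // 100
    return max(fixed_cap_eur, turnover_share)


# Hypothetical organizations, not real figures.
large_system = max_fine_eur(2_000_000_000)   # 7% of turnover exceeds the fixed cap
small_clinic = max_fine_eur(100_000_000)     # fixed cap exceeds 7% of turnover
print(large_system, small_clinic)
```

Lower tiers for other categories of violation can be modeled by passing different values for `fixed_cap_eur` and `turnover_pct`.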

4. Healthcare-Specific AI Risks

Healthcare AI governance must address risks that are unique to or amplified in healthcare settings.

Patient Safety Risks

Diagnostic errors: AI systems may miss diagnoses, provide false positives, or make recommendations based on incomplete data. When clinicians over-rely on AI, these errors translate to patient harm.

Treatment recommendation errors: AI may recommend inappropriate treatments, incorrect dosages, or fail to account for patient-specific contraindications.

Alert fatigue: Excessive AI-generated alerts lead clinicians to ignore or override warnings, including legitimate safety alerts.

Automation bias: Clinicians may defer to AI recommendations even when their clinical judgment suggests otherwise, or fail to apply appropriate scrutiny to AI outputs.

Privacy and Security Risks

PHI exposure through AI tools: Employees entering patient information into general-purpose AI tools like ChatGPT expose PHI to unauthorized systems, creating HIPAA violations.

This practice appears to be widespread. Staff use AI to draft notes, summarize cases, answer clinical questions, and perform other tasks that may involve PHI.

Training data risks: AI systems trained on patient data raise questions about consent, de-identification, and secondary use of health information.

Inference risks: AI systems may infer sensitive health information from seemingly non-sensitive data, creating privacy concerns even with de-identified datasets.

Bias and Equity Risks

Algorithmic bias: AI systems may perform differently across patient populations, potentially exacerbating health disparities. Well-documented examples include:

- Pulse oximetry algorithms with reduced accuracy for darker skin tones

- Risk prediction algorithms that underestimate needs of Black patients

- Diagnostic AI with lower accuracy for underrepresented populations

Access disparities: AI-enhanced care may be available only to patients at well-resourced institutions, widening care quality gaps.

Language and literacy: AI systems designed for English speakers or health-literate populations may poorly serve diverse patient populations.

Operational Risks

System dependencies: Over-reliance on AI systems creates operational risks when systems fail or become unavailable.

Integration failures: AI systems that don’t properly integrate with EHRs or clinical workflows may create information gaps or conflicting recommendations.

Vendor risks: Dependence on AI vendors creates risks related to vendor viability, data practices, and system changes.

Liability Risks

Malpractice exposure: When AI contributes to adverse outcomes, liability allocation between clinicians, organizations, and vendors is often unclear.

Documentation requirements: Inadequate documentation of AI use, recommendations, and clinical decisions may create legal exposure.

Informed consent: Failure to disclose AI involvement in care may create consent-related liability.

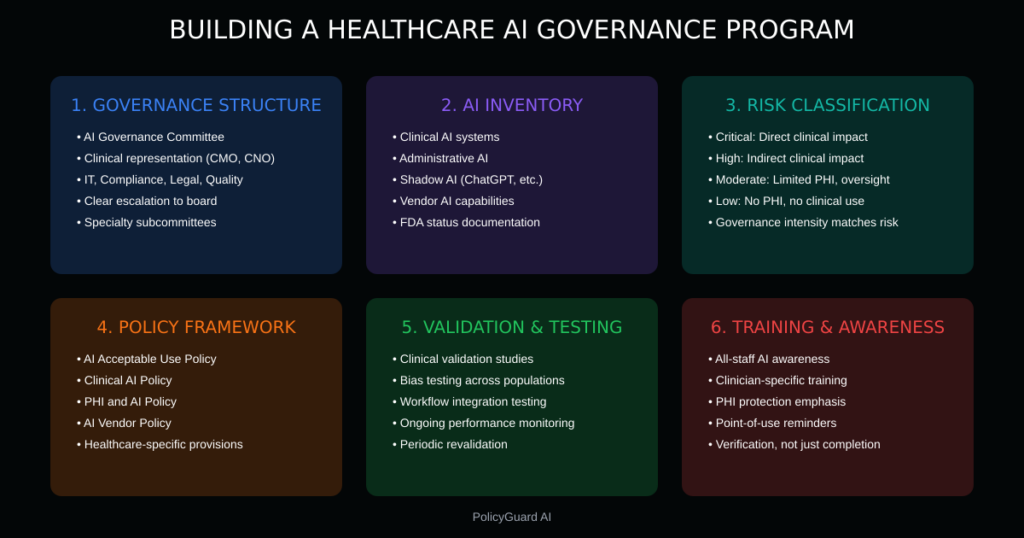

5. Building a Healthcare AI Governance Program

Effective healthcare AI governance requires a structured program that addresses the unique challenges of the healthcare environment.

Governance Structure

AI Governance Committee: Establish a cross-functional committee with representation from:

- Clinical leadership (CMO, CNO, medical staff)

- Information technology (CIO, CISO)

- Compliance and privacy (Privacy Officer, Compliance Officer)

- Legal

- Quality and patient safety

- Health information management

- Nursing informatics

- Representative clinical specialties

Reporting structure: The AI governance committee should report to executive leadership and have a clear escalation path to the board for significant risks.

Clinical subcommittees: For organizations with extensive clinical AI, specialty-specific subcommittees may be needed to evaluate AI systems in their clinical context.

AI Inventory

You cannot govern AI you don’t know exists. Create a comprehensive inventory:

Purpose-built healthcare AI:

- Clinical decision support systems

- Diagnostic AI tools

- Administrative AI systems

- AI features embedded in EHR and other clinical systems

General-purpose AI usage:

- Employee use of ChatGPT, Claude, and similar tools

- AI features in productivity software

- AI tools used by IT, marketing, HR, and other departments

Vendor AI:

- AI capabilities in contracted services

- AI used by business associates

- AI in medical devices and equipment

For each system, document:

- Purpose and intended use

- Data inputs (including any PHI)

- Outputs and how they’re used

- Users and access controls

- Vendor information

- FDA status (if applicable)

- Risk classification
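
One way to keep the inventory consistent is to store each system as a structured record. The sketch below is a minimal example, assuming a simple in-house register; the class name, field names, and sample values are all illustrative rather than a required schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI inventory; field names are illustrative."""
    name: str
    purpose: str                      # purpose and intended use
    data_inputs: list[str]            # e.g. ["vitals", "lab results"]
    handles_phi: bool                 # whether any input contains PHI
    outputs_used_for: str             # how outputs are used
    users: list[str]                  # roles with access
    vendor: Optional[str] = None      # None for in-house systems
    fda_status: Optional[str] = None  # None if not applicable
    risk_class: Optional[str] = None  # filled in during risk classification

    def missing_fields(self) -> list[str]:
        """Return the names of required inventory fields that are still empty."""
        gaps = []
        if not self.purpose.strip():
            gaps.append("purpose")
        if not self.data_inputs:
            gaps.append("data_inputs")
        if not self.users:
            gaps.append("users")
        if self.risk_class is None:
            gaps.append("risk_class")
        return gaps


# Synthetic example entry.
record = AISystemRecord(
    name="Sepsis early-warning model",
    purpose="Flag inpatients at risk of deterioration",
    data_inputs=["vitals", "lab results"],
    handles_phi=True,
    outputs_used_for="Nurse review queue",
    users=["nursing", "hospitalists"],
    vendor="ExampleVendor (hypothetical)",
)
print(record.missing_fields())
```

A completeness check like `missing_fields` gives the governance committee a quick list of systems that still need review before they can be classified.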

Risk Classification

Not all healthcare AI requires the same governance intensity. Classify systems by risk:

Critical risk (highest governance):

- AI that directly influences clinical decisions

- AI with potential for patient harm if it fails

- AI processing large volumes of PHI

- AI subject to FDA regulation

High risk:

- AI that indirectly influences clinical decisions

- AI processing PHI in ways that could cause significant harm if exposed

- AI making decisions about patient access to services

Moderate risk:

- Administrative AI with limited patient safety impact

- AI processing limited PHI with appropriate safeguards

- AI with human review of all outputs

Low risk:

- AI with no access to PHI

- AI with no clinical application

- AI with extensive human oversight and limited autonomy
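
The tiers above can be approximated as a rule that returns the highest tier whose criteria a system meets. This is a simplified sketch: the attribute names are assumptions, and some criteria (such as the degree of human oversight) are left out because they call for judgment rather than a yes/no flag.

```python
from dataclasses import dataclass


@dataclass
class RiskProfile:
    """Illustrative attributes; the names are assumptions, not a standard."""
    directly_influences_clinical_decisions: bool = False
    indirectly_influences_clinical_decisions: bool = False
    patient_harm_if_fails: bool = False
    fda_regulated: bool = False
    phi_volume: str = "none"                  # "none", "limited", or "large"
    phi_exposure_harm_significant: bool = False
    decides_access_to_services: bool = False


def classify(profile: RiskProfile) -> str:
    """Return the highest risk tier whose criteria the system meets."""
    if (profile.directly_influences_clinical_decisions
            or profile.patient_harm_if_fails
            or profile.fda_regulated
            or profile.phi_volume == "large"):
        return "critical"
    if (profile.indirectly_influences_clinical_decisions
            or profile.phi_exposure_harm_significant
            or profile.decides_access_to_services):
        return "high"
    if profile.phi_volume == "limited":
        return "moderate"
    return "low"


print(classify(RiskProfile(fda_regulated=True)))
print(classify(RiskProfile(decides_access_to_services=True)))
print(classify(RiskProfile(phi_volume="limited")))
print(classify(RiskProfile()))
```

Checking tiers from the top down means a system that meets any critical criterion is never downgraded because it also meets a lower-tier one.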

Policy Framework

Develop healthcare-specific AI policies:

AI Acceptable Use Policy: Define permitted and prohibited AI uses, with healthcare-specific provisions for PHI and clinical applications.

Clinical AI Policy: Specific requirements for AI in clinical settings, including validation, oversight, and documentation requirements.

PHI and AI Policy: Explicit prohibitions and controls for AI systems accessing or processing PHI.

AI Vendor Policy: Requirements for AI vendors, including BAA provisions, security requirements, and performance monitoring.

For policy templates and guidance, see our AI Acceptable Use Policy Template.

Validation and Testing

Healthcare AI requires rigorous validation:

Pre-deployment validation:

- Performance testing on representative patient populations

- Bias assessment across demographic groups

- Integration testing with clinical workflows

- User acceptance testing with clinical staff
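
A bias assessment often starts by comparing one metric across demographic groups. The sketch below computes sensitivity (the share of true positive cases the model catches) per group and the largest gap between groups. The function names and the data are illustrative, and the records are synthetic; which metric and which gap is acceptable are decisions for your clinical and quality teams.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """records: iterable of (group, y_true, y_pred) with 0/1 labels.

    Returns {group: sensitivity} for groups with at least one positive case.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


def max_gap(rates):
    """Largest difference in a metric between any two groups."""
    values = list(rates.values())
    return max(values) - min(values)


# Synthetic example data, not real patient results.
sample = [
    ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 0), ("group_a", 1, 1),
    ("group_b", 1, 1), ("group_b", 1, 0), ("group_b", 1, 0), ("group_b", 1, 1),
    ("group_a", 0, 0), ("group_b", 0, 1),
]
rates = sensitivity_by_group(sample)
print(rates, max_gap(rates))
```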

Ongoing monitoring:

- Performance monitoring against established metrics

- Drift detection for AI model performance

- Adverse event tracking related to AI use

- User feedback collection
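
Drift detection can be as simple as comparing recent performance with the figure measured at validation. The sketch below flags drift when the average of recent monitoring windows falls more than a set tolerance below the baseline. The function name, the 0.05 tolerance, and the minimum of three windows are assumptions chosen for illustration, not recommended thresholds.

```python
def detect_performance_drift(baseline, recent_scores, tolerance=0.05, min_samples=3):
    """Flag drift when the mean of recent scores falls below baseline - tolerance.

    baseline: metric measured at validation (for example accuracy).
    recent_scores: the same metric from recent monitoring windows.
    Returns (drifted, recent_mean); both are None if there is too little data.
    """
    if len(recent_scores) < min_samples:
        return None, None
    recent_mean = sum(recent_scores) / len(recent_scores)
    return recent_mean < baseline - tolerance, recent_mean


# Synthetic monitoring data.
drifted, recent_mean = detect_performance_drift(0.90, [0.88, 0.84, 0.80])
print(drifted, round(recent_mean, 2))
```

A flagged result would typically trigger the revalidation process rather than an automatic change to the system.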

Periodic revalidation:

- Scheduled comprehensive reviews

- Revalidation after significant updates

- Revalidation when patient populations change

Training and Awareness

Healthcare staff need AI-specific training:

General AI awareness: All staff should understand organizational AI policies, PHI risks with AI tools, and how to report concerns.

Role-specific training:

- Clinicians: Appropriate use of clinical AI, limitations, override documentation

- Administrative staff: PHI protection when using AI tools

- IT staff: AI security and privacy requirements

- Leadership: AI governance responsibilities

Ongoing education: AI capabilities and risks evolve rapidly. Regular updates keep staff current.

6. AI Policies for Healthcare Organizations

Healthcare organizations need AI policies that address industry-specific requirements.

Core Policy Elements

Scope: Clearly define which AI systems and uses the policy covers. Include:

- Clinical AI systems

- Administrative AI systems

- General-purpose AI tools (ChatGPT, etc.)

- AI features embedded in other software

- Employee personal use of AI for work tasks

PHI prohibitions: Explicitly prohibit entering PHI into unauthorized AI systems:

“Employees shall not enter Protected Health Information (PHI) into any AI system that has not been approved by the organization and covered by a Business Associate Agreement. This includes but is not limited to ChatGPT, Claude, Gemini (formerly Bard), and similar publicly available AI tools. This prohibition applies regardless of whether the employee believes the information has been de-identified.”

Clinical AI requirements: Establish requirements for AI in clinical settings:

“AI-generated clinical recommendations shall be reviewed by a qualified clinician before implementation. Clinicians are responsible for applying clinical judgment to AI recommendations and may override AI recommendations when clinically appropriate. All overrides of AI recommendations shall be documented in the medical record with clinical rationale.”

Approval requirements: Define the process for approving new AI systems:

“All AI systems that will access PHI or be used in clinical settings must be reviewed and approved by the AI Governance Committee prior to deployment. Emergency approvals may be granted by [designated authority] with retrospective committee review.”

Documentation requirements: Specify what must be documented:

“Use of AI in clinical decision-making shall be documented in the patient’s medical record, including the AI system used, the recommendation provided, and the clinical decision made. When clinicians override AI recommendations, documentation shall include the clinical rationale for the override.”

Sample Policy Provisions

Shadow AI prohibition:

“The use of unauthorized AI tools for any work-related purpose involving patient information, clinical decisions, or organizational data is prohibited. Employees who become aware of unauthorized AI use shall report it to [designated contact] without fear of retaliation.”

Vendor AI requirements:

“AI systems provided by vendors that access PHI must be covered by a Business Associate Agreement that specifically addresses AI-related risks. Vendors must provide documentation of AI system validation, bias testing, and performance monitoring capabilities.”

Incident reporting:

“Any suspected AI-related adverse event, near-miss, or PHI exposure shall be reported immediately through the organization’s incident reporting system. AI-related incidents shall be reviewed by the AI Governance Committee in addition to standard incident review processes.”

7. Clinical AI vs Administrative AI: Different Governance Needs

Healthcare AI falls into two broad categories with different governance requirements.

Clinical AI Governance

Clinical AI directly affects patient care and requires the most rigorous governance.

Validation requirements:

- Clinical validation with appropriate patient populations

- Peer-reviewed evidence of safety and efficacy

- Comparison with standard of care

- Prospective monitoring of outcomes

Oversight requirements:

- Physician oversight of AI recommendations

- Clear accountability for clinical decisions

- Documentation of AI role in care

- Override capability and documentation

Integration requirements:

- EHR integration for context and documentation

- Clinical workflow alignment

- Alert management to prevent fatigue

- Fail-safe mechanisms when AI is unavailable

Regulatory considerations:

- FDA status determination

- State medical board compliance

- Informed consent requirements

- Malpractice insurance coverage

Administrative AI Governance

Administrative AI supports operations but typically does not directly affect clinical decisions.

Risk focus:

- PHI protection is the primary concern

- Accuracy affects billing, access, operations

- Bias may affect access to services

- Efficiency gains must not compromise quality

Oversight requirements:

- Process owner accountability

- Quality assurance sampling

- Exception handling procedures

- Performance monitoring

Regulatory considerations:

- HIPAA compliance for PHI access

- CMS requirements for billing AI

- State insurance regulations for claims AI

- Anti-discrimination requirements

The Gray Zone

Some AI crosses the clinical/administrative boundary:

Prior authorization AI: Decisions about treatment authorization affect clinical care but are made in administrative processes.

Care management AI: Population health and care management tools inform clinical decisions but operate outside direct care delivery.

Documentation AI: AI that generates clinical documentation affects the medical record but may not directly influence treatment decisions.

These systems may require hybrid governance approaches combining elements of clinical and administrative oversight.

8. HIPAA and AI: What You Need to Know

HIPAA compliance is central to healthcare AI governance.

PHI in AI Systems

What constitutes PHI in an AI context: PHI includes any individually identifiable health information. In AI contexts, this includes:

- Patient data entered into AI systems

- AI prompts containing patient information

- AI outputs derived from patient data

- Metadata that could identify patients

Minimum necessary standard: AI systems should access only the minimum PHI necessary for their function. Systems requiring broad data access need strong justification.

De-identification considerations: HIPAA provides two methods for de-identification (Expert Determination and Safe Harbor). However, AI systems may be able to re-identify “de-identified” data, raising questions about the adequacy of traditional de-identification for AI use cases.

Business Associate Requirements

When BAAs are required: AI vendors that create, receive, maintain, or transmit PHI on behalf of a covered entity are business associates requiring BAAs.

This includes:

- Cloud-based AI services processing PHI

- AI vendors with access to patient data for training or improvement

- AI systems hosted by third parties

BAA provisions for AI: BAAs for AI vendors should specifically address:

- How PHI will be used in AI training

- Data retention and deletion

- Subcontractor AI use

- Breach notification for AI-related incidents

- Performance monitoring and audit rights

Employee Use of AI Tools

The shadow AI problem: Employees using ChatGPT and similar tools for work tasks may enter PHI, creating HIPAA violations. This is widespread and often undetected.

Healthcare workers commonly turn to general-purpose AI tools for:

- Drafting clinical notes and summaries

- Answering clinical questions

- Creating patient communications

- Summarizing medical literature

- Administrative tasks involving patient information

Enforcement approach: Address shadow AI through:

- Clear policies prohibiting PHI in unauthorized AI tools

- Training on what constitutes PHI and why it matters

- Technical controls where possible

- Point-of-use reminders when accessing AI tools

- Monitoring and enforcement
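
As one example of a technical control, a prompt can be screened for obvious identifiers before it leaves the organization. The sketch below is deliberately limited: the patterns, including the assumed "MRN" format, are illustrative, and no pattern list can catch names, free-text histories, or other PHI. It can support a point-of-use reminder but cannot replace policy or training.

```python
import re

# Illustrative patterns only. Most PHI (names, narrative history, rare
# diagnoses) will not match any of these, so a clean result proves nothing.
PHI_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "mrn": re.compile(r"\bMRN[:#\s]*\d{6,10}\b", re.IGNORECASE),
    "date_of_birth": re.compile(
        r"\b(?:DOB|date of birth)[:\s]*\d{1,2}/\d{1,2}/\d{2,4}\b", re.IGNORECASE
    ),
    "phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def screen_prompt(text):
    """Return the sorted names of the patterns found in the text."""
    return sorted(name for name, pattern in PHI_PATTERNS.items() if pattern.search(text))


# Synthetic prompts.
flagged = screen_prompt("Summarize visit for MRN: 00123456, DOB 04/12/1961")
clean = screen_prompt("Draft a reminder about flu season clinic hours")
print(flagged, clean)
```

A non-empty result could be used to show a reminder or block the request, depending on how strict the control needs to be.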

For more on shadow AI risks, see Shadow AI: The Hidden Risk in Every Company.

Breach Considerations

When AI use triggers breach notification: If PHI is entered into an unauthorized AI system, it may constitute a breach requiring notification. Analysis should consider:

- Was the AI system authorized to receive PHI?

- Was the PHI encrypted or otherwise protected?

- What is the probability that the PHI was compromised?

- Is there documentation of what information was exposed?

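Risk assessment: Under the Breach Notification Rule, an impermissible disclosure of PHI is presumed to be a breach unless a risk assessment demonstrates a low probability that the PHI was compromised. For AI-related incidents, consider: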

- The nature and extent of PHI involved

- Who accessed the PHI (the AI vendor, other users, etc.)

- Whether PHI was actually acquired or viewed

- The extent to which risk has been mitigated
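
The four factors above can be recorded in a structured form so every incident is assessed the same way. The sketch below is a documentation aid under stated assumptions: the field names, the low/medium/high scale, and the rule that every factor must be rated low are illustrative choices. The actual determination remains a judgment for your privacy officer and counsel.

```python
from dataclasses import dataclass, asdict

LEVELS = ("low", "medium", "high")


@dataclass
class BreachRiskAssessment:
    """Ratings for the four risk factors; names and scale are illustrative."""
    nature_and_extent_of_phi: str        # identifiers involved, re-identification likelihood
    unauthorized_recipient: str          # who received or could access the PHI
    phi_acquired_or_viewed: str          # evidence the PHI was actually acquired or viewed
    residual_risk_after_mitigation: str  # "low" means the risk was well mitigated

    def __post_init__(self):
        # Reject ratings outside the agreed scale so records stay comparable.
        for name, value in asdict(self).items():
            if value not in LEVELS:
                raise ValueError(f"{name} must be one of {LEVELS}, got {value!r}")

    def low_probability_of_compromise(self) -> bool:
        """Conservative illustrative rule: every factor must be rated low."""
        return all(value == "low" for value in asdict(self).values())


# Synthetic incident: a limited data set pasted into an unapproved tool.
incident = BreachRiskAssessment("medium", "high", "low", "medium")
print(incident.low_probability_of_compromise())
```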

9. FDA Regulation of AI Medical Devices

AI systems that qualify as medical devices face FDA oversight.

When AI Is a Medical Device

Software as a Medical Device (SaMD): Software intended for medical purposes without being part of a hardware medical device. AI systems may qualify as SaMD if they are intended for:

- Diagnosis of disease or conditions

- Treatment, mitigation, or prevention of disease

- Affecting the structure or function of the body

Exempt clinical decision support: Some clinical decision support software is exempt from FDA regulation if it:

- Is not intended to acquire, process, or analyze medical images or signals

- Is intended to display, analyze, or print information about a patient

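- Is intended to support or provide recommendations to a healthcare professional about prevention, diagnosis, or treatment

- Enables the healthcare professional to independently review the basis for the recommendations, rather than rely primarily on the software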

Software must meet all four criteria to qualify for exemption.
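
Because all four criteria must hold, the screening logic is a simple conjunction. The sketch below is a first-pass triage aid with illustrative names; a real FDA status determination depends on the software's intended use and should be confirmed with regulatory counsel.

```python
from dataclasses import dataclass


@dataclass
class CDSProfile:
    """Answers to the four exemption questions; field names are illustrative."""
    analyzes_images_or_signals: bool
    displays_or_analyzes_patient_information: bool
    supports_clinical_decision_making: bool
    allows_independent_review: bool


def may_qualify_for_cds_exemption(p: CDSProfile) -> bool:
    """True only if all four criteria are met; failing any one disqualifies."""
    return (not p.analyzes_images_or_signals
            and p.displays_or_analyzes_patient_information
            and p.supports_clinical_decision_making
            and p.allows_independent_review)


# Hypothetical systems.
guideline_tool = CDSProfile(False, True, True, True)
imaging_triage = CDSProfile(True, True, True, True)
print(may_qualify_for_cds_exemption(guideline_tool))
print(may_qualify_for_cds_exemption(imaging_triage))
```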

FDA Requirements for AI Medical Devices

Premarket review: Depending on risk classification, AI medical devices may require:

- 510(k) clearance (substantial equivalence to predicate device)

- De novo classification (new device type with low to moderate risk)

- Premarket approval (PMA) for high-risk devices

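Quality system requirements: AI medical device manufacturers must comply with FDA quality system requirements under 21 CFR Part 820 for design controls, production, and maintenance. As of February 2026, the Quality Management System Regulation (QMSR), which incorporates ISO 13485:2016, replaces the earlier Quality System Regulation (QSR).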

Predetermined change control plans: For AI systems that learn and adapt, FDA has established a framework for predetermined change control plans that allow certain modifications without new premarket review.

Real-world performance monitoring: FDA expects manufacturers to monitor AI device performance in real-world use and report problems.

Organizational Responsibilities

Device procurement: Verify FDA status of AI systems before procurement:

- Is the device FDA-cleared or approved?

- What is the device’s intended use?

- What are the device’s limitations and contraindications?

Use within indications: Ensure AI devices are used within their FDA-cleared indications. Off-label use may affect liability and regulatory status.

Adverse event reporting: Establish processes for reporting AI device-related adverse events to the FDA as required.

Maintenance and updates: Ensure AI devices are maintained according to manufacturer requirements and that updates are properly validated.

10. EU AI Act Impact on Healthcare

For healthcare organizations serving EU patients or operating in Europe, the EU AI Act creates significant new requirements.

Healthcare AI Classification

High-risk healthcare AI: Most healthcare AI qualifies as high-risk under the EU AI Act:

- AI intended as safety components of medical devices

- AI intended for determining access to health services

- AI for evaluating reliability of evidence in investigations (a law enforcement category that seldom applies to care delivery)

- AI for predicting health risks or emergencies

Prohibited AI: Some AI applications are prohibited entirely:

- Social scoring for healthcare access

- Real-time remote biometric identification in publicly accessible spaces for law enforcement purposes (with limited exceptions)

- Subliminal manipulation affecting health decisions

High-Risk Requirements

Healthcare organizations deploying high-risk AI must ensure systems meet:

Risk management: Establish and maintain a risk management system throughout the AI system lifecycle.

Data governance: Ensure training, validation, and testing data meets quality criteria and is relevant, sufficiently representative, and, to the best extent possible, free of errors and complete.

Technical documentation: Maintain documentation demonstrating conformity with EU AI Act requirements.

Record-keeping: Maintain logs of AI system operation for traceability.

Transparency: Provide users with information to understand AI outputs and use the system appropriately.

Human oversight: Design systems for effective human oversight, including ability to override or reverse AI decisions.

Accuracy and robustness: Ensure appropriate levels of accuracy, robustness, and cybersecurity.

Compliance Timeline

The EU AI Act entered into force in August 2024 with phased implementation:

- Prohibited practices: February 2025

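- General-purpose AI model obligations and governance: August 2025

- High-risk system requirements: August 2026 for most systems, and August 2027 for AI embedded in regulated products such as medical devices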
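
A compliance tracker can encode these milestones as dates and report which ones already apply. The sketch below covers only the three milestones listed above; the dictionary keys and function name are illustrative, and the day of the month (the 2nd) comes from the regulation's application dates rather than from this guide.

```python
from datetime import date

# Application dates for the three phases listed above.
MILESTONES = {
    "prohibited_practices": date(2025, 2, 2),
    "governance": date(2025, 8, 2),
    "high_risk_requirements": date(2026, 8, 2),
}


def milestones_in_effect(today: date) -> list[str]:
    """Return the milestones whose application date has been reached."""
    return [name for name, start in MILESTONES.items() if today >= start]


print(milestones_in_effect(date(2025, 9, 1)))
```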

Healthcare organizations should assess their AI systems against EU AI Act requirements now and implement necessary changes before deadlines.

11. Training Healthcare Staff on AI

Effective training is essential for healthcare AI governance.

Training Program Components

All-staff training: Every employee should receive basic AI awareness training covering:

- What AI is and how it’s used in healthcare

- Organizational AI policies

- PHI risks with AI tools

- How to identify and report AI concerns

Role-specific training: Tailored training for different roles:

Clinicians:

- Appropriate use of clinical AI systems

- Understanding AI limitations and uncertainty

- When and how to override AI recommendations

- Documentation requirements

- Informed consent considerations

Administrative staff:

- PHI protection when using AI tools

- Approved vs. prohibited AI uses

- Recognizing AI-generated content

- Reporting unauthorized AI use

IT staff:

- AI security and privacy requirements

- AI system monitoring and maintenance

- Vendor management for AI systems

- Incident response for AI-related events

Leadership:

- AI governance responsibilities

- Risk oversight

- Resource allocation for AI governance

- Regulatory compliance accountability

Training Delivery

Point-of-use reminders: Training that happens only during onboarding is forgotten. Reinforce key messages at point of use:

- Reminders when accessing AI tools

- Prompts before entering data into AI systems

- Just-in-time guidance for clinical AI use

Scenario-based learning: Use realistic scenarios to help staff apply policies:

- “A colleague asks you to run a patient list through ChatGPT to identify high-risk patients. What do you do?”

- “The AI diagnostic system recommends a diagnosis you disagree with. How do you proceed?”

Verification: Training should include assessment to verify comprehension, not just completion. Staff who cannot demonstrate understanding should receive additional training.

Ongoing Education

AI capabilities and risks evolve rapidly. Establish:

- Regular training updates (at least annually)

- Communications about new AI systems or policy changes

- Case studies of AI incidents (anonymized) for learning

- Forums for staff to ask questions and share concerns

12. Audit and Documentation Requirements

Healthcare AI governance requires robust documentation and audit capabilities.

What to Document

AI system documentation:

- System purpose and intended use

- Data inputs and outputs

- Validation and testing results

- User training records

- Configuration and settings

- Updates and changes

Usage documentation:

- Who accessed the system

- What queries or inputs were provided

- What outputs were generated

- What actions were taken based on outputs

Clinical documentation:

- AI involvement in clinical decisions

- AI recommendations

- Clinician review and decision

- Override documentation with rationale

Incident documentation:

- AI-related adverse events

- Near-misses

- PHI exposures

- System failures

- Corrective actions

Audit Trail Requirements

Maintain audit trails that allow you to answer:

- Which AI systems were used by which users?

- What patient data was accessed by AI systems?

- What recommendations did AI systems make?

- What decisions were made based on AI recommendations?

- Were there any overrides, and why?

For guidance on audit trails, see AI Audit Trail: What It Is and Why Regulators Want One.
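The questions above suggest a minimum set of fields for each audit record. The sketch below is one possible shape, not a prescribed schema: the class name, field names, and helper functions are assumptions for illustration. It stores an internal patient reference instead of raw PHI and rejects any override that lacks a rationale.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    """One AI interaction. All field names are illustrative assumptions."""
    timestamp: datetime
    user_id: str
    ai_system: str
    patient_ref: Optional[str]  # internal reference; None if no patient data was accessed
    recommendation: str
    clinician_decision: str
    overridden: bool = False
    override_rationale: Optional[str] = None

    def __post_init__(self) -> None:
        # An override without a documented reason cannot answer "and why?"
        if self.overridden and not (self.override_rationale or "").strip():
            raise ValueError("override_rationale is required when overridden is True")


def systems_by_user(events):
    """Which AI systems were used by which users?"""
    usage = {}
    for e in events:
        usage.setdefault(e.user_id, set()).add(e.ai_system)
    return usage


def overrides(events):
    """Were there any overrides, and why?"""
    return [
        (e.user_id, e.ai_system, e.override_rationale)
        for e in events
        if e.overridden
    ]
```

Making the record immutable (`frozen=True`) reflects the general expectation that audit entries are appended, not edited. A production system would enforce that at the storage layer as well.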

Regulatory Audit Preparation

Be prepared for audits from:

- HHS Office for Civil Rights (HIPAA)

- FDA (medical device AI)

- State health departments

- CMS (Medicare/Medicaid Conditions of Participation)

- Joint Commission and other accreditors

- EU AI Act authorities (if applicable)

Maintain documentation that demonstrates:

- Governance structure and accountability

- Policy existence and communication

- Training completion and comprehension

- System validation and monitoring

- Incident response and corrective action
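Evidence of training completion and comprehension is easier to produce if it can be summarized on demand. The sketch below assumes training records are available as simple dictionaries. The keys (`department`, `completed`, `score`) and the 80% pass mark are hypothetical and should be replaced with whatever your learning system and policy actually use.

```python
from collections import defaultdict

PASS_MARK = 0.8  # assumed threshold; set this according to your own policy


def training_summary(records, pass_mark=PASS_MARK):
    """Summarize completion and comprehension per department.

    Each record is a dict with hypothetical keys: "department",
    "completed" (bool), and "score" (0.0 to 1.0, or None if not assessed).
    """
    counts = defaultdict(lambda: {"staff": 0, "completed": 0, "passed": 0})
    for r in records:
        dept = counts[r["department"]]
        dept["staff"] += 1
        if r["completed"]:
            dept["completed"] += 1
            # Comprehension counts only for staff who were assessed and passed.
            if r["score"] is not None and r["score"] >= pass_mark:
                dept["passed"] += 1
    return {
        name: {
            "completion_rate": c["completed"] / c["staff"],
            "comprehension_rate": c["passed"] / c["staff"],
        }
        for name, c in counts.items()
    }
```

Reporting the two rates separately keeps the distinction between finishing a module and demonstrating understanding visible to auditors and leadership.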

13. How PolicyGuard Supports Healthcare AI Governance

PolicyGuard provides infrastructure for healthcare AI governance, addressing the unique challenges of the healthcare environment.

Healthcare-Specific Capabilities

Healthcare AI policy templates: Templates that address healthcare requirements:

- PHI-specific prohibitions and controls

- Clinical AI governance provisions

- HIPAA-aligned requirements

- Documentation standards

Point-of-use enforcement: When healthcare employees access AI tools like ChatGPT, PolicyGuard displays policy reminders and requires acknowledgment. This creates:

- Awareness at the moment of use

- Documentation that policies were communicated

- Deterrent against PHI entry into unauthorized systems

Training with verification: Built-in training modules specific to healthcare AI:

- PHI and AI risks

- HIPAA compliance for AI use

- Appropriate use of clinical AI

- Verification through quizzes, not just completion

Audit trail for compliance: Automatic logging creates documentation for:

- HIPAA compliance audits

- Accreditation surveys

- Internal compliance monitoring

- Incident investigation

One-click compliance reports: Generate reports showing:

- Policy acknowledgment rates by department

- Training completion status

- AI tool access patterns

- Compliance gaps requiring attention

Healthcare Deployment

PolicyGuard deploys quickly in healthcare environments:

- Browser extension deployment via standard MDM

- No PHI stored in PolicyGuard systems

- Integrates with existing IT infrastructure

- Scales from small practices to health systems

Addressing Shadow AI

PolicyGuard directly addresses the shadow AI problem in healthcare:

- Visibility into which AI tools employees access

- Policy reminders before AI tool use

- Documentation of awareness for compliance

- Data for identifying training needs

14. Frequently Asked Questions

What is AI governance in healthcare? AI governance in healthcare is the framework of policies, processes, and controls that ensure AI systems are used safely, ethically, and in compliance with healthcare regulations. It addresses unique healthcare concerns including patient safety, PHI protection, clinical workflow integration, and regulatory requirements from HIPAA, FDA, and other authorities.

Is ChatGPT HIPAA compliant? The standard consumer version of ChatGPT is not covered by a business associate agreement (BAA) and should not be used with PHI. OpenAI does offer enterprise versions with BAA options, but organizations must carefully evaluate whether these meet their HIPAA obligations. Regardless of vendor claims, entering PHI into AI tools creates compliance risks that must be managed through policy, training, and technical controls.

What healthcare AI is regulated by the FDA? AI systems that meet the definition of medical devices are regulated by the FDA. This includes AI intended for diagnosis, treatment, or prevention of disease. Some clinical decision support software falls outside the device definition if it meets specific statutory criteria, including displaying the basis for recommendations and allowing independent clinician review. Organizations should verify the FDA status of clinical AI systems before deployment.

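How does the EU AI Act affect healthcare? The EU AI Act treats much clinical AI as high-risk, in particular AI that is, or is a safety component of, a medical device subject to third-party conformity assessment, along with listed uses such as emergency patient triage. High-risk systems require risk management, data governance, technical documentation, transparency, human oversight, and demonstrated accuracy. Healthcare organizations serving EU patients or operating in Europe must assess their AI systems against these requirements and implement necessary changes before the applicable deadline. Under the Act as adopted, that is August 2026 for the high-risk uses listed in Annex III and August 2027 for AI covered by product legislation such as the medical device regulations.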

How do I prevent employees from putting PHI into ChatGPT? Prevention requires multiple layers: clear policies prohibiting PHI entry into unauthorized AI tools, training on what constitutes PHI and why protection matters, technical controls where possible, point-of-use reminders when accessing AI tools, and monitoring for policy violations. PolicyGuard provides point-of-use enforcement that reminds employees of policies when they access AI tools.

What should be documented when using clinical AI? Clinical AI use should be documented in the medical record, including: the AI system used, the recommendation or output provided, the clinician’s review and assessment, the clinical decision made, and rationale for any overrides of AI recommendations. This documentation protects against liability and supports quality improvement.

Do I need a BAA with AI vendors? If an AI vendor creates, receives, maintains, or transmits PHI on behalf of your organization, the vendor is a business associate and a BAA is required. This includes cloud-based AI services processing patient data, AI vendors with access to data for training or improvement, and AI systems hosted by third parties. The BAA should specifically address AI-related risks.

Related Resources

- The Complete Guide to AI Policy and Governance for Companies — The pillar guide covering all aspects of AI governance

- AI Acceptable Use Policy Template: A Complete Guide — Policy development guidance

- Shadow AI: The Hidden Risk in Every Company — Managing unauthorized AI tool usage

- AI Audit Trail: What It Is and Why Regulators Want One — Audit documentation requirements

- AI Compliance Framework: Step-by-Step Guide — Building compliance infrastructure

- EU AI Act Compliance: What Companies Need to Do — European regulatory requirements