This guide is part of our Complete Guide to AI Policy and Governance for Companies, the central resource for everything you need to know about AI compliance in 2026.

An AI acceptable use policy template is a pre-written document that defines how employees in your organization are allowed to use AI tools like ChatGPT, Claude, Gemini, Copilot, and Midjourney at work. It covers what data can and cannot be shared, which tools are approved, disclosure requirements, and what happens when someone violates the policy. A good template saves you weeks of writing from scratch and ensures you do not miss critical sections that regulators expect.

This guide covers everything you need to know: what an AI acceptable use policy is, why every company needs one, what it must include, common mistakes to avoid, how to enforce it, and how to choose between AI-generated policies and expert-curated templates.

TABLE OF CONTENTS:

- What Is an AI Acceptable Use Policy?

- Why Every Company Needs One in 2026

- 12 Sections Your AI Policy Template Must Include

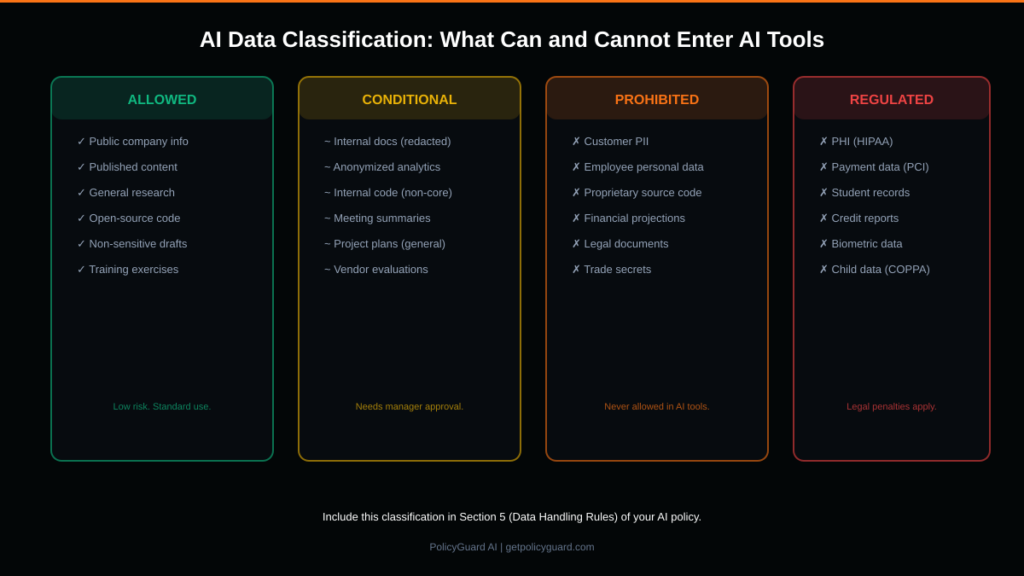

- Data Classification: What Can and Cannot Enter AI Tools

- 7 Common Mistakes That Make AI Policies Useless

- How to Enforce Your Policy (Not Just Publish It)

- AI Policy Generator vs Expert-Curated Templates

- How to Choose the Right Template for Your Company

- Getting Started Today

- Frequently Asked Questions

1. What Is an AI Acceptable Use Policy?

An AI acceptable use policy is a formal document that establishes the rules, boundaries, and expectations for how employees use artificial intelligence tools in the workplace. It is the foundational governance document that every other AI compliance activity builds on.

Think of it like your company’s internet acceptable use policy, but specifically for AI tools. It answers the questions employees actually have: “Can I use ChatGPT for work?” “What about customer data?” “Do I need to tell anyone I used AI?” “What happens if I accidentally paste something I shouldn’t?”

Without this document, employees are making their own individual decisions about AI usage with no guidelines, no guardrails, and no consistency. Some are being overly cautious and not using AI at all (missing productivity gains). Others are pasting confidential data into every tool they can find (creating compliance risk). A policy creates a shared understanding.

For regulatory purposes, GDPR, the EU AI Act, SOC 2, HIPAA, the NIST AI RMF, and ISO/IEC 42001 all require or strongly encourage documented controls over how data and AI systems are used, and an AI acceptable use policy is the most direct way to document them. But having the policy is only half the equation: you also need to prove that employees have been trained on it, have acknowledged it, and are following it. That is where enforcement comes in, which we cover in Section 6.

2. Why Every Company Needs One in 2026

If you have employees, they are using AI tools. The data is unambiguous: 80% of employees use AI tools their employer has not approved (UpGuard, 2025), and 59% actively hide their AI usage from their employer (Cybernews, 2025).

This is not a future problem. It is happening today. The question is whether you are going to govern it or ignore it.

Three forces are converging in 2026 that make an AI acceptable use policy non-optional.

Regulatory enforcement is accelerating. The EU AI Act's obligations are now phasing in, and the most serious violations carry fines of up to 35 million euros or 7% of global annual turnover, whichever is higher. GDPR authorities issued over 1.2 billion euros in fines in 2024. In the US, state-level AI regulations are multiplying. Companies without documented AI governance are increasingly exposed.

Client and partner requirements are tightening. If you handle data for other companies, they are asking about your AI governance as part of vendor assessments, security questionnaires, and contract negotiations. “Do you have an AI acceptable use policy?” is becoming a standard question, right alongside “Do you have a data privacy policy?” Not having one can cost you deals.

The cost of incidents is rising. IBM's Cost of a Data Breach Report put the global average cost of a breach at $4.88 million in 2024, and its 2025 edition found that breaches involving shadow AI cost organizations more than average. When an employee pastes customer data into an unapproved AI tool and that data gets exposed, the financial and reputational damage is real.

An AI acceptable use policy does not prevent AI usage. It governs it. It sets clear boundaries so employees can use AI productively while protecting the organization from compliance and data risks.

Alt text: Chart showing the 12 essential sections every AI acceptable use policy must include, from purpose and scope to policy governance

3. 12 Sections Your AI Policy Template Must Include

A real AI acceptable use policy is not a one-page memo. It is a comprehensive document with specific, actionable sections. Here are the 12 sections every template must include, and what each should cover.

Section 1: Purpose and Scope. State why the policy exists and who it applies to. Be specific: “This policy applies to all full-time employees, part-time employees, contractors, and temporary staff who use AI-powered tools in the course of their work for [Company Name].” Define what counts as “AI tools” so there is no ambiguity about coverage.

Section 2: Definitions. Define every key term. What is an “AI tool”? (Include both generative AI like ChatGPT and embedded AI like Grammarly.) What is “personal data” under your policy? What counts as “confidential information”? Clear definitions eliminate the “I didn’t know that counted” defense.

Section 3: Approved AI Tools. Maintain an explicit list of approved tools with any conditions. Example: “ChatGPT (via paid Team plan only, not free personal accounts), GitHub Copilot (for engineering team only, with code review required), Grammarly Business (all employees).” Unapproved tools should be explicitly noted as prohibited.

Section 4: Prohibited Uses. List specific activities that are never allowed. Do not be vague. Say: “Employees must never enter customer names, email addresses, phone numbers, or any other personally identifiable information into any AI tool.” Specificity is what makes a policy enforceable.

Section 5: Data Handling Rules. This is the most critical section. Create a clear data classification system (covered in detail under “Data Classification” below) that sorts data into Allowed, Conditional, Prohibited, and Regulated tiers, with examples for each.

Section 6: Human Oversight Requirements. Specify when AI outputs must be reviewed by a human. GDPR Article 22 restricts decisions based solely on automated processing that have legal or similarly significant effects on individuals, and the EU AI Act requires human oversight of high-risk AI systems. Define who reviews, what they check, and how review is documented.

Section 7: Disclosure and Transparency. Define when AI usage must be disclosed. Customer communications, published content, code contributions, legal work, and any context where AI involvement would be material information should require disclosure.

Section 8: Training Requirements. Specify that all employees must complete training before using AI tools, define the minimum passing score for any assessment (80% is a common choice), and set a refresh frequency (at least annually, and whenever the policy is updated).

Section 9: Compliance Monitoring. Describe how the organization monitors AI usage and policy adherence. This might include browser extension enforcement, periodic access reviews, usage analytics, or manager attestations.

Section 10: Incident Reporting. Provide a clear process for reporting policy violations, including accidental ones. Employees should know that self-reporting a mistake is encouraged, not punished. Include the reporting channel (email, form, manager, or compliance officer).

Section 11: Enforcement and Consequences. Define a graduated consequence structure: first violation might trigger retraining, repeated violations might result in restricted AI access, serious violations (like deliberately sharing regulated data) might lead to disciplinary action. Be fair but clear.

Section 12: Policy Governance. Name the policy owner (typically the compliance officer, CTO, or CISO), set the review cadence (annually and whenever a relevant regulation changes), and define the update approval process.
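Sections 8 and 11 describe rules precise enough to write down as logic, which is useful if you track training and violations in a system rather than a spreadsheet. The sketch below is a minimal illustration, assuming an 80% pass mark and the three-step escalation described above; the function names, the `Severity` levels, and the consequence labels are hypothetical, not part of any specific product.

```python
from enum import Enum


class Severity(Enum):
    """Hypothetical severity levels for a policy violation."""
    STANDARD = "standard"  # e.g. pasting Conditional data without approval
    SERIOUS = "serious"    # e.g. deliberately sharing regulated data


PASS_THRESHOLD = 0.80  # assumed minimum quiz score (Section 8)


def passed_training(correct: int, total: int, threshold: float = PASS_THRESHOLD) -> bool:
    """Return True if the quiz score meets the minimum passing threshold."""
    if total <= 0:
        raise ValueError("quiz must have at least one question")
    return correct / total >= threshold


def consequence_for(prior_violations: int, severity: Severity) -> str:
    """Map a violation to a graduated consequence (Section 11).

    prior_violations counts earlier violations by the same employee.
    """
    if severity is Severity.SERIOUS:
        return "disciplinary_review"    # serious violations skip the ladder
    if prior_violations == 0:
        return "retraining"             # first violation
    return "restricted_ai_access"       # repeated violations
```

For example, `passed_training(8, 10)` is true and `passed_training(7, 10)` is false, so the second employee would be assigned retraining. Keeping serious violations off the escalation ladder mirrors the policy text: severity, not just count, decides the outcome.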

For a deeper dive into these sections and how they apply across all types of AI policies, see our Complete Guide to AI Policy and Governance.

Writing all 12 sections from scratch typically takes 2-4 weeks of work from a compliance professional. PolicyGuard provides 19+ expert-curated templates that include all 12 sections pre-written, with built-in training modules and quiz questions. You pick, customize, and publish. The hard work is done.

Alt text: Data classification chart for AI tools showing four tiers: Allowed (public data), Conditional (internal data with approval), Prohibited (confidential and personal data), and Regulated (HIPAA, PCI, FERPA protected data)

4. Data Classification: What Can and Cannot Enter AI Tools

The data handling section of your policy is the one that prevents real incidents. It needs to be specific and easy for every employee to understand, regardless of their technical background. Here is the four-tier classification system that covers most organizational needs.

Tier 1: Allowed (Green). Data that can be freely entered into approved AI tools with no restrictions. This includes publicly available company information, published marketing content, general research queries, open-source code, non-sensitive drafts, and training exercises. Low risk.

Tier 2: Conditional (Yellow). Data that can be entered into approved AI tools only with manager approval or after specific redaction. This includes internal documents with sensitive details redacted, anonymized analytics data, non-core internal code, general meeting summaries, high-level project plans, and vendor evaluations. Medium risk.

Tier 3: Prohibited (Orange). Data that must never be entered into any AI tool under any circumstances. This includes customer personally identifiable information (names, emails, phone numbers, addresses), employee personal data, proprietary source code, financial projections and non-public financial data, legal documents and privileged communications, and trade secrets or strategic plans. High risk.

Tier 4: Regulated (Red). Data that is prohibited AND subject to specific legal penalties if mishandled. This includes protected health information under HIPAA, payment card data under PCI DSS, student educational records under FERPA, credit report information under FCRA, biometric data under state laws like Illinois BIPA, and children’s data under COPPA. Legal penalties apply beyond organizational consequences.

Include this classification system in your policy with specific examples relevant to your industry. Employees should be able to look at any piece of data and immediately know which tier it falls into.
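If you want to encode the four tiers in an internal tool (a pre-submission check or a chatbot that answers “can I paste this?”), the decision reduces to a lookup plus a few rules. This is a minimal sketch, assuming a hypothetical mapping of data categories to tiers; each company would define its own categories, and treating the “approval or redaction” condition as a single boolean is a simplification.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Four-tier classification; higher values are more restricted."""
    ALLOWED = 1      # Green: public information
    CONDITIONAL = 2  # Yellow: needs manager approval or redaction
    PROHIBITED = 3   # Orange: never enters an AI tool
    REGULATED = 4    # Red: prohibited and carries legal penalties


# Hypothetical example mapping; replace with your own industry-specific categories.
DATA_TIERS: dict[str, Tier] = {
    "published_marketing": Tier.ALLOWED,
    "open_source_code": Tier.ALLOWED,
    "anonymized_analytics": Tier.CONDITIONAL,
    "vendor_evaluation": Tier.CONDITIONAL,
    "customer_pii": Tier.PROHIBITED,
    "proprietary_source_code": Tier.PROHIBITED,
    "phi_hipaa": Tier.REGULATED,
    "payment_card_pci": Tier.REGULATED,
}


def may_enter_ai_tool(category: str, tool_approved: bool,
                      approved_or_redacted: bool = False) -> bool:
    """Decide whether data of this category may be entered into an AI tool."""
    if not tool_approved:
        return False  # unapproved tools are prohibited outright
    # Unknown categories default to the most restrictive tier.
    tier = DATA_TIERS.get(category, Tier.REGULATED)
    if tier is Tier.ALLOWED:
        return True
    if tier is Tier.CONDITIONAL:
        return approved_or_redacted
    return False  # PROHIBITED and REGULATED data never enter any AI tool
```

Defaulting unknown categories to the Regulated tier is a deliberate fail-closed choice: an employee with data nobody has classified yet gets “no” rather than an accidental “yes.”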

Alt text: Infographic showing 7 common mistakes in AI acceptable use policies including using AI to generate the policy, writing a vague one-pager, no enforcement mechanism, and no audit trail

5. 7 Common Mistakes That Make AI Policies Useless

Most AI acceptable use policies fail not because of bad intentions, but because they are poorly constructed. Here are the seven mistakes we see most often.

Mistake 1: Using AI to generate the policy itself. This is the most ironic and most dangerous mistake. An AI-generated compliance document can contain inaccurate legal references, outdated regulatory information, fabricated citations, and subtly wrong guidance that looks plausible. When your compliance evidence was written by the same technology you are trying to govern, you have a credibility problem that auditors will not overlook.

Mistake 2: Writing a vague one-pager instead of a real policy. “Use AI responsibly and do not share sensitive data” is not a policy. It is a suggestion. Auditors need specifics: which tools are approved, what data types are prohibited, what the training requirements are, and what happens when someone violates the rules. Vague policies offer little or no protection in an investigation.

Mistake 3: No enforcement mechanism beyond email. Sending a PDF to all employees and asking them to “read and follow it” is not enforcement. You have no proof anyone opened the email, no proof anyone read the document, and no proof anyone understood or agreed to follow it. Real enforcement requires active acknowledgment at the point of use.

Mistake 4: No training or comprehension verification. Publishing a policy without training is like posting speed limit signs without teaching anyone to drive. Employees need to understand what the policy means in their daily work, and you need a quiz or assessment to verify they actually understand it.

Mistake 5: Missing data handling specifics. “Be careful with data” is not a data handling rule. Employees need to know exactly which data types are allowed, conditional, prohibited, and regulated. Without the four-tier classification we described above, employees are making judgment calls with no framework.

Mistake 6: No audit trail or evidence collection. When an auditor asks “How do you know your employees follow your AI policy?”, your answer cannot be “We emailed it out” or “We had a meeting about it.” You need timestamped evidence: who acknowledged the policy, when, through which mechanism, and for which policy version.

Mistake 7: Never updating the policy. A policy written in 2023 does not cover the EU AI Act, which entered into force in August 2024 and whose first obligations began applying in February 2025. Regulations change, new AI tools emerge, and organizational needs evolve. A policy that is not reviewed at least annually (and updated when regulations change) becomes a compliance liability instead of protection.
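The review rule in Mistake 7 has two triggers: elapsed time and events. A minimal sketch, assuming a 365-day interval stands in for “at least annually” and that your team records trigger events (a regulation change, a newly adopted AI tool, an incident) as they happen; the function and parameter names are hypothetical.

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=365)  # assumed stand-in for "at least annually"


def review_due(last_review: date, today: date, triggers_since_review: list[str]) -> bool:
    """Return True if the policy needs review now.

    triggers_since_review lists events recorded since the last review,
    such as a regulation change, a new AI tool, or an incident.
    """
    if triggers_since_review:
        return True  # any trigger event forces a review regardless of date
    return today - last_review >= REVIEW_INTERVAL
```

A policy last reviewed on 1 January 2025 is due by 1 January 2026 on the calendar alone, but adopting a new AI tool in June would make it due immediately.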

6. How to Enforce Your Policy (Not Just Publish It)

The gap between “having a policy” and “proving enforcement” is where compliance risk lives. Here is how to close it.

Step 1: Publish and assign. Your policy needs to be published in a system that tracks who has access to it, not emailed as a PDF attachment. Assign the policy to all relevant employees (or specific departments if you have department-level policies). The system should track assignment status.

Step 2: Train and verify. Assign training modules that explain the policy in practical terms. Follow the training with a quiz that verifies comprehension. Track completion status and quiz scores for every employee. Require retraining for employees who do not meet the minimum passing score.

Step 3: Enforce at the point of use. Deploy a browser extension that detects when employees open AI tools (ChatGPT, Claude, Gemini, Copilot, and others) and presents a policy acknowledgment popup. The employee reads the policy highlights, clicks “I Acknowledge,” and the acknowledgment is timestamped and logged. This happens every time, at the moment it matters, with no extra workflow for the employee.

Step 4: Monitor and report. Your compliance dashboard should show real-time status across the organization: how many employees have acknowledged the policy, who has completed training, who has not, and which AI tools are being accessed. When an auditor asks for evidence, export a report and hand it over.
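Steps 3 and 4 come down to two pieces of data handling: recognizing that an AI tool is open, and keeping a timestamped record of who acknowledged which policy version, through which mechanism. The sketch below illustrates that logic in Python; it is not how any particular product works. The host list, the `Acknowledgment` fields, and the helper names are hypothetical, and a real browser extension would run in the browser (typically in JavaScript) and send records to a server.

```python
import csv
import io
from dataclasses import dataclass
from datetime import datetime, timezone
from urllib.parse import urlparse

# Hypothetical watch list; a real deployment would maintain its own.
AI_TOOL_HOSTS = {"chatgpt.com", "claude.ai", "gemini.google.com", "copilot.microsoft.com"}


@dataclass(frozen=True)
class Acknowledgment:
    employee_id: str
    policy_version: str
    mechanism: str             # e.g. "browser_extension"
    tool_host: str
    acknowledged_at: datetime  # stored in UTC


def is_ai_tool(url: str) -> bool:
    """Return True if the URL's host is on the watch list or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in AI_TOOL_HOSTS)


def record_ack(log: list[Acknowledgment], employee_id: str, url: str,
               policy_version: str) -> Acknowledgment:
    """Append a timestamped acknowledgment (called after is_ai_tool matched)."""
    ack = Acknowledgment(
        employee_id=employee_id,
        policy_version=policy_version,
        mechanism="browser_extension",
        tool_host=urlparse(url).hostname or "",
        acknowledged_at=datetime.now(timezone.utc),
    )
    log.append(ack)
    return ack


def coverage(log: list[Acknowledgment], employees: set[str],
             policy_version: str) -> tuple[set[str], set[str]]:
    """Split employees into (acknowledged, pending) for one policy version."""
    acked = {a.employee_id for a in log if a.policy_version == policy_version}
    return employees & acked, employees - acked


def export_csv(log: list[Acknowledgment]) -> str:
    """Render the log as CSV evidence an auditor can review."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["employee_id", "policy_version", "mechanism", "tool_host", "acknowledged_at"])
    for a in log:
        writer.writerow([a.employee_id, a.policy_version, a.mechanism,
                         a.tool_host, a.acknowledged_at.isoformat()])
    return buf.getvalue()
```

Keying coverage on `policy_version` matters: when the policy changes, earlier acknowledgments no longer count, which answers the auditor question “for which policy version?” from Mistake 6.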

PolicyGuard provides this entire enforcement stack: policy publishing with assignment tracking, automatic training with quizzes, browser extension enforcement on 20+ AI tools, and one-click audit report exports. See how it works.

7. AI Policy Generator vs Expert-Curated Templates

You might be tempted to use ChatGPT or another AI tool to generate your AI acceptable use policy. It feels efficient. It is fast. And it is a bad idea. Here is why.

AI-generated policies contain errors you cannot easily spot. AI models hallucinate. They invent legal citations that do not exist, reference regulations that have been superseded, and produce guidance that sounds authoritative but is subtly wrong. In a compliance document, a single inaccurate requirement or a fabricated regulatory reference can create liability.

You cannot defend an AI-generated policy to regulators. When an auditor asks who authored your compliance policy and your answer is “ChatGPT,” you have undermined the credibility of your entire governance program. Regulators expect policies to be authored by qualified professionals who understand the specific regulatory requirements.

AI generators produce generic output. They do not know your industry, your regulatory environment, your organizational structure, or your specific risk profile. A healthcare company and a fintech startup have fundamentally different compliance requirements. AI generators produce one-size-fits-all output that fits nobody well.

The alternative: expert-curated templates. Templates written by compliance professionals start with regulatory accuracy because the authors understand the specific requirements of GDPR, the EU AI Act, SOC 2, HIPAA, and other frameworks. They include industry-specific guidance because different templates exist for different verticals. They are reviewed and updated by humans when regulations change.

PolicyGuard’s 19+ templates are written by compliance professionals, cover every major regulation, and include training modules and quiz questions built in. No AI generation. No hallucinations. No liability from fabricated compliance guidance.

Related guide: AI Policy Generator vs Expert-Curated Templates: Why We Chose Humans (coming soon)

8. How to Choose the Right Template for Your Company

With 19+ templates available, here is how to pick the right starting point.

If you have no AI policy at all: Start with the General AI Acceptable Use Policy template. It covers the fundamentals that every company needs regardless of industry or regulatory environment. You can layer additional templates on top later.

If you handle personal data of EU residents: Add the GDPR AI Data Processing Policy template. It covers the specific GDPR articles that apply to AI tool usage.

If you sell into the EU market: Add the EU AI Act Compliance Policy template. It aligns your governance with the Act's risk-based framework, whose obligations are now phasing in.

If you are preparing for SOC 2: The SOC 2 AI Controls Policy template maps directly to the Trust Services Criteria that auditors evaluate.

If you are in healthcare: The Healthcare AI Policy (HIPAA) template addresses the specific requirements for protecting health information in AI tools.

If you are in financial services: The Financial Services AI Policy template covers SEC, FINRA, and OCC requirements.

If you are not sure: Start with the General AI Acceptable Use Policy. It is comprehensive enough to cover most organizations and provides a solid foundation that you can build on as your governance program matures.

Browse all templates at getpolicyguard.com/templates.

9. Getting Started Today

You do not need to spend weeks building an AI governance program from scratch. Here is the fastest path from zero to audit-ready.

Step 1 (1 minute): Go to PolicyGuard’s template library and select the General AI Acceptable Use Policy template (or whichever template matches your primary compliance need).

Step 2 (2 minutes): Customize it with your company name, approved AI tools list, and any department-specific rules. Training modules and quiz questions are already built in.

Step 3 (2 minutes): Publish the policy, assign it to your employees, and have them install the Chrome browser extension.

From that point forward, every time an employee opens an AI tool, they see your policy and acknowledge it. Every acknowledgment is timestamped and logged. Every training completion and quiz score is tracked. And when an auditor asks for proof, you click Export.

Start your free 14-day trial: getpolicyguard.com

Frequently Asked Questions

What is an AI acceptable use policy? An AI acceptable use policy is a formal document that defines how employees are allowed to use AI tools at work. It covers approved tools, prohibited data types, disclosure requirements, training obligations, and enforcement consequences. It is the foundational document of any AI governance program.

Is an AI acceptable use policy legally required? While no single law requires a document called an “AI acceptable use policy,” the underlying requirements exist across multiple regulations and frameworks. GDPR requires documented data processing policies. The EU AI Act requires transparency and human oversight documentation. SOC 2, an audit framework rather than a law, expects controls over technology usage. An AI acceptable use policy is the practical way to meet all of these requirements in one document.

How long should an AI acceptable use policy be? A comprehensive AI acceptable use policy is typically 3,000 to 5,000 words. It needs to be detailed enough to cover all 12 essential sections but readable enough that employees will actually engage with it. PolicyGuard templates strike this balance with clear formatting and practical language.

Can I use ChatGPT to write my AI acceptable use policy? We strongly recommend against it. AI-generated compliance documents can contain hallucinated legal references, outdated regulatory information, and subtly inaccurate guidance. For a document that may be scrutinized by regulators, human-authored, expert-reviewed content is the only responsible approach.

How often should an AI acceptable use policy be updated? At minimum, annually. Additionally, the policy should be reviewed and updated whenever a relevant regulation changes (such as a new phase of EU AI Act obligations taking effect), whenever your organization adopts new AI tools, or whenever an incident reveals a gap in the current policy.

What is the difference between an AI acceptable use policy and an AI governance framework? An AI acceptable use policy is a specific document that governs employee behavior with AI tools. An AI governance framework is the broader system of policies, processes, and controls that manages AI risk across the organization. The acceptable use policy is one component of the framework. For more on frameworks, see our Complete Guide to AI Policy and Governance.
