This guide is part of our Complete Guide to AI Policy and Governance for Companies, the central resource for everything you need to know about AI compliance in 2026.

An AI policy generator uses artificial intelligence — typically ChatGPT, Claude, or a custom GPT wrapper — to produce AI usage policies for organizations. You describe your company, answer a few questions, and the tool generates a policy document in seconds.

It sounds efficient. It is also the single riskiest shortcut in compliance.

We built PolicyGuard AI, a platform with 28+ AI policy templates, and we deliberately chose to use zero AI in creating them. Every template is written by compliance professionals with regulatory expertise. This was not a cost-saving decision. It was a trust decision.

This article explains why AI policy generators exist, what they get wrong, what they get dangerously right (enough to fool you), and why expert-curated templates are the safer, more enforceable alternative.

The Appeal of AI Policy Generators

The appeal is obvious. Writing an AI policy from scratch is hard. It requires understanding multiple regulatory frameworks (GDPR, EU AI Act, CCPA, HIPAA, SOC 2), translating legal requirements into practical workplace rules, covering every necessary section without gaps, and using language that is specific enough to be enforceable.

For a compliance officer or CTO tasked with “getting an AI policy in place,” the temptation to feed a prompt into ChatGPT and get a 10-page policy in 30 seconds is enormous. And the output looks convincing. It has headers. It has numbered sections. It uses legal-sounding language. It references real regulations by name.

This is precisely the problem.

AI-generated policies are convincing enough to pass a casual review but unreliable enough to fail when they actually matter — in an audit, in a regulatory inquiry, or in an incident investigation.

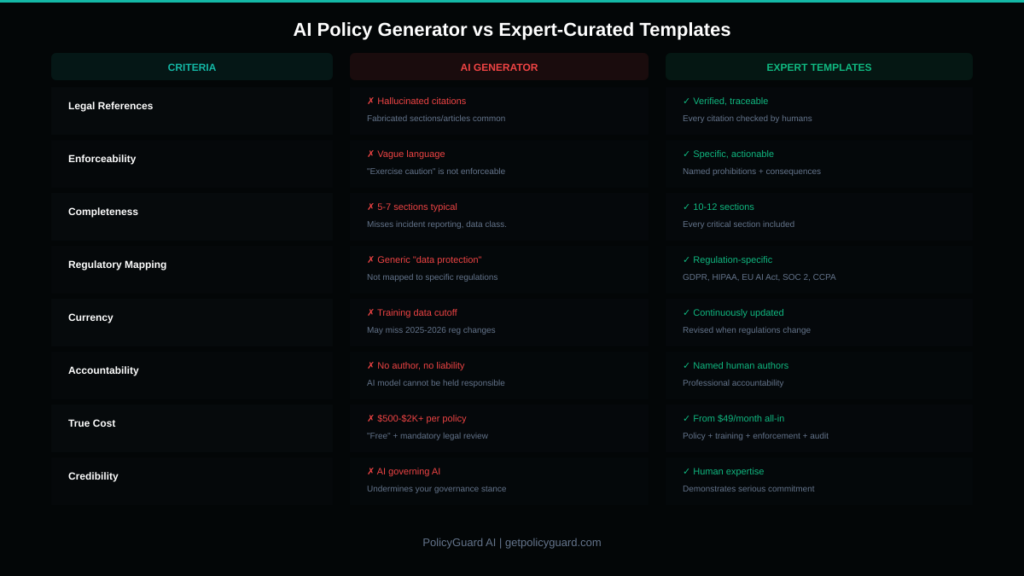

The 7 Problems With AI-Generated Policies

Problem 1: Hallucinated Legal References

Large language models fabricate information. This is not a bug. It is how the technology works. When an LLM generates a policy that references “Section 14(b) of the EU AI Act” or “GDPR Article 38 requirements for AI data processing,” that reference may or may not be accurate. The model does not check. It generates text that is statistically likely to appear in this context.

In a casual blog post, a fabricated reference is embarrassing. In a compliance policy that your organization relies on for regulatory protection, a fabricated reference is a liability. If an auditor checks the citation and it does not exist — or worse, says something different than your policy claims — your credibility is destroyed and your compliance posture is compromised.

We reviewed AI-generated policies from three popular generators and found fabricated regulation references in every single one. Not in edge cases. In the core regulatory sections that the entire policy is built around.
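One way to make this kind of check routine is to pull every citation-like string out of a draft so that a person can look each one up in the official text. The sketch below is a minimal illustration, not a verification tool: the regular expression and the function name `extract_citations` are our own assumptions, and the pattern only catches simple "Article N" and "Section N(x)" references.

```python
import re

# Hypothetical pattern: matches strings such as "Article 38",
# "Section 14(b)", or "Art. 6(1)(f)". It finds candidates only;
# it cannot tell whether a citation is real.
CITATION_PATTERN = re.compile(
    r"\b(?:Article|Art\.|Section|Sec\.)\s+\d+(?:\([0-9a-z]+\))*",
    re.IGNORECASE,
)

def extract_citations(policy_text: str) -> list[str]:
    """Return unique citation-like strings in order of first appearance."""
    seen: dict[str, None] = {}
    for match in CITATION_PATTERN.finditer(policy_text):
        seen.setdefault(match.group(0), None)
    return list(seen)

draft = (
    "Under Section 14(b) of the EU AI Act and GDPR Article 38, "
    "processing follows Article 6(1)(f). See Article 38 again."
)
for citation in extract_citations(draft):
    print(f"[ ] verify against the official text: {citation}")
```

The output is a checklist for a human reviewer. Each item still has to be looked up by hand, because the code has no knowledge of what any regulation actually says.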

Problem 2: Vague Language That Is Unenforceable

AI generators produce language that sounds authoritative but is operationally meaningless. Phrases like “employees should exercise caution when using AI tools” and “sensitive data should not be shared with external AI platforms without appropriate consideration” appear in nearly every AI-generated policy we reviewed.

What does “exercise caution” mean? What qualifies as “appropriate consideration”? If an employee pastes customer data into ChatGPT and you try to enforce consequences, their lawyer will argue — correctly — that “exercise caution” is not a clear prohibition.

Enforceable policies use specific language. “Customer personally identifiable information (PII) including names, email addresses, phone numbers, account numbers, and financial data must never be entered into any AI tool, whether approved or unapproved. Violation of this prohibition will result in disciplinary action up to and including termination.”

That is enforceable. “Exercise caution” is not. AI generators consistently produce the latter type of language because it is safer for the model: vague statements are less likely to be factually wrong. But safety for the model is danger for your organization.
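A rough first pass for vague language can be automated. The sketch below assumes a hand-maintained watch list seeded with phrases quoted in this article; the list and the function name `find_vague_phrases` are illustrative, and a clean result does not mean the policy is enforceable.

```python
# Hypothetical watch list, seeded with phrases quoted in this article.
VAGUE_PHRASES = [
    "exercise caution",
    "appropriate consideration",
    "take appropriate steps",
    "inappropriate use",
    "sensitive information",
]

def find_vague_phrases(policy_text: str) -> list[tuple[int, str]]:
    """Return (line_number, phrase) pairs for each watch-list phrase found."""
    hits = []
    for line_number, line in enumerate(policy_text.splitlines(), start=1):
        lowered = line.lower()
        for phrase in VAGUE_PHRASES:
            if phrase in lowered:
                hits.append((line_number, phrase))
    return hits

sample = (
    "Employees should exercise caution when using AI tools.\n"
    "Customer PII must never be entered into any AI tool.\n"
    "Data must not be shared without appropriate consideration."
)
for line_number, phrase in find_vague_phrases(sample):
    print(f"line {line_number}: replace '{phrase}' with a specific rule")
```

Each flagged line is a prompt for a human to write a specific prohibition, data type, and consequence in its place.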

Problem 3: Missing Critical Sections

A comprehensive AI policy needs 10-12 sections, as we detailed in our AI Policy for Employees guide. AI generators typically produce 5-7 sections, leaving gaps in areas like data classification frameworks, incident reporting procedures, disclosure requirements, and policy governance mechanisms.

These gaps are not obvious to someone who has never written a compliance policy. The generated document looks complete because it is long and well-formatted. But completeness and length are not the same thing. A policy missing an incident reporting section provides zero guidance when an employee accidentally uploads confidential data to an AI tool. A policy missing a data classification framework gives employees no way to determine what data can and cannot be used with AI tools.

The sections that AI generators miss are consistently the operational sections — the ones that tell employees what to do in specific situations. The sections they include are the conceptual sections — purpose statements, general principles, and broad definitions. This is the opposite of what a practical, enforceable policy needs.
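A gap check of this kind can be sketched in a few lines. The example below uses the twelve essential sections this guide lists in its evaluation checklist; the function name `missing_sections` and the sample headings are hypothetical, and the substring matching is deliberately crude, so a heading with the right name can still sit above empty content.

```python
# The twelve essential sections named in this guide's evaluation checklist.
REQUIRED_SECTIONS = [
    "purpose", "scope", "definitions", "approved tools",
    "prohibited activities", "data classification", "human oversight",
    "disclosure", "training", "incident reporting", "enforcement",
    "policy governance",
]

def missing_sections(headings: list[str]) -> list[str]:
    """Return required sections that no heading mentions (case-insensitive)."""
    normalized = [heading.lower() for heading in headings]
    return [
        section for section in REQUIRED_SECTIONS
        if not any(section in heading for heading in normalized)
    ]

# Hypothetical headings from a generated draft.
draft_headings = [
    "1. Purpose", "2. Scope", "3. Definitions",
    "4. General Principles", "5. Approved Tools", "6. Enforcement",
]
for section in missing_sections(draft_headings):
    print(f"missing: {section}")
```

In this made-up draft, the sections reported as missing are mostly operational ones, which is the pattern described above.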

Problem 4: No Regulatory Specificity

If your company processes the personal data of people in the EU, your AI policy needs specific GDPR provisions. If you handle healthcare data, it needs HIPAA-specific language. If you are pursuing a SOC 2 attestation, it needs controls mapped to the SOC 2 Trust Services Criteria.

AI generators produce generic policies. They cover “data protection” in broad terms without mapping to specific regulatory requirements. A policy that says “we comply with applicable data protection laws” is legally meaningless. A policy that says “In accordance with GDPR Article 6(1)(f), the lawful basis for processing personal data through AI tools at this organization is legitimate interests, subject to the balancing test documented in our Data Protection Impact Assessment (Ref: DPIA-AI-2026-001)” is legally defensible.

Expert-curated templates are built for specific regulatory contexts. PolicyGuard’s GDPR template addresses GDPR. The HIPAA template addresses HIPAA. The EU AI Act template addresses the EU AI Act. Each one is written by professionals who understand the specific requirements of that regulation and how they apply to AI tool usage.

AI generators cannot reliably produce this level of regulatory precision because they do not understand the regulations. They generate text that resembles regulatory language without the underlying expertise.

Problem 5: Outdated Information

LLMs have knowledge cutoff dates. GPT-4 and Claude have training data that may not include the most recent regulatory changes, enforcement actions, or compliance guidance. The EU AI Act entered into force in August 2024, and its first obligations began to apply in 2025. Updates to NIST AI RMF guidance were published throughout 2024 and 2025. New state-level AI regulations in the US are being enacted regularly.

An AI generator using a model trained on data from early 2024 will not include any of these developments. The policy it generates may be based on regulatory requirements that have since been updated, expanded, or replaced.

Expert-curated templates are maintained and updated by humans who track regulatory changes as part of their professional work. When a regulation is updated, the corresponding template is reviewed and revised. This is not automated. It requires human judgment about how the regulatory change affects practical AI governance.

Problem 6: Liability Transfer Illusion

When you use an AI policy generator, who is liable if the generated policy is legally insufficient?

Not the AI tool. Not the company that built the generator. You. Your organization adopted the policy, distributed it to employees, and relied on it for compliance. If it turns out that the policy was incomplete, inaccurate, or unenforceable, the regulatory consequences fall on your organization.

AI generators create an illusion that compliance has been handled. The policy exists. It looks professional. It covers the major topics. But “looks professional” and “provides legal protection” are entirely different standards. The illusion is dangerous because it reduces the urgency to have the policy reviewed by someone with actual regulatory expertise.

Expert-curated templates do not eliminate the need for legal review of your specific implementation. But they start from a foundation of accuracy and completeness that AI-generated policies do not provide.

Problem 7: The Irony Problem

There is a fundamental irony in using AI to write your AI governance policy. The policy exists to manage the risks of AI tool usage. One of those risks is that AI generates content that sounds accurate but contains errors. Using AI to generate the governance document means you have already fallen victim to the exact risk you are trying to manage.

If your policy contains a hallucinated legal reference and an employee points it out, your credibility as an organization that understands AI risks is gone. If a regulator discovers that your AI governance policy was itself generated by AI without expert review, the message is clear: this organization does not take AI governance seriously.

This is not a theoretical concern. It is a reputational and legal reality that every organization using AI policy generators should consider.

Alt text: Side-by-side comparison table of AI policy generators versus expert-curated templates across 8 criteria

What AI Generators Get Right

In fairness, AI generators are not useless. They are effective for three things.

Speed. They produce a first draft in seconds. For an organization that has nothing — no policy at all — an AI-generated draft is better than a blank page. It gives you a starting point for human review and revision.

Structure. LLMs are good at organizing content into logical sections. An AI-generated policy will typically have a reasonable structure even if the content within each section is problematic.

Awareness. The process of using an AI policy generator forces the organization to think about what their policy should cover. The questions the generator asks (“What industry are you in?”, “Do you process EU citizen data?”) prompt useful internal discussions.

But a starting point is not a finished product. And the danger of AI generators is that organizations treat the output as a finished product because it looks like one.

Why We Built PolicyGuard Without AI

When we designed PolicyGuard, we made a deliberate decision: every policy template would be written by humans with regulatory expertise. No AI generation. No GPT wrappers. No “AI-assisted” drafting.

Here is why.

Accuracy is non-negotiable in compliance. A compliance document that is 95% accurate is not 95% useful. It is a liability. The 5% that is wrong could be the section that an auditor focuses on, the provision that fails in court, or the gap that allows a data breach to occur. Human experts produce 100% intentional content. They know what they wrote and why they wrote it.

Legal references must be verifiable. Every regulatory reference in a PolicyGuard template points to a real provision in a real regulation. We do not generate references. We cite them. This means every template can withstand regulatory scrutiny because every claim is traceable to its source.

Enforceability requires specificity. Our templates use specific, actionable language because they are written by people who understand what “enforceable” means in a workplace context. Not “exercise caution.” Not “take appropriate steps.” Specific prohibitions, specific classifications, specific consequences.

Trust is the product. Organizations adopting an AI governance policy are making a trust decision. They are trusting that the document they deploy will protect them. That trust should rest on human expertise, not on a language model’s statistical prediction of what a compliance policy should contain.

This is our core differentiator. PolicyGuard is the AI governance platform that does not use AI for governance. We track AI tools. We enforce AI policies. We report on AI compliance. But the policies themselves are written by people who understand the law, the technology, and the operational reality of managing AI in the workplace.

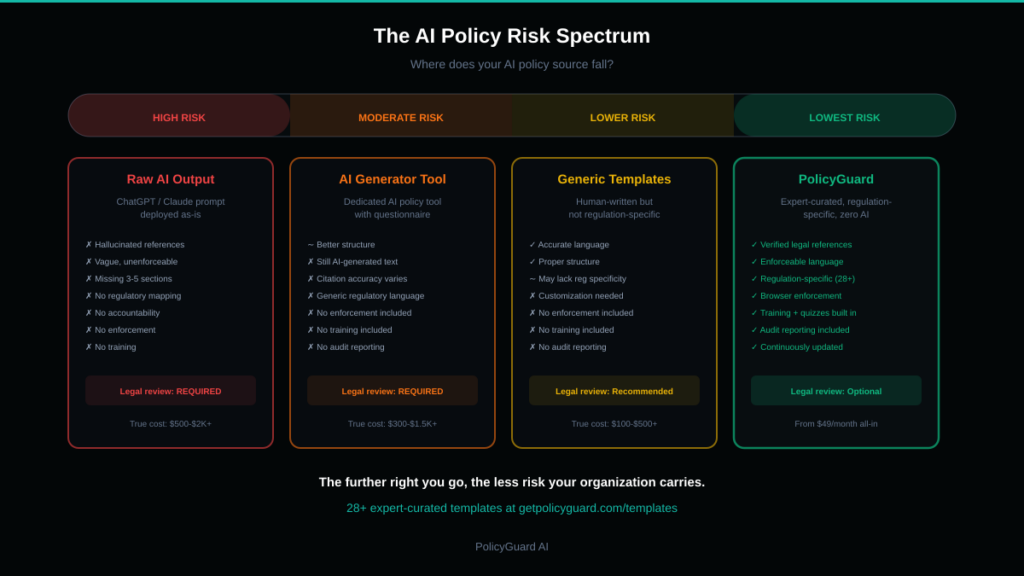

Alt text: Visual showing the risk spectrum from AI-generated policies with high risk to expert-curated templates with low risk

When to Use an AI Generator (and When Not To)

Use an AI generator if: You have zero AI policy today and need something by end of day to address an immediate risk. Generate a draft, then immediately schedule a review with legal counsel or a compliance professional. Do not deploy the generated policy without expert review.

Do not use an AI generator if: You are building a governance program meant to withstand regulatory scrutiny, you operate in a regulated industry (healthcare, finance, legal), you need audit-ready compliance documentation, or you are deploying the policy across your workforce as a binding document.

Use expert-curated templates if: You want a policy that is accurate, complete, enforceable, and audit-ready from day one. You want to customize a proven foundation rather than review an AI-generated draft for errors. You want policy, training, quizzes, and enforcement in a single integrated system.

The Real Cost Comparison

AI policy generator (free or $10-50):

- Policy generated in 30 seconds

- 3-5 hours of legal review to identify and fix errors

- Legal counsel review: $500-2,000 depending on complexity

- No training materials included

- No enforcement mechanism included

- No audit trail

- Re-generation needed when regulations change (plus another legal review)

- True cost: $500-$2,000+ per policy, plus ongoing review costs

Expert-curated template platform (PolicyGuard starts at $49/month):

- Policy selected and customized in 5 minutes

- No error correction needed — templates are pre-reviewed by experts

- Training modules and quizzes included

- Browser extension enforcement included

- Compliance dashboard and audit reporting included

- Templates updated when regulations change

- True cost: $49-349/month for complete governance toolkit

The “free” AI generator can cost more than the paid expert platform once you account for the legal review that responsible organizations will need. And the AI generator still does not include training, enforcement, monitoring, or reporting, the other components of a complete AI governance toolkit.
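The arithmetic behind this comparison can be laid out explicitly. The sketch below uses the dollar figures quoted above; the number of legal reviews per year is our assumption, not a figure from this article, and the function names are illustrative.

```python
def generator_annual_cost(review_cost: float, reviews_per_year: int,
                          tool_cost: float = 0.0) -> float:
    """Tool fee plus one legal review per generated or regenerated policy."""
    return tool_cost + review_cost * reviews_per_year

def platform_annual_cost(monthly_fee: float) -> float:
    """Twelve months of subscription."""
    return monthly_fee * 12

# Figures from this article: $500-2,000 per legal review, $49-349 per month.
# reviews_per_year is an assumption made for illustration only.
for reviews_per_year in (1, 2):
    low = generator_annual_cost(500, reviews_per_year)
    high = generator_annual_cost(2000, reviews_per_year)
    print(f"generator, {reviews_per_year} review(s)/year: ${low:,.0f}-${high:,.0f}")

print(f"platform: ${platform_annual_cost(49):,.0f}-${platform_annual_cost(349):,.0f}")
```

With these inputs the two ranges overlap, so the outcome depends on how many policies and review cycles you assume per year and on which subscription tier applies. The calculation also leaves out the training, enforcement, and reporting that the generator route does not include.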

How to Evaluate Any AI Policy Source

Whether you use a generator, a template, or write from scratch, evaluate the output against these eight criteria before deploying it.

1. Legal reference accuracy. Pick three regulatory references from the policy. Look them up. Do they exist? Do they say what the policy claims they say?

2. Specificity test. Find the section on prohibited activities. Does it name specific actions and specific data types? Or does it use vague language like “inappropriate use” and “sensitive information”?

3. Completeness check. Does it cover all 10-12 essential sections? Specifically: purpose, scope, definitions, approved tools, prohibited activities, data classification, human oversight, disclosure, training, incident reporting, enforcement, and policy governance.

4. Regulatory mapping. If you operate under GDPR, does the policy specifically address GDPR requirements? If HIPAA, does it address HIPAA? Generic “data protection” language is not sufficient.

5. Enforceability test. Pick any prohibition in the policy. If an employee violated it, could you take disciplinary action based solely on the policy language? Would the language survive a challenge from the employee’s legal representation?

6. Operational clarity. Does the policy tell employees exactly what to do in common scenarios? “You want to use ChatGPT to draft a client email. Here is what you must do first.” If the policy does not provide this level of practical guidance, employees will not follow it.

7. Version and date. Is the policy dated? Does it reference current regulations? A policy that still refers to the “proposed EU AI Act” in 2026 (the Act entered into force in 2024, and its obligations have applied in phases since 2025) signals that the content is outdated.

8. Author attribution. Who wrote this? A named compliance professional? A legal team? Or an AI model? The answer matters for accountability and for the credibility of the document if challenged.
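The eight criteria above can be recorded as a simple pass/fail scorecard so that a deployment decision is traceable to a named review. This is a minimal sketch under our own assumptions: the `Review` class and its all-criteria-must-pass rule are illustrative, and the judgments themselves still come from a human reviewer.

```python
from dataclasses import dataclass

# The eight criteria from the checklist above.
CRITERIA = [
    "legal reference accuracy", "specificity", "completeness",
    "regulatory mapping", "enforceability", "operational clarity",
    "version and date", "author attribution",
]

@dataclass
class Review:
    """Pass/fail results keyed by criterion name, filled in by a reviewer."""
    results: dict[str, bool]

    def failed(self) -> list[str]:
        # A criterion that was never reviewed counts as failed.
        return [c for c in CRITERIA if not self.results.get(c, False)]

    def ready_to_deploy(self) -> bool:
        # Assumed rule: deploy only when every criterion passes.
        return not self.failed()

review = Review(results={name: True for name in CRITERIA})
review.results["legal reference accuracy"] = False
print(review.ready_to_deploy(), review.failed())
```

Treating an unreviewed criterion as a failure is a deliberate choice here: it keeps a half-finished review from being read as approval.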

Making the Switch

If you have already deployed an AI-generated policy and want to move to an expert-curated foundation, here is the process.

Step 1: Select the appropriate PolicyGuard template for your primary regulatory environment. If you operate under multiple regulations, start with the one that carries the highest penalties for non-compliance.

Step 2: Compare the AI-generated policy against the expert template section by section. Identify what the generated policy missed, what it got wrong, and what language needs to be replaced.

Step 3: Customize the expert template with your organization-specific details: company name, approved tools list, department-specific addendums, internal contact information for incident reporting.

Step 4: Deploy the updated policy through PolicyGuard with training and enforcement. Require all employees to complete the new training and acknowledge the new policy version.

Step 5: Archive the old AI-generated policy (do not delete it — you may need it for historical reference).

This process typically takes 1-2 days, and it avoids the line-by-line legal review needed to make an AI-generated policy audit-ready.

Browse all 28+ expert-curated templates at getpolicyguard.com/templates. Start your free 14-day trial at getpolicyguard.com/register.

Frequently Asked Questions

Are AI policy generators accurate? AI policy generators produce content that sounds accurate but frequently contains errors. Common issues include hallucinated legal references (citations to regulations or provisions that do not exist), vague language that is unenforceable in practice, missing critical sections like incident reporting and data classification, and outdated regulatory information based on the model’s training data cutoff. Every AI-generated policy should be reviewed by a compliance professional before deployment.

Is it safe to use ChatGPT to write an AI policy? Using ChatGPT or any LLM to write an AI policy creates several risks. The output may contain fabricated legal citations, miss critical regulatory requirements, use language too vague to enforce, and lack the specificity needed for audit readiness. Additionally, there is an irony and credibility risk: using AI to govern AI undermines your organization’s position that it takes AI risks seriously. A ChatGPT-generated draft can be a starting point, but it should never be deployed without thorough expert review.

What is the difference between an AI policy generator and a template? An AI policy generator uses a language model to create a new policy from a prompt, producing unique but unverified content each time. An expert-curated template is a pre-written, pre-reviewed policy document created by compliance professionals with regulatory expertise. Templates are verified for accuracy, completeness, and enforceability before they are offered to organizations. Generators are faster but riskier. Templates are more reliable and come with the accountability of human authorship.

How much does it cost to create an AI policy? Writing from scratch with internal resources costs $5,000-$25,000 in staff time. Using an AI generator is free or low-cost upfront but requires $500-$2,000+ in legal review to identify and correct errors. Expert-curated template platforms like PolicyGuard start at $49/month and include not just the policy but also training, quizzes, enforcement, and audit reporting. The total cost of ownership is lowest with a template platform because it eliminates the need for extensive review and includes all governance components.

Why does PolicyGuard not use AI to generate policies? PolicyGuard deliberately uses zero AI in policy creation because compliance documents require 100% accuracy, verifiable legal references, specific enforceable language, and human accountability. AI language models hallucinate information, produce vague language, and cannot be held accountable for errors. Every PolicyGuard template is written by compliance professionals, reviewed for regulatory accuracy, and updated when regulations change. This is a trust decision: organizations deserve governance documents they can rely on completely.